Understanding the Basics of Tensors

Tensors are fundamental in mathematics and machine learning. They are extensions of concepts like scalars, vectors, and matrices.

This section explains the basics of tensors, including their operations, shapes, sizes, and how they are notated.

Defining Scalars, Vectors, and Matrices

Scalars, vectors, and matrices are the building blocks of tensors.

A scalar is a single number, like a temperature reading. It has zero dimensions, so no index is needed to locate its value.

Vectors are one-dimensional arrays of numbers, representing quantities like velocity with both magnitude and direction.

A matrix is a two-dimensional grid of numbers, useful for operations in systems of equations and transformations.

In more complex applications, a single matrix operation can act on many values at once, for example by applying the same linear transformation to every vector in a dataset. Each element in these structures is a number, which keeps them simple while providing powerful ways to compute in multiple dimensions.

Understanding these elements helps grasp more complex tensor operations.
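As a rough illustration of these three building blocks, the sketch below creates one of each with NumPy. The choice of NumPy and the variable names are assumptions made for this example only.

```python
import numpy as np

scalar = np.array(21.5)               # one temperature reading, zero dimensions
vector = np.array([3.0, 4.0])         # a velocity with two components, one dimension
matrix = np.array([[1.0, 2.0],
                   [3.0, 4.0]])       # a 2 x 2 grid, two dimensions

# ndim reports how many indices are needed to address a single element.
print(scalar.ndim, vector.ndim, matrix.ndim)

# The magnitude of the velocity vector is its Euclidean length.
speed = np.linalg.norm(vector)
print(speed)
```

The direction of the vector is carried by the ratio of its components, while the magnitude is a single scalar.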

Tensor Fundamentals and Operations

A tensor is a multi-dimensional generalization of scalars, vectors, and matrices. Tensors can have any number of dimensions, which lets them hold data such as a color image (height, width, channel) or a whole batch of such images. These data structures are useful in areas like machine learning and scientific computing.

Tensor operations include addition, subtraction, and product operations, much like those used with matrices.

For advanced applications, tensors undergo operations like decomposition that break them into simpler components. These operations allow the manipulation of very large datasets efficiently.

Compact index-based notations for tensor expressions, such as tensor comprehensions, allow frameworks to generate high-performance code for these calculations.

Shape, Size, and Tensor Notation

The shape of a tensor indicates the number of dimensions and size in each dimension. For example, a matrix with 3 rows and 4 columns has a shape of (3, 4). Tensors can extend this concept to more dimensions, expressed as a sequence of numbers.

The size of a tensor refers to the total number of elements it contains.

Understanding these concepts aids in managing the efficiency of computational tasks involving tensors.

Tensor notation usually writes the shape as a tuple, such as (2, 3, 4) for a three-dimensional tensor, which makes it easier to check that the operands of an operation are compatible. The same concise, standardized form appears in areas like tensor decomposition.
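The difference between shape and size can be checked directly. The following is a minimal sketch using NumPy (an assumption; other tensor libraries expose similar attributes), with illustrative array names.

```python
import numpy as np

matrix = np.zeros((3, 4))       # 3 rows, 4 columns
batch = np.zeros((2, 3, 4))     # two such matrices stacked along a new first axis

# shape is a tuple with one entry per dimension; size is the product of the entries.
print(matrix.shape, matrix.size)
print(batch.shape, batch.ndim, batch.size)
```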

Mathematical Foundations for Machine Learning

Understanding the mathematical foundations is crucial for designing and optimizing machine learning algorithms. Core concepts in linear algebra, probability, statistics, and calculus lay the groundwork for effective model development and analysis.

Essential Linear Algebra Concepts

Linear algebra forms the backbone of machine learning.

Concepts like vectors and matrices are central to representing data and transformations. Operations such as matrix multiplication and inversion enable complex computations.

Key elements include eigenvalues and eigenvectors, which are used in principal component analysis for reducing dimensionality in data.

Understanding these fundamentals is essential for both theoretical and practical applications in machine learning.
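To make the idea of eigenvalues and eigenvectors concrete, here is a small sketch using NumPy's `np.linalg.eigh`, which applies to symmetric matrices. The matrix `A` is an arbitrary example chosen for illustration.

```python
import numpy as np

# A small symmetric matrix, chosen only for illustration.
A = np.array([[2.0, 1.0],
              [1.0, 2.0]])

# eigh returns eigenvalues in ascending order and eigenvectors as columns.
eigenvalues, eigenvectors = np.linalg.eigh(A)
print(eigenvalues)

# Each column v satisfies A @ v = lambda * v for its eigenvalue lambda.
left = A @ eigenvectors
right = eigenvectors * eigenvalues
print(np.allclose(left, right))
```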

Probability and Statistics Review

Probability and statistics provide the tools to model uncertainty and make predictions.

Probability distributions, such as Gaussian and Bernoulli, help model different data types and noise, which is inherent in data.

Statistics offers methods to estimate model parameters and validate results.

Concepts like mean, variance, and hypothesis testing are essential for drawing inferences, making predictions, and evaluating the performance of machine learning models.
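The sketch below draws samples from a Gaussian and a Bernoulli distribution and estimates their mean and variance. The parameter values and the seed are arbitrary assumptions for the example.

```python
import numpy as np

rng = np.random.default_rng(0)   # fixed seed so the run is repeatable

# Gaussian samples with mean 2.0 and standard deviation 0.5 (illustrative values).
gaussian = rng.normal(loc=2.0, scale=0.5, size=10_000)

# Bernoulli samples with success probability 0.3, drawn as binomial with n=1.
bernoulli = rng.binomial(n=1, p=0.3, size=10_000)

# Sample statistics approach the true parameters as the sample grows.
gaussian_mean = gaussian.mean()
gaussian_var = gaussian.var()
bernoulli_mean = bernoulli.mean()
print(gaussian_mean, gaussian_var, bernoulli_mean)
```

The estimates will not match the true parameters exactly; the gap is the sampling noise that statistical methods are designed to quantify.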

Calculus for Optimization in Machine Learning

Calculus is vital for optimizing machine learning algorithms.

Derivatives and gradients are used to minimize loss functions in models like neural networks.

Gradient descent, a key optimization technique, relies on these principles to update model weights for achieving the best performance.
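A minimal sketch of gradient descent on a one-parameter loss is shown below. The loss function, starting point, learning rate, and number of steps are all assumptions chosen to keep the example small.

```python
def loss(w):
    # A simple quadratic loss with its minimum at w = 3.
    return (w - 3.0) ** 2

def gradient(w):
    # Derivative of the loss with respect to w.
    return 2.0 * (w - 3.0)

w = 0.0               # initial weight
learning_rate = 0.1   # step size

for _ in range(100):
    # Move against the gradient to reduce the loss.
    w -= learning_rate * gradient(w)

print(w, loss(w))
```

Each step shrinks the distance to the minimum by a constant factor here, because the loss is quadratic. Real models have many weights, and the same update is applied to all of them at once.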

Understanding integrals also aids in computing expectations and probabilities over continuous variables, crucial for models like Gaussian processes. This knowledge ensures efficient and effective learning from data.

Data Structures in Machine Learning

In machine learning, understanding the right data structures is crucial. Key structures like vectors and matrices are foundational, enabling various computations and optimizations. Algebra data structures further enhance the efficiency and capability of machine learning models.

Understanding Vectors and Matrices as Data Structures

Vectors and matrices are basic yet vital data structures in machine learning.

A vector holds an ordered list of numbers, such as the feature values of one sample or the values of one feature across a dataset. Vectors appear in most algorithms and are the objects that linear transformations act on.

Matrices extend this concept to tables of numbers, enabling the storage and manipulation of two-dimensional data.

Libraries like NumPy provide powerful operations for matrices, such as addition, multiplication, and transposition. These operations are essential in training machine learning models, where matrices represent input features, weights, and biases.
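As a sketch of how these operations fit together, the example below treats one matrix as input features and another as weights. The values and the names `X`, `W`, and `b` are illustrative assumptions, not taken from any particular model.

```python
import numpy as np

X = np.array([[1.0, 2.0],
              [3.0, 4.0],
              [5.0, 6.0]])      # 3 samples, 2 features each
W = np.array([[0.5],
              [-1.0]])          # weights mapping 2 features to 1 output
b = np.array([0.1])             # bias added to every output

doubled = X + X                 # addition, element by element
outputs = X @ W + b             # matrix multiplication followed by adding the bias
transposed = X.T                # transposition swaps rows and columns

print(outputs)
print(transposed.shape)
```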

Algebra Data Structures and Their Operations

Algebra data structures include tensors that represent multi-dimensional arrays, supporting more complex data.

These are used extensively in deep learning frameworks like TensorFlow and PyTorch, where tensors handle large volumes of data efficiently.

Operations like tensor decomposition and manipulation play a significant role. Manipulation includes reshaping a tensor or reordering its dimensions, which changes how the elements are indexed without changing the values themselves.
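The following sketch shows reshaping and reordering dimensions with NumPy. The array contents are arbitrary; the point is only that the values survive the change of layout.

```python
import numpy as np

flat = np.arange(24)                 # 24 values: 0, 1, ..., 23
cube = flat.reshape(2, 3, 4)         # same values viewed as a 2 x 3 x 4 tensor

# Reorder the axes so the last axis comes first: new shape is (4, 2, 3).
moved = cube.transpose(2, 0, 1)

# Flattening the reshaped tensor recovers the original order.
restored = cube.reshape(-1)

print(cube.shape, moved.shape)
print(cube[1, 2, 3], moved[3, 1, 2])   # the same element under both layouts
```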

Such data structures allow for implementing complex networks and algorithms with Python, providing robustness and flexibility in machine learning applications.

Introduction to Tensor Operations

Understanding tensor operations is essential for applying machine learning techniques effectively. These operations include element-wise calculations, addition and multiplication, and special functions such as norms, each playing a crucial role in data manipulation and analysis.

Element-Wise Operations

Element-wise operations are applied directly to corresponding elements in tensors of the same shape, or of shapes that can be broadcast to match.

These operations include basic arithmetic like addition, subtraction, multiplication, and division. In practice, they are used to perform computations quickly without the need for complex looping structures.

A common example is the element-wise multiplication of two tensors, often used in neural networks to apply masks or gates. Activation functions are applied element by element in the same way. Because each element is processed independently, the work parallelizes efficiently.

Libraries like NumPy offer built-in functions to handle these tasks efficiently.
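Here is a short sketch of element-wise arithmetic, an element-wise activation, and a mask. ReLU is used as the activation only as an assumed example, and all values are illustrative.

```python
import numpy as np

a = np.array([[1.0, 2.0],
              [3.0, 4.0]])
b = np.array([[10.0, 20.0],
              [30.0, 40.0]])

total = a + b          # each element of a is added to the matching element of b
product = a * b        # element-wise product, not matrix multiplication

# An activation applied element by element: ReLU keeps positives, zeroes negatives.
pre_activation = np.array([[-1.0, 2.0],
                           [3.0, -4.0]])
activated = np.maximum(pre_activation, 0.0)

# A 0/1 mask switches individual elements off through element-wise multiplication.
mask = np.array([[1.0, 0.0],
                 [1.0, 1.0]])
masked = activated * mask

print(product)
print(masked)
```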

Tensor Addition and Multiplication

Tensor addition involves adding corresponding elements of tensors together, provided they have the same shape. This operation is fundamental in neural network computations, for example when a bias is added to a layer's output or when an update is added to the weights during training.

Tensor addition is straightforward and can be performed using vectorized operations for speed.

Matrix multiplication, a specific form of tensor multiplication, is more complex. It involves multiplying rows by columns across matrices and is crucial in transforming data, calculating model outputs, and more.

Efficient implementation of matrix multiplication is vital, as it directly impacts the performance of machine learning models.
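The row-by-column rule can be spelled out with explicit loops and compared against the built-in operator. This is a sketch for illustration only; the loop version is far slower than `@` on real data, and the matrices are arbitrary.

```python
import numpy as np

A = np.array([[1.0, 2.0, 3.0],
              [4.0, 5.0, 6.0]])     # shape (2, 3)
B = np.array([[1.0, 0.0],
              [0.0, 1.0],
              [1.0, 1.0]])          # shape (3, 2)

# Entry (i, j) of the product is row i of A multiplied by column j of B, then summed.
manual = np.zeros((A.shape[0], B.shape[1]))
for i in range(A.shape[0]):
    for j in range(B.shape[1]):
        manual[i, j] = np.sum(A[i, :] * B[:, j])

product = A @ B                     # vectorized matrix multiplication
print(product)
print(np.array_equal(manual, product))
```

The inner dimensions must agree (3 and 3 here), and the result takes the outer dimensions, giving a 2 x 2 matrix.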

Norms and Special Tensor Functions

Norms describe the size or length of tensors and are crucial for evaluating tensor properties such as magnitude.

The most common norms include the L1 and L2 norms. The L1 norm is the sum of absolute values, emphasizing sparsity, while the L2 norm is the square root of summed squares, used for regularization and controlling overfitting.
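Both norms can be computed by hand or with `np.linalg.norm`. The vector below is an arbitrary example.

```python
import numpy as np

v = np.array([3.0, -4.0, 0.0])

# L1 norm: sum of absolute values.
l1_manual = np.sum(np.abs(v))
l1 = np.linalg.norm(v, ord=1)

# L2 norm: square root of the sum of squares (the default for vectors).
l2_manual = np.sqrt(np.sum(v ** 2))
l2 = np.linalg.norm(v)

print(l1, l2)
```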

Broadcasting is a special mechanism that allows operations on tensors of different but compatible shapes by virtually expanding the smaller tensor's dimensions as needed.

Broadcasting simplifies operations without requiring explicit reshaping of data, enabling flexibility and efficiency in mathematical computations.
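A small sketch of broadcasting in NumPy follows. The data is illustrative, and the rules shown are NumPy's; other libraries follow similar but not necessarily identical conventions.

```python
import numpy as np

X = np.array([[1.0, 2.0],
              [3.0, 4.0],
              [5.0, 6.0]])          # shape (3, 2)

# The column means have shape (2,); they are stretched across all 3 rows.
column_means = X.mean(axis=0)
centered = X - column_means

# A (3, 1) column and a (2,) row broadcast to a full (3, 2) grid.
column = np.array([[1.0], [2.0], [3.0]])
row = np.array([10.0, 20.0])
grid = column + row

print(centered)
print(grid)
```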

Understanding these operations helps maximize the functionality of machine learning frameworks.

Practical Application of Tensor Operations

Tensor operations are essential in machine learning. They are used to perform complex calculations and data manipulations. Tensors are crucial in building and training models efficiently. They enable the construction of layers and algorithms that are fundamental to modern AI systems.

Tensor Operations in Machine Learning Algorithms

Tensors are data structures that are fundamental in machine learning. They allow efficient representation of data in higher dimensions. By using tensors, algorithms can process multiple data points at once. This enhances the speed and capability of learning processes.

Tensor operations like addition, multiplication, and decomposition are used to manipulate data.

For example, tensor decomposition factors a large tensor into smaller components that are cheaper to store and process.

Tensor operations enable high-performance machine learning abstractions. They enhance computing efficiency, helping in faster data processing. These operations are vital for transforming and scaling data in algorithms.

Using Tensors in Neural Networks and Deep Learning

In neural networks, tensors are used to construct layers and networks. They help in structuring the flow of data through nodes. Tensors manage complex operations in training deep learning models.

Tensors allow implementation of various network architectures like convolutional neural networks (CNNs) and recurrent neural networks (RNNs). These architectures rely on tensor operations to process different dimensions of data effectively.

Deep learning techniques leverage tensor operations for backpropagation and optimization, which are key in model accuracy.

Tensor operations help in managing intricate calculations, making them indispensable in neural networks.

Using tensor decompositions helps in compressing models, thus saving computational resources. This efficiently supports complex neural network operations in various practical applications.

Leveraging Libraries for Tensor Operations

Popular libraries like TensorFlow, PyTorch, and NumPy simplify tensor operations in machine learning. These tools are crucial for handling complex computations efficiently and boosting development speed.

Introduction to TensorFlow and PyTorch

TensorFlow and PyTorch are widely used in Python for machine learning and AI tasks.

TensorFlow, created by Google, offers flexibility and control through its computation-graph model. Recent versions run operations eagerly by default and can compile Python functions into graphs, which makes it well suited to deployment across various platforms. TensorFlow can handle both research and production requirements effectively.

PyTorch, developed by Facebook (now Meta), is popular due to its dynamic computation graph. It allows for more intuitive debugging and ease of experimentation. PyTorch is favored in research settings because of its straightforward syntax and Pythonic nature.

Both libraries support GPU acceleration, which is essential for handling large tensor operations quickly.

NumPy for Tensor Computations

NumPy is another powerful Python library, fundamental for numerical computations and array manipulation.

Though not specifically designed for deep learning like TensorFlow or PyTorch, NumPy excels in handling arrays and matrices. This makes it a valuable tool for simpler tensor calculations.

With support for broadcasting and a wide variety of mathematical functions, NumPy is highly efficient for numerical tasks.

It acts as a base for many other libraries in machine learning. While it lacks GPU support, NumPy’s simplicity and performance in handling local computations make it indispensable for initial data manipulation and smaller projects.

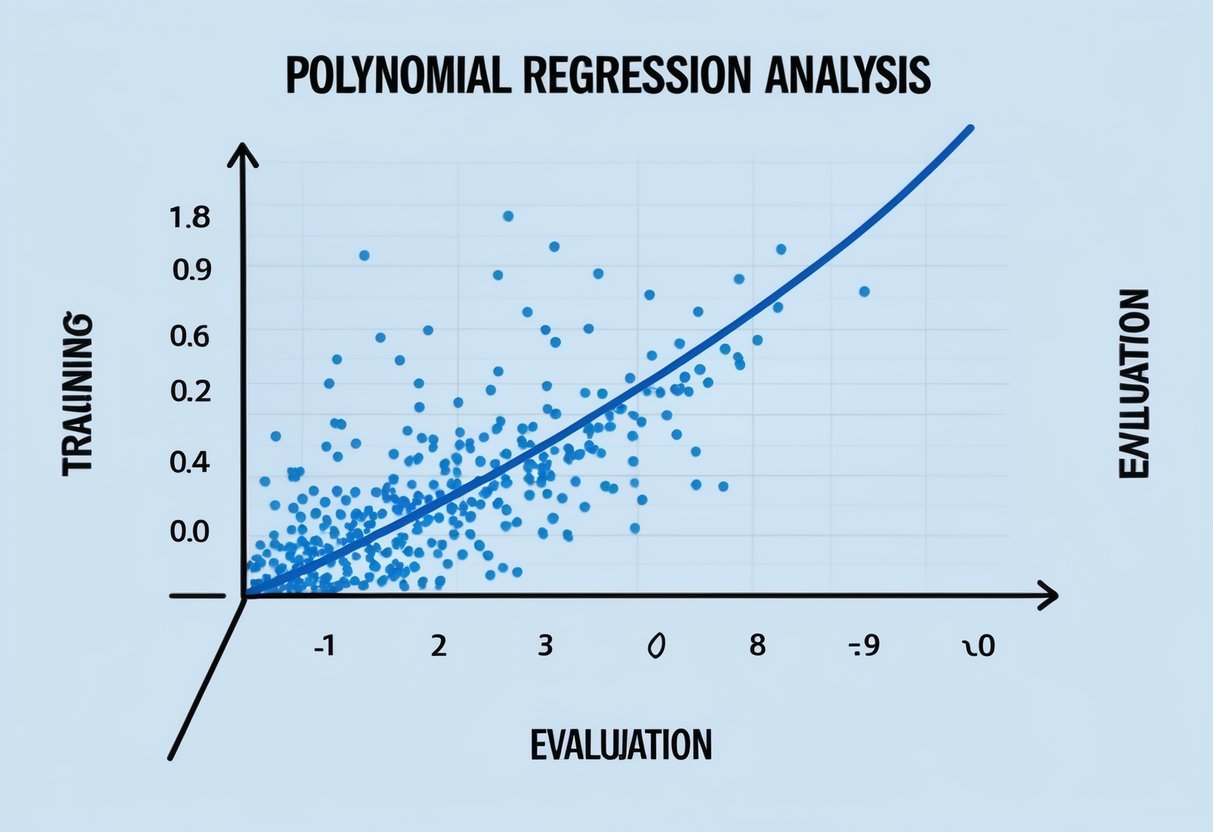

Dimensionality Reduction and Feature Extraction

Dimensionality reduction helps manage complex datasets by reducing the number of variables. Feature extraction plays a key role in identifying important data patterns. These techniques include methods like Principal Component Analysis (PCA) and Singular Value Decomposition (SVD), which are essential in data science and tensor operations by simplifying models and improving computation.

Exploring PCA for Dimensionality Reduction

PCA is a popular method used to reduce the dimensionality of large datasets while preserving important information. It works by converting the original data into a set of principal components. These components are new variables that are linear combinations of the original variables. These components capture the variance in the data. The first few principal components usually explain most of the variability, making them highly useful for analysis.

In practice, PCA helps eliminate noise and redundant features, allowing algorithms to operate more efficiently. This method is particularly beneficial in data science for tasks like feature extraction and machine learning. Here, it can simplify data input while retaining critical properties needed for accurate predictions.
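The steps of PCA can be sketched with NumPy alone: center the data, compute the covariance matrix, and take its eigenvectors. The synthetic dataset below is an assumption made for the example; in practice a library implementation would normally be used.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic 2-feature data lying close to a line, so one direction dominates.
t = rng.normal(size=200)
X = np.column_stack([t, 2.0 * t + 0.1 * rng.normal(size=200)])

# Step 1: center each feature at zero.
X_centered = X - X.mean(axis=0)

# Step 2: covariance matrix of the features (columns are variables).
covariance = np.cov(X_centered, rowvar=False)

# Step 3: eigenvectors of the covariance matrix are the principal directions.
eigenvalues, eigenvectors = np.linalg.eigh(covariance)
order = np.argsort(eigenvalues)[::-1]          # largest variance first
eigenvalues = eigenvalues[order]
eigenvectors = eigenvectors[:, order]

# Fraction of the total variance captured by each component.
explained_ratio = eigenvalues / eigenvalues.sum()

# Step 4: project onto the first component to get a 1-D representation.
projected = X_centered @ eigenvectors[:, :1]

print(explained_ratio)
print(projected.shape)
```

Because the second feature is almost a multiple of the first, nearly all of the variance falls on the first component, and the second can be dropped with little loss.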

Singular Value Decomposition (SVD)

SVD is another key technique used for dimensionality reduction and feature extraction. This method factorizes a matrix into three components (U, Σ, V*), where Σ is a diagonal matrix of non-negative singular values, and the factorization can reveal underlying structures in data. Keeping only the largest singular values gives the best low-rank approximation of the matrix, so data can be compressed with minimal loss of information. SVD is especially useful in data science for handling large-scale datasets.

By breaking down matrices, SVD helps in tasks such as image compression and noise reduction, making it a powerful tool for feature extraction. Additionally, it plays a significant role in optimizing large-scale problems by improving the efficiency of computations, a critical aspect in handling vast dimensional data.
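The sketch below factorizes a small random matrix with `np.linalg.svd` and builds a rank-2 approximation from the two largest singular values. The matrix size and the rank `k` are arbitrary choices for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
A = rng.normal(size=(6, 4))

# Reduced SVD: U is (6, 4), S holds 4 singular values, Vt is (4, 4).
U, S, Vt = np.linalg.svd(A, full_matrices=False)

# Multiplying the three factors back together recovers A.
reconstructed = U @ np.diag(S) @ Vt

# Keep only the k largest singular values for a compressed approximation.
k = 2
A_k = U[:, :k] @ np.diag(S[:k]) @ Vt[:k, :]

# The Frobenius-norm error equals the root of the sum of the discarded squares.
error = np.linalg.norm(A - A_k)
expected_error = np.sqrt(np.sum(S[k:] ** 2))

print(S)
print(error, expected_error)
```

Storing `U[:, :k]`, `S[:k]`, and `Vt[:k, :]` takes fewer numbers than storing `A` once the matrix is large and `k` is small, which is the basis of SVD-based compression.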

Advanced Topics in Tensor Algebra

In advanced tensor algebra, differentiation and optimization are crucial for improving machine learning models. Understanding these processes leads to better handling of tensor operations.

Gradients and Differential Operations

Gradients play a key role in machine learning by guiding how models update their parameters. Differentiation involves calculating the gradient, which tells how much a function output changes with respect to changes in input. For tensor-valued parameters, the gradient is itself a tensor with the same shape as the parameters, holding one partial derivative per element. Gradients help in adjusting tensor-based models to minimize errors gradually. Techniques like backpropagation leverage these gradient calculations extensively, making them essential in training neural networks. Thus, mastering differentiation and gradient calculation is vital for those working with machine learning models that rely on tensor operations.
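One way to build confidence in a gradient formula is to compare it with a finite-difference estimate. The sketch below does this for a mean squared error loss of a linear model. The loss, the random data, and the function names are assumptions for the example; deep learning frameworks compute such gradients automatically.

```python
import numpy as np

def mse_loss(w, X, y):
    # Mean squared error of the linear prediction X @ w.
    residual = X @ w - y
    return np.mean(residual ** 2)

def mse_gradient(w, X, y):
    # Analytic gradient of the loss with respect to w; same shape as w.
    return 2.0 * X.T @ (X @ w - y) / len(y)

rng = np.random.default_rng(0)
X = rng.normal(size=(50, 3))
y = rng.normal(size=50)
w = rng.normal(size=3)

analytic = mse_gradient(w, X, y)

# Central finite differences: nudge one weight at a time and watch the loss.
eps = 1e-6
numeric = np.zeros_like(w)
for i in range(w.size):
    step = np.zeros_like(w)
    step[i] = eps
    numeric[i] = (mse_loss(w + step, X, y) - mse_loss(w - step, X, y)) / (2 * eps)

print(analytic)
print(numeric)
```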

Optimization Techniques in Tensor Algebra

Optimization techniques are necessary to improve the performance of machine learning models. In tensor algebra, optimization involves finding the best way to adjust model parameters to minimize a loss function. Algorithms like stochastic gradient descent (SGD) and Adam are widely used. These methods iteratively update the parameter tensors, using gradients of the loss, to achieve the most accurate predictions. Tensor decomposition is another technique that simplifies complex tensor operations, making calculations faster and more efficient. These optimization strategies help harness the full potential of tensor operations, thereby improving the overall efficiency and accuracy of machine learning models significantly.

The Role of Tensors in Quantum Mechanics

Tensors play a critical role in quantum mechanics by modeling complex systems. They represent quantum states, operations, and transformations, allowing for efficient computation and analysis in quantum physics.

Quantum Tensors and Their Applications

In quantum mechanics, tensors are fundamental for describing multi-particle systems. They allow scientists to manage the high-dimensional state spaces that are typical in quantum computing. Using tensor networks, these multi-dimensional arrays can handle the computational complexity of quantum interactions efficiently.

Tensors also enable the simulation of quantum states and processes. In quantum computer science, they are used to execute operations like quantum gates, essential for performing calculations with quantum algorithms. For instance, tensor methods contribute to quantum machine learning, enhancing the capability to process data within quantum frameworks.

Quantum tensors simplify the representation of entangled states, where particles exhibit correlations across large distances. They allow for the efficient decomposition and manipulation of these states, playing a vital role in various quantum technologies and theoretical models. This makes tensors indispensable in advancing how quantum mechanics is understood and applied.
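As a rough numerical sketch of these ideas, the example below builds a two-qubit state with a tensor (Kronecker) product and applies two standard gates to produce an entangled state. It uses plain NumPy rather than a quantum computing library, and the variable names are illustrative.

```python
import numpy as np

zero = np.array([1.0, 0.0])                      # single-qubit state |0>

# Standard single-qubit Hadamard gate and identity.
H = np.array([[1.0, 1.0],
              [1.0, -1.0]]) / np.sqrt(2.0)
I2 = np.eye(2)

# Two-qubit CNOT gate: flips the second qubit when the first is |1>.
CNOT = np.array([[1.0, 0.0, 0.0, 0.0],
                 [0.0, 1.0, 0.0, 0.0],
                 [0.0, 0.0, 0.0, 1.0],
                 [0.0, 0.0, 1.0, 0.0]])

# The joint state of two qubits is the tensor product of the single-qubit states.
state = np.kron(zero, zero)                      # |00>, a vector of length 4

# A gate acting on the first qubit only is the tensor product H (x) I.
state = np.kron(H, I2) @ state
bell = CNOT @ state                              # (|00> + |11>) / sqrt(2)

# Viewed as a 2 x 2 matrix, a product state has rank 1; an entangled state does not.
product_rank = np.linalg.matrix_rank(np.kron(zero, zero).reshape(2, 2))
bell_rank = np.linalg.matrix_rank(bell.reshape(2, 2))

print(bell)
print(product_rank, bell_rank)
```

The state vector doubles in length with each added qubit, which is why tensor network methods that avoid storing the full vector matter for larger systems.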

The Importance of Practice in Mastering Tensor Operations

Mastering tensor operations is crucial in the fields of AI and machine learning. Consistent practice allows individuals to develop comfort with complex mathematical calculations and apply them to real-world scenarios effectively.

Developing Comfort with Tensor Calculations

Regular practice with tensors helps in building a strong foundation for understanding complex machine learning strategies. It involves becoming familiar with operations such as addition, multiplication, and transformations.

By practicing repeatedly, one can identify patterns and develop strategies for solving tensor-related problems. This familiarity leads to increased efficiency and confidence in handling machine learning tasks.

Additionally, seasoned practitioners can spot errors more quickly, allowing them to achieve successful outcomes in their AI projects.

Overall, comfort with these operations empowers users to handle more advanced machine learning models effectively.

Practical Exercises and Real-world Applications

Engaging in practical exercises is essential for applying theoretical knowledge to actual problems. Hands-on practice with real-world data sets allows learners to understand the dynamic nature of tensor operations fully.

Projects that simulate real-world applications can deepen understanding by placing theories into context. The projects often involve optimizing prediction models or improving computation speed using tensors.

Furthermore, these exercises prepare individuals for tasks they might encounter in professional settings. Participating in competitions or collaborative projects may also refine one’s skills.

Practicing in this manner unlocks creative solutions and innovative approaches within the ever-evolving landscape of AI and machine learning.

Frequently Asked Questions

Tensors are vital in machine learning for their ability to handle complex data structures. They enhance algorithms by supporting high-performance computations. Understanding tensor calculus requires grasping key mathematical ideas, and Python offers practical tools for executing tensor tasks. The link between tensor products and models further shows their importance, while mastery in foundational math aids effective use of TensorFlow.

What role do tensors play in the field of machine learning?

Tensors are used to represent data in multiple dimensions, which is crucial for processing complex datasets in machine learning. They facilitate operations like tensor decomposition and transformations, enabling algorithms to work efficiently with large-scale data.

How do tensor operations enhance the functionality of machine learning algorithms?

Tensor operations express a computation over whole arrays at once, which lets libraries run it with optimized, parallel code. This increases the speed of learning algorithms and makes it practical to process large, intricate datasets.

Which mathematical concepts are essential for understanding tensor calculus in machine learning?

Key concepts include linear algebra, calculus, and matrix decompositions. Understanding these basics helps in grasping tensor operations and their applications in machine learning, including tensor decomposition techniques.

In what ways can Python be used to perform tensor operations?

Python, especially with libraries like NumPy, TensorFlow, and PyTorch, allows for efficient tensor computations. It enables the handling of large datasets and complex operations, making it a popular choice for implementing and experimenting with machine learning models.

Can you explain the relationship between tensor products and machine learning models?

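The tensor product combines two tensors into a higher-order tensor that contains every pairwise product of their elements; for two vectors it is the outer product. In machine learning models, this lets features from different sources be combined so that a model can learn from their interactions. By combining information in different dimensions, tensor products can increase the learning capacity of machine learning algorithms, bridging various data forms into cohesive models.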

What foundational mathematics should one master to work effectively with TensorFlow?

To effectively work with TensorFlow, one should master calculus, linear algebra, and statistics. These foundational skills aid in constructing and optimizing machine learning models. They make TensorFlow’s powerful capabilities more accessible and manageable for practitioners.