Understanding Classification Metrics

Classification metrics are crucial in evaluating the performance of classification models. They help determine how well a model is performing in distinguishing between classes, which is especially important for decision-making in various applications.

These metrics allow practitioners to gauge the accuracy, precision, and other key indicators of model performance.

Importance of Classification Metrics

Classification metrics are essential for assessing the quality of classification models. They offer a way to quantify how well models predict the correct class for each instance.

By using these metrics, one can gain insights into the strengths and weaknesses of a model, allowing for better optimization and enhancement in different applications.

For instance, in medical diagnosis, accurate classification can significantly impact treatment decisions. Classification metrics such as accuracy, precision, and recall provide different perspectives on model performance. Accuracy gives an overall view, while precision focuses on the correctness of positive predictions.

Recall, on the other hand, emphasizes the ability to find all positive instances. These metrics are balanced by the F1 score, which offers a single measure by considering both precision and recall.

Types of Classification Metrics

Several types of classification metrics are used to evaluate model performance in classification problems.

A commonly used tool is the confusion matrix, which presents the counts of true positives, false positives, false negatives, and true negatives. This matrix provides a comprehensive overview of the model’s outcomes, and most other metrics are computed from it.

Further metrics include precision, recall, and F1-score. Precision indicates how many of the predicted positives are actually true positives, while recall measures how many true positives are captured by the model out of all possible positive instances.

The F1 score combines these two metrics into a single value, helpful in situations with imbalanced classes. The area under the ROC curve (AUC-ROC) is another metric, which assesses the trade-off between true positive rate and false positive rate, highlighting the model’s ability to distinguish between classes.

Basics of the Confusion Matrix

The confusion matrix is a tool used in classification problems to evaluate the performance of a model. It helps identify true positives, true negatives, false positives, and false negatives in both binary and multi-class classification scenarios.

Defining the Confusion Matrix

For binary classification tasks, the confusion matrix is a simple 2×2 table. This matrix displays the actual versus predicted values. The four outcomes include True Positive (TP), where the model correctly predicts the positive class, and True Negative (TN), where it correctly predicts the negative class.

False Positive (FP), often called a Type I Error, occurs when the model incorrectly predicts the positive class, while False Negative (FN), or Type II Error, arises when the model fails to identify the positive class.

The matrix’s structure is crucial for understanding a model’s strengths and weaknesses. In multi-class classification, this matrix extends beyond 2×2 to accommodate multiple categories, impacting how each class’s performance is assessed.
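As a minimal sketch of how the four cells are tallied, the snippet below counts them from two small label lists. The helper name `confusion_counts` and the sample labels are illustrative assumptions, with 1 standing for the positive class.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Tally TP, FP, FN, TN for a binary problem (hypothetical helper)."""
    tp = fp = fn = tn = 0
    for actual, predicted in zip(y_true, y_pred):
        if predicted == positive and actual == positive:
            tp += 1  # correctly predicted positive
        elif predicted == positive:
            fp += 1  # Type I error: predicted positive, actually negative
        elif actual == positive:
            fn += 1  # Type II error: predicted negative, actually positive
        else:
            tn += 1  # correctly predicted negative
    return {"TP": tp, "FP": fp, "FN": fn, "TN": tn}


# Made-up labels for illustration only.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0]
counts = confusion_counts(y_true, y_pred)
print(counts)
```

For a multi-class problem the same idea applies per class, treating one class as positive and all others as negative.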

Reading a Confusion Matrix

Reading a confusion matrix involves analyzing the count of each category (TP, TN, FP, FN) to gain insights.

The model’s accuracy is determined by the sum of TP and TN over the total number of predictions. Precision is calculated as TP divided by the sum of TP and FP, indicating how many selected items were relevant.

Recall is calculated as TP divided by the sum of TP and FN, showing the ability of the model to find the actual positive examples. Analyzing these components is essential whether the dataset is balanced or imbalanced. High accuracy may not reflect the model’s performance on imbalanced datasets, where class frequency varies significantly.
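The calculations described above can be worked through with a handful of numbers. The counts below are hypothetical and chosen only to make the arithmetic easy to follow.

```python
# Hypothetical confusion-matrix counts, chosen for illustration.
tp, tn, fp, fn = 40, 45, 5, 10

total = tp + tn + fp + fn
accuracy = (tp + tn) / total   # share of all predictions that are correct
precision = tp / (tp + fp)     # share of predicted positives that are correct
recall = tp / (tp + fn)        # share of actual positives that are found

print(f"accuracy={accuracy:.3f} precision={precision:.3f} recall={recall:.3f}")
```

Here accuracy is 85/100, precision is 40/45, and recall is 40/50, so the three numbers describe different aspects of the same set of predictions.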

Metrics Derived from the Confusion Matrix

The confusion matrix is a valuable tool in evaluating the performance of classification models. It provides the foundation for calculating accuracy, precision, recall, F1-score, specificity, and sensitivity. These metrics offer different insights into how well a model is performing.

Accuracy

Accuracy refers to the ratio of correctly predicted observations to the total observations. It is calculated using the formula:

\[ \text{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN} \]

where TP is true positives, TN is true negatives, FP is false positives, and FN is false negatives.

This metric is useful in balanced datasets but can be misleading in cases with high levels of class imbalance.

Accuracy provides an overview of the model’s performance, but it doesn’t distinguish between different types of errors. In situations where one class is more important, or where data is imbalanced, other metrics like recall or precision may be needed to provide a more nuanced evaluation.

Precision and Recall

Precision is the ratio of correctly predicted positive observations to the total predicted positives. It is calculated as:

\[ \text{Precision} = \frac{TP}{TP + FP} \]

High precision indicates that few of the predicted positives are false positives.

Recall, or sensitivity, measures the ability of a model to find all relevant instances. It is expressed as:

\[ \text{Recall} = \frac{TP}{TP + FN} \]

Together, precision and recall provide insights into the classification model’s balance. High recall indicates that the model returns most of the positive results, yet this may come at the cost of more false positives if precision isn’t considered.

F1-Score

The F1-score is the harmonic mean of precision and recall, helping to balance the two metrics. It is especially useful when dealing with imbalanced datasets. The formula for F1-score is:

\[ \text{F1-Score} = 2 \times \frac{\text{Precision} \times \text{Recall}}{\text{Precision} + \text{Recall}} \]

An F1-score close to 1 signifies both high precision and recall. This score is critical in applications where balancing false positives and false negatives is important. It prioritizes models that achieve a good balance between capturing relevant data and maintaining low error rates.
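To illustrate why a harmonic mean is used, the sketch below compares a balanced model with a lopsided one. The function name `f1_from` and the input values are assumptions made for the example.

```python
def f1_from(precision, recall):
    """Harmonic mean of precision and recall; returns 0.0 when both are zero."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


balanced = f1_from(0.8, 0.8)        # both metrics are good
lopsided = f1_from(0.95, 0.10)      # high precision, very low recall
arithmetic_mean = (0.95 + 0.10) / 2

print(f"balanced F1={balanced:.3f}")
print(f"lopsided F1={lopsided:.3f} vs arithmetic mean={arithmetic_mean:.3f}")
```

The lopsided case scores far below its arithmetic mean, which is the sense in which F1 rewards models whose precision and recall are both reasonably high.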

Specificity and Sensitivity

Specificity measures the proportion of actual negatives that the model correctly identifies as negative. It is defined as:

\[ \text{Specificity} = \frac{TN}{TN + FP} \]

This metric is essential when false positives have a high cost.

On the other hand, sensitivity (or recall) focuses on capturing true positives. These two metrics provide a detailed view of the model’s strengths and weaknesses in distinguishing between positive and negative classes. A complete evaluation requires considering both, especially in domains like medical testing, where false negatives and false positives can have different implications.
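A small worked example for a medical-testing scenario, using invented counts for 100 patients with a condition and 900 without it:

```python
# Hypothetical screening results, for illustration only.
tp, fn = 90, 10     # patients who have the condition
tn, fp = 850, 50    # patients who do not have the condition

sensitivity = tp / (tp + fn)   # same quantity as recall
specificity = tn / (tn + fp)   # share of actual negatives correctly cleared

print(f"sensitivity={sensitivity:.3f} specificity={specificity:.3f}")
```

In this sketch the test misses 10% of true cases while raising a false alarm for roughly 5.6% of healthy patients, two error rates that would usually be weighed separately.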

Advanced Evaluation Metrics

Understanding advanced evaluation metrics is crucial in analyzing the performance of classification models. These metrics help provide a deeper view of how well the model distinguishes between classes, especially in scenarios where imbalanced datasets might skew basic metrics like accuracy.

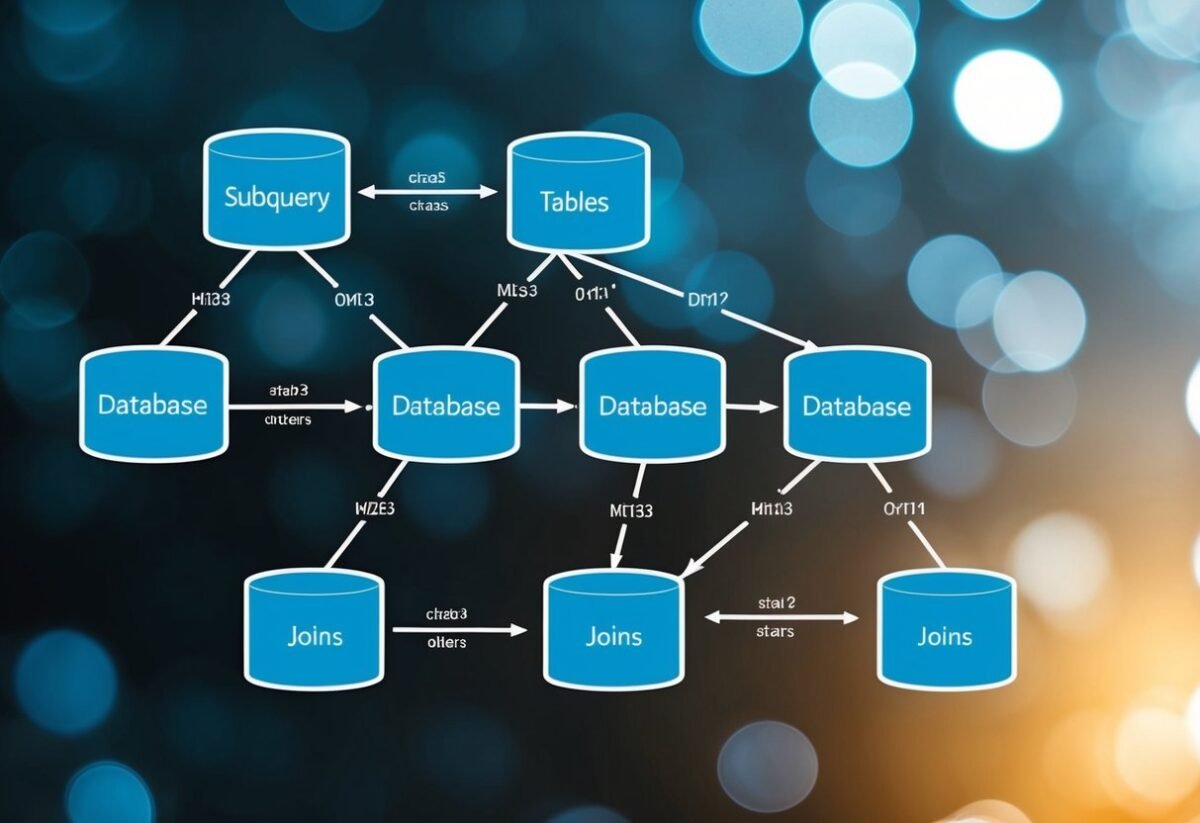

ROC Curves and AUC-ROC

ROC (Receiver Operating Characteristic) curves plot the true positive rate (TPR) against the false positive rate at various threshold settings. This graph is instrumental in visualizing the diagnostic ability of a binary classifier.

The area under the ROC curve, known as AUC-ROC, quantifies the overall performance, where a value of 1 indicates perfect classification and 0.5 suggests random guessing.

Models with a high AUC-ROC are better at distinguishing between the classes. This is particularly helpful when dealing with class imbalance, offering a more comprehensive measure than accuracy alone.

Analysts often compare models based on their AUC scores to decide which model fares best under various conditions. It is worth noting that while AUC-ROC serves as a powerful metric, it summarizes performance across all thresholds and does not account for differing costs of false positives and false negatives.
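One way to build intuition for AUC-ROC is its ranking interpretation: it equals the probability that a randomly chosen positive instance receives a higher score than a randomly chosen negative one. The sketch below computes it that way on toy data; the function name `roc_auc_pairwise` is an assumption, and the quadratic loop is meant for clarity rather than speed.

```python
def roc_auc_pairwise(y_true, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly.

    Tied scores count as half a correct pair.
    """
    pos = [s for y, s in zip(y_true, scores) if y == 1]
    neg = [s for y, s in zip(y_true, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative label")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Toy labels and scores for illustration.
y_true = [0, 0, 1, 1]
scores = [0.1, 0.4, 0.35, 0.8]
auc = roc_auc_pairwise(y_true, scores)
print(auc)
```

Three of the four positive-negative pairs are ordered correctly here, giving an AUC of 0.75.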

Precision-Recall Curve

The precision-recall curve displays the trade-off between precision and recall for different threshold settings.

Precision measures the correctness of positive predictions, while recall gauges the ability to identify all actual positives. This curve is especially useful in situations with a substantial class imbalance, where accuracy might not give a clear picture of a model’s performance.

An important related measure is the F1 score, which is the harmonic mean of precision and recall. It balances both aspects when assessing models. High precision with low recall, or vice versa, doesn’t always indicate good performance, and the curve makes each combination visible. Analysts should focus on the area under the precision-recall curve to understand the balance achieved by a model.
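The area under the precision-recall curve is often summarized as average precision: the mean of the precision values at each rank where a positive instance is retrieved. The sketch below is a simplified version that assumes no tied scores; the function name `average_precision` is chosen for the example.

```python
def average_precision(y_true, scores):
    """Mean of precision-at-k over the ranks k that hold a positive instance.

    Assumes scores are distinct; ties are not handled specially.
    """
    ranked = sorted(zip(scores, y_true), key=lambda pair: pair[0], reverse=True)
    n_pos = sum(1 for y in y_true if y == 1)
    if n_pos == 0:
        raise ValueError("need at least one positive label")
    hits = 0
    total = 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label == 1:
            hits += 1
            total += hits / rank   # precision among the top `rank` items
    return total / n_pos


# Toy labels and scores for illustration.
y_true = [0, 0, 1, 1]
scores = [0.1, 0.4, 0.35, 0.8]
ap = average_precision(y_true, scores)
print(f"{ap:.3f}")
```

On this toy data the positives sit at ranks 1 and 3, so the result is (1/1 + 2/3) / 2.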

Impact of Class Imbalance on Metrics

When dealing with classification problems, class imbalance can greatly affect the evaluation of performance metrics. It often results in misleading interpretations of a model’s success and needs to be addressed with appropriate methods and metrics.

Understanding Class Imbalance

Class imbalance occurs when the number of instances in different classes of a dataset is not evenly distributed. For example, in a medical diagnosis dataset, healthy cases might massively outnumber the disease cases. This imbalance can lead to biased predictions where the model favors the majority class, reducing detection rates for minority classes.

An imbalanced dataset is challenging as it may cause certain metrics, especially accuracy, to give a false sense of high performance.

For instance, if a model predicts all instances as the majority class, accuracy might be high, misleadingly suggesting the model is effective, even though it’s not predicting the minority class correctly at all.
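This effect is easy to reproduce. The sketch below uses an invented dataset with 95 negatives and 5 positives and a "model" that always predicts the majority class.

```python
# Invented imbalanced labels: 95 majority-class (0) and 5 minority-class (1).
y_true = [0] * 95 + [1] * 5
y_pred = [0] * len(y_true)   # always predict the majority class

accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
minority_recall = tp / (tp + fn)

print(f"accuracy={accuracy:.2f} minority recall={minority_recall:.2f}")
```

Accuracy comes out at 0.95 even though not a single minority instance is detected.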

Metrics Sensitive to Class Imbalance

Some metrics are more sensitive to class imbalance than others.

Accuracy can be particularly misleading, as it considers the correct predictions of the majority class but overlooks errors on the minority class. Instead, measures like precision, recall, and F1-score offer better insight since they account for the correct detection of positive instances and balance between false positives and negatives.

ROC Curves and Precision-Recall curves are also useful tools.

ROC Curves represent the trade-off between true positive rate and false positive rate, while Precision-Recall curves focus on the trade-off between precision and recall. These tools help evaluate a model’s performance in the face of imbalance, guiding towards methods that better handle such data.

Comparing Classification Models

When comparing classification models, it is important to consider the type of classification problem along with the criteria used to assess model performance.

Differences between multi-class and binary classification can influence model choice, while various criteria guide the selection of the most suitable classification model.

Multi-Class vs Binary Classification

Binary classification involves predicting one of two possible classes. An example is determining whether an email is spam or not. Binary models are generally simpler and often utilize metrics like the confusion matrix, accuracy, precision, recall, and the F1-score.

Multi-class classification deals with more than two classes. An example is identifying which object is in an image (cat, dog, car, etc.). It requires models that can handle complexities across multiple class boundaries, and the metric evaluations extend to measures like micro and macro averages of metrics.

While binary models benefit from having straightforward metrics, multi-class models must contend with increased complexity and computational requirements. Selecting an appropriate model depends largely on the number of classes involved and the specifics of the dataset.
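The difference between macro and micro averaging can be sketched with recall on a small three-class example. Macro averaging takes the unweighted mean of per-class scores, while micro averaging pools all instances first. The helper `per_class_recall` and the labels are assumptions made for illustration.

```python
from collections import Counter


def per_class_recall(y_true, y_pred):
    """Recall for each class: correct predictions divided by class support."""
    support = Counter(y_true)
    correct = Counter(t for t, p in zip(y_true, y_pred) if t == p)
    return {label: correct[label] / support[label] for label in support}


# Invented labels: "cat" dominates, and the single "dog" is misclassified.
y_true = ["cat"] * 8 + ["dog"] + ["car"]
y_pred = ["cat"] * 8 + ["cat"] + ["car"]

recalls = per_class_recall(y_true, y_pred)
macro_recall = sum(recalls.values()) / len(recalls)   # each class counts equally
micro_recall = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)  # each instance counts equally

print(recalls)
print(f"macro={macro_recall:.3f} micro={micro_recall:.3f}")
```

The macro average drops to about 0.667 because the rare class is missed entirely, while the micro average stays at 0.9, so the choice of averaging depends on how much rare classes matter.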

Model Selection Criteria

Key criteria for choosing between classification models include accuracy, precision, recall, and the F1-score.

While accuracy indicates the general correctness, it might not reflect performance across imbalanced datasets. F1-score provides a balance between precision and recall, making it more informative in these cases.

ROC curves are also useful for visualizing model performance, especially in imbalanced classification tasks.

They help explore the trade-offs between true positive and false positive rates. Decision makers should prioritize models that not only perform well in terms of these metrics but also align with the problem’s specific requirements.

Utilizing Scikit-Learn for Metrics

Scikit-Learn offers a range of tools to evaluate machine learning models, particularly for classification tasks.

The library includes built-in functions to calculate standard metrics and allows for customization to fit specific needs.

Metric Functions in sklearn.metrics

Scikit-Learn’s sklearn.metrics module provides a variety of metrics to evaluate classification algorithms. These include measures like accuracy, precision, recall, and the F1-score, which are crucial for assessing how well a model performs.

A confusion matrix can be computed to understand the number of correct and incorrect predictions.

Accuracy gives the ratio of correct predictions to the total predictions. Precision and recall help in understanding the trade-offs between false positives and false negatives.

The F1-score combines precision and recall to provide a single metric for model performance. For more comprehensive evaluation, ROC curves and AUC scores can be useful to understand the model’s ability to differentiate between classes.
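A short sketch of these functions on toy data follows. The labels and scores are invented, and `y_pred` corresponds to thresholding `y_score` at 0.5.

```python
from sklearn.metrics import (
    accuracy_score,
    confusion_matrix,
    f1_score,
    precision_score,
    recall_score,
    roc_auc_score,
)

# Toy data for illustration only.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_score = [0.9, 0.2, 0.4, 0.8, 0.1, 0.6, 0.7, 0.3]   # predicted probabilities
y_pred = [1 if s >= 0.5 else 0 for s in y_score]      # hard labels at 0.5

# For binary labels, ravel() yields the cells in the order tn, fp, fn, tp.
tn, fp, fn, tp = confusion_matrix(y_true, y_pred).ravel()

acc = accuracy_score(y_true, y_pred)
prec = precision_score(y_true, y_pred)
rec = recall_score(y_true, y_pred)
f1 = f1_score(y_true, y_pred)
auc = roc_auc_score(y_true, y_score)   # uses scores, not hard labels

print(f"TP={tp} FP={fp} FN={fn} TN={tn}")
print(f"accuracy={acc:.3f} precision={prec:.3f} recall={rec:.3f} f1={f1:.3f} auc={auc:.4f}")
```

Note that the threshold-based metrics take predicted labels, whereas `roc_auc_score` takes the underlying scores.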

Custom Metrics with sklearn

In addition to built-in metrics, users can define custom metrics in Scikit-Learn to suit specific model evaluation needs.

This can include writing functions or classes that compute bespoke scores based on the output of a classification algorithm.

Creating a custom metric might involve utilizing make_scorer from sklearn.metrics, which allows the user to integrate new scoring functions.

This flexibility helps in tailoring the evaluation process according to the specific requirements of a machine learning model.

A custom metric can be useful when conventional metrics do not capture a model’s unique considerations or objectives. This feature ensures that Scikit-Learn remains adaptable to various machine learning scenarios.
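As one possible sketch, the example below wraps a hand-written specificity function with `make_scorer` and passes it to cross-validation. The function `specificity`, the synthetic dataset, and the choice of logistic regression are all assumptions made for illustration, not a recommended setup.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import confusion_matrix, make_scorer
from sklearn.model_selection import cross_val_score


def specificity(y_true, y_pred):
    """Custom metric: TN / (TN + FP), or 0.0 if there are no actual negatives."""
    tn, fp, fn, tp = confusion_matrix(y_true, y_pred, labels=[0, 1]).ravel()
    return tn / (tn + fp) if (tn + fp) else 0.0


# make_scorer turns a (y_true, y_pred) function into a scorer object.
specificity_scorer = make_scorer(specificity)

# Synthetic data, used only to make the example self-contained.
X, y = make_classification(n_samples=200, n_features=5, random_state=0)
model = LogisticRegression(max_iter=1000)

scores = cross_val_score(model, X, y, cv=5, scoring=specificity_scorer)
print(scores.mean())
```

The resulting scorer can be passed anywhere Scikit-Learn accepts a `scoring` argument, such as `cross_val_score` above.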

Handling Imbalanced Data

Imbalanced data can significantly affect the results of a classification model. It’s crucial to use the right techniques to handle this issue and understand how it impacts performance metrics.

Techniques to Address Imbalance

One of the key techniques for addressing imbalanced data is resampling. This involves either oversampling the minority class or undersampling the majority class.

Oversampling duplicates data from the minority class, while undersampling involves removing instances from the majority class.

Another technique is using synthetic data generation, such as the Synthetic Minority Over-sampling Technique (SMOTE).

Ensemble methods like Random Forests or Boosted Trees can handle imbalances by using weighted voting or adjusting class weights.

Cost-sensitive learning is another approach, focusing on penalizing the model more for misclassified instances from the minority class.
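Of these techniques, random oversampling is the simplest to sketch. The function below duplicates minority-class rows at random until every class matches the largest one; the name `random_oversample` and the toy data are assumptions, and the approach is plain duplication rather than SMOTE-style synthesis.

```python
import random


def random_oversample(X, y, seed=0):
    """Duplicate minority-class rows at random until all classes are equal in size."""
    rng = random.Random(seed)
    by_class = {}
    for features, label in zip(X, y):
        by_class.setdefault(label, []).append(features)

    target = max(len(rows) for rows in by_class.values())
    X_out, y_out = [], []
    for label, rows in by_class.items():
        # Draw extra rows with replacement to reach the target size.
        extra = [rng.choice(rows) for _ in range(target - len(rows))]
        for features in rows + extra:
            X_out.append(features)
            y_out.append(label)
    return X_out, y_out


# Toy data: 8 majority-class rows and 2 minority-class rows.
X = [[i] for i in range(10)]
y = [0] * 8 + [1] * 2
X_bal, y_bal = random_oversample(X, y)
print(y_bal.count(0), y_bal.count(1))
```

Resampling of this kind is normally applied to the training split only, so that the evaluation data keeps its original class distribution.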

Impact on Metrics and Model Performance

Imbalance affects various performance metrics of a classification model. Metrics like accuracy might be misleading because they are dominated by the majority class.

Instead, precision, recall, and the F1-score provide more insight. These metrics give a clearer sense of how well the model is handling the minority class.

Precision measures the proportion of true positive results in the predicted positives, while recall evaluates how well the model captures positive cases.

The F1-score is the harmonic mean of precision and recall, especially useful for imbalanced datasets.

ROC and Precision-Recall curves are also valuable for visualizing model performance.

Error Types and Interpretation

Understanding different types of errors and their interpretation is crucial in evaluating classification models. Key error types include Type I and Type II errors, and the misclassification rate provides a measure of a model’s accuracy.

Type I and Type II Errors

Type I error, also known as a false positive, occurs when a test incorrectly predicts a positive result. This type of error can lead to unnecessary actions based on incorrect assumptions. For instance, in medical testing, a patient may be incorrectly diagnosed as having a disease.

Addressing Type I errors is important to prevent unwarranted interventions or treatments.

Type II error, or false negative, happens when a test fails to detect a condition that is present. This error implies a missed detection, such as overlooking a harmful condition.

In critical applications, such as disease detection, minimizing Type II errors is imperative to ensure conditions are identified early and accurately addressed. Balancing both error types enhances model reliability.

Misclassification Rate

The misclassification rate measures how often a model makes incorrect predictions. This rate is calculated by dividing the number of incorrect predictions by the total number of decisions made by the model.

A high misclassification rate indicates the model is frequently making errors, impacting its effectiveness.

To reduce this rate, it’s important to refine the model through improved data processing, feature selection, or by using more advanced algorithms.

Lowering the misclassification rate aids in developing a more accurate and reliable model, crucial for practical deployment in diverse applications such as finance, healthcare, and more.

Optimizing Classification Thresholds

Optimizing classification thresholds is crucial for enhancing model performance. The threshold determines how classification decisions are made, impacting metrics like precision, recall, and F1 score. By carefully selecting and adjusting thresholds, models can become more accurate and effective in specific contexts.

Threshold Selection Techniques

One common approach for selecting thresholds is using the Receiver Operating Characteristic (ROC) curve. This graphical plot illustrates the true positive rate against the false positive rate at various thresholds.

By analyzing this curve, one can identify the threshold that optimizes the balance between sensitivity and specificity.

Another technique involves precision-recall curves. These curves are especially useful for imbalanced datasets, where one class significantly outnumbers the other.

Selecting a threshold along this curve helps in maintaining an optimal balance between precision and recall. Adjusting the threshold can lead to improved F1 scores and better handling of class imbalances.

In some cases, automated methods such as Youden’s J statistic can be used. This statistic, defined as sensitivity plus specificity minus one, identifies the point on the ROC curve that maximizes the difference between true positive rate and false positive rate.
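A minimal sketch of threshold selection with Youden's J follows. It tries every observed score as a candidate threshold and keeps the one with the largest TPR minus FPR. The function name `youden_threshold` and the toy data are assumptions for illustration.

```python
def youden_threshold(y_true, scores):
    """Return (threshold, J) where J = TPR - FPR is largest.

    An instance is predicted positive when its score is >= threshold.
    """
    n_pos = sum(1 for y in y_true if y == 1)
    n_neg = len(y_true) - n_pos
    best_threshold, best_j = None, float("-inf")
    for threshold in sorted(set(scores)):
        tp = sum(1 for y, s in zip(y_true, scores) if s >= threshold and y == 1)
        fp = sum(1 for y, s in zip(y_true, scores) if s >= threshold and y == 0)
        j = tp / n_pos - fp / n_neg
        if j > best_j:
            best_threshold, best_j = threshold, j
    return best_threshold, best_j


# Toy labels and scores for illustration.
y_true = [0, 0, 0, 1, 1, 0, 1, 1]
scores = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9]
threshold, j = youden_threshold(y_true, scores)
print(threshold, j)
```

On this data the best threshold is 0.4, which captures all four positives while admitting one false positive.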

Balancing Precision and Recall

Balancing precision and recall often requires adjusting thresholds based on specific application needs.

For instance, in scenarios where false positives are costly, models can be tuned to have higher precision by increasing the threshold. Conversely, if missing a positive case is more detrimental, a lower threshold may be chosen to improve recall.
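The effect of moving the threshold can be seen on a small invented example. The helper `precision_recall_at` is hypothetical, and returning 0.0 for precision when nothing is predicted positive is a convention chosen for this sketch.

```python
def precision_recall_at(y_true, scores, threshold):
    """Precision and recall when predicting positive for score >= threshold."""
    tp = fp = fn = 0
    for y, s in zip(y_true, scores):
        predicted = 1 if s >= threshold else 0
        if predicted == 1 and y == 1:
            tp += 1
        elif predicted == 1:
            fp += 1
        elif y == 1:
            fn += 1
    precision = tp / (tp + fp) if (tp + fp) else 0.0   # convention for this sketch
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


# Toy labels and scores for illustration.
y_true = [0, 0, 0, 1, 1, 0, 1, 1]
scores = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9]

low = precision_recall_at(y_true, scores, 0.3)    # lenient threshold
high = precision_recall_at(y_true, scores, 0.8)   # strict threshold
print(f"threshold 0.3: precision={low[0]:.3f} recall={low[1]:.3f}")
print(f"threshold 0.8: precision={high[0]:.3f} recall={high[1]:.3f}")
```

Raising the threshold from 0.3 to 0.8 lifts precision from about 0.667 to 1.0 while recall falls from 1.0 to 0.5.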

The goal is not just to improve one metric but to ensure the model performs well in the context it is applied.

Adjusting the classification threshold is a practical tuning tool in its own right. It lets analysts fine-tune a trained model according to the desired trade-offs without retraining it.

For maximum effectiveness, teams might continuously monitor thresholds and adjust them as data changes over time. This ongoing process ensures that the balance between precision and recall aligns with evolving conditions and expectations.

Loss Functions in Classification

Loss functions in classification help measure how well a model’s predictions align with the true outcomes. They guide the training process by adjusting model parameters to reduce errors. Log loss and cross-entropy are key loss functions used, especially in scenarios with multiple classes.

Understanding Log Loss

Log loss, also known as logistic loss or binary cross-entropy, is crucial in binary classification problems. It quantifies the difference between predicted probabilities and actual class labels.

A log loss of zero indicates a perfect model, while higher values show worse predictions. The formula for log loss calculates the negative log likelihood of the true labels given the predicted probabilities.

Log loss is effective for models that output probabilities, such as logistic regression. It penalizes confident but wrong predictions especially severely and rewards well-calibrated probabilities. Thus, it pushes models to be confident only when that confidence is justified.
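A minimal sketch of binary log loss, computed as the mean of -[y log(p) + (1 - y) log(1 - p)] over instances. The function name `binary_log_loss` and the clipping constant `eps` are assumptions for this example.

```python
import math


def binary_log_loss(y_true, probs, eps=1e-15):
    """Mean negative log-likelihood of binary labels given predicted P(y = 1)."""
    total = 0.0
    for y, p in zip(y_true, probs):
        p = min(max(p, eps), 1 - eps)   # clip to avoid log(0)
        total += -(y * math.log(p) + (1 - y) * math.log(1 - p))
    return total / len(y_true)


# One positive instance scored three different ways.
confident_right = binary_log_loss([1], [0.9])
hesitant = binary_log_loss([1], [0.6])
confident_wrong = binary_log_loss([1], [0.1])

print(f"{confident_right:.3f} {hesitant:.3f} {confident_wrong:.3f}")
```

The loss grows slowly as the prediction becomes hesitant and sharply once it becomes confidently wrong.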

Cross-Entropy in Multiclass Classification

Cross-entropy is an extension of log loss used in multiclass classification problems. It evaluates the distance between the true label distribution and the predicted probability distribution across multiple classes.

When dealing with several classes, cross-entropy helps models adjust to improve prediction accuracy.

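For a single instance, cross-entropy sums, over all classes, the true-label indicator multiplied by the negative log of the predicted probability. With one-hot labels this reduces to the negative log of the probability assigned to the true class, which encourages models to assign high probabilities to that class.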

Cross-entropy is widely used in neural networks for tasks such as image recognition, where multiple categories exist. Its adaptability to multi-class scenarios makes it a standard choice for evaluating model performance in complex classification settings.
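For one-hot labels, the multi-class case can be sketched as the mean negative log-probability assigned to each true class. The function name `cross_entropy`, the index-based label encoding, and the sample probabilities are assumptions for illustration.

```python
import math


def cross_entropy(true_classes, prob_rows, eps=1e-15):
    """Mean of -log(probability assigned to the true class index)."""
    total = 0.0
    for true_index, probs in zip(true_classes, prob_rows):
        total += -math.log(max(probs[true_index], eps))   # guard against log(0)
    return total / len(true_classes)


# Two instances over three classes; each row of probabilities sums to 1.
true_classes = [0, 2]
prob_rows = [
    [0.7, 0.2, 0.1],   # true class 0 gets 0.7
    [0.1, 0.3, 0.6],   # true class 2 gets 0.6
]
loss = cross_entropy(true_classes, prob_rows)
print(f"{loss:.4f}")
```

With exactly two classes this expression coincides with binary log loss, which is why the two names are often used interchangeably.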

Frequently Asked Questions

Understanding the differences between accuracy and F1 score is crucial for evaluating model performance. Confusion matrices play a key role in computing various classification metrics. Additionally, recognizing when to use precision over recall and vice versa can enhance model evaluation.

What is the difference between accuracy and F1 score when evaluating model performance?

Accuracy measures the proportion of correct predictions in a dataset. It’s simple but can be misleading if classes are imbalanced.

The F1 score, on the other hand, is the harmonic mean of precision and recall, providing a balance between the two. It is particularly useful for datasets with uneven class distribution, as it considers both false positives and negatives.

How is the confusion matrix used to compute classification metrics?

A confusion matrix is a table that lays out the predicted and actual values in a classification problem. It enables the calculation of metrics like precision, recall, and F1 score.

The matrix consists of true positives, true negatives, false positives, and false negatives, which are essential for determining the effectiveness of a model.

Why is the ROC curve a valuable tool for classifier evaluation, and how does it differ from the precision-recall curve?

The ROC curve illustrates the trade-off between true positive and false positive rates at various thresholds. It’s valuable for evaluating a classifier’s performance across different sensitivity levels.

Unlike the ROC curve, the precision-recall curve focuses on precision versus recall, making it more informative when dealing with imbalanced datasets. The area under these curves (AUC) helps summarize each curve’s performance.

In what situations is it more appropriate to use precision as a metric over recall, and vice versa?

Precision should be prioritized when the cost of false positives is high, such as in spam detection.

Recall is more crucial when catching more positives is vital, as in disease screening.

The choice between precision and recall depends on the context and the balance needed between false positives and false negatives in specific scenarios.

How do you calculate the F1 score from precision and recall, and what does it represent?

The F1 score is calculated using the formula: \( F1 = 2 \times \left(\frac{\text{precision} \times \text{recall}}{\text{precision} + \text{recall}}\right) \).

This metric represents the balance between precision and recall, offering a single score that favors models with similar precision and recall values. It’s especially helpful for evaluating performance on imbalanced datasets.

Can you explain ROC AUC and PR AUC, and how do they perform on imbalanced datasets?

ROC AUC measures the area under the ROC curve, indicating the model’s capability to differentiate between classes. In contrast, PR AUC measures the area under the precision-recall curve, which is often more suitable for imbalanced classes. Both values help compare models, but PR AUC usually gives a clearer picture when the positive class is rare.