Understanding CTEs in T-SQL

Common Table Expressions (CTEs) in T-SQL help simplify complex queries and enhance code readability. They allow developers to define temporary result sets within queries. This makes it easier to work with intricate data operations.

Definition and Advantages of Common Table Expressions

Common Table Expressions, or CTEs, are temporary result sets defined in SQL Server using the WITH clause. They are used to simplify and organize complex queries. Unlike derived tables, CTEs can be referenced multiple times within the same query. This makes code easier to understand and maintain.

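One important advantage of CTEs is readability: they break a complex query into named, manageable parts, which is particularly useful when dealing with subqueries or recursive operations. CTEs also reduce repetition in SQL code, since one definition can be referenced several times. This is a maintainability benefit rather than a performance one: SQL Server does not store the result of a non-recursive CTE, so each reference is expanded into the query and evaluated as part of it.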
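As a minimal sketch of referencing one CTE twice in the same statement, the query below finds the department with the largest headcount. The table variable @Employees, its columns, and the sample rows are assumptions made up for illustration.

```sql
-- Hypothetical sample data; table and column names are assumptions.
DECLARE @Employees TABLE (EmployeeID int, Department varchar(20));
INSERT INTO @Employees (EmployeeID, Department)
VALUES (1, 'Sales'), (2, 'Sales'), (3, 'IT');

WITH DeptCounts AS (
    SELECT Department, COUNT(*) AS Headcount
    FROM @Employees
    GROUP BY Department
)
-- DeptCounts is referenced twice: as the row source and inside the subquery.
SELECT d.Department, d.Headcount
FROM DeptCounts AS d
WHERE d.Headcount = (SELECT MAX(Headcount) FROM DeptCounts);
```

A derived table would have to be written out twice to express the same query, which is where the CTE saves repetition.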

CTE Syntax Overview

The syntax of a CTE involves using the WITH clause followed by the CTE name and the query that defines it. A simple example might look like this:

    WITH EmployeeCTE AS (
        SELECT EmployeeID, FirstName, LastName
        FROM Employees
    )
    SELECT * FROM EmployeeCTE;

Here, EmployeeCTE acts as a temporary named result set, much like a view that exists for one statement only. The definition starts with the keyword WITH, followed by the CTE name, the keyword AS, and the query enclosed in parentheses. The CTE is visible only to the single statement that immediately follows it, here the final SELECT, and cannot be referenced by later statements in the batch.

Anatomy of a Simple CTE

A simple CTE breaks down a query into logical steps. Consider this basic structure:

    WITH SalesCTE AS (
        SELECT ProductID, SUM(Quantity) AS TotalQuantity
        FROM Sales
        GROUP BY ProductID
    )
    SELECT * FROM SalesCTE WHERE TotalQuantity > 100;

In this scenario, SalesCTE summarizes the sales data by calculating the total quantity sold for each product. The outer query then reads from the CTE and keeps only the products whose total exceeds 100. This step-by-step approach makes the logic transparent and the SQL code more readable and modular.

Basic CTE Queries

Common Table Expressions (CTEs) are useful tools in T-SQL for simplifying complex queries. They help organize code and improve readability. A CTE can be used with SELECT, INSERT, UPDATE, and DELETE statements to manage data efficiently. Here’s how each works within CTEs.

Crafting a Select Statement within CTEs

A SELECT statement within a CTE allows for temporary result sets that are easy to reference. To create one, use the WITH keyword followed by the CTE name and the SELECT query:

    WITH EmployeeData AS (
        SELECT EmployeeID, FirstName, LastName
        FROM Employees
    )
    SELECT * FROM EmployeeData;

This example defines EmployeeData, which can be queried as a table. CTEs improve readability and make code cleaner, especially when dealing with complex joins or aggregations.

Using CTEs with Insert Statements

INSERT statements add new records. CTEs can prepare the dataset for insertion into a target table. For instance:

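    -- Every CTE column needs a name, so a column list follows the CTE name.
    WITH NewData (FirstName, LastName, Email) AS (
        SELECT 'John', 'Doe', 'john.doe@example.com'
    )
    INSERT INTO Employees (FirstName, LastName, Email)
    SELECT FirstName, LastName, Email FROM NewData;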

This takes the specified data and inserts it into the Employees table. The CTE allows the source data to be easily modified or expanded without changing the main insert logic.

Updating Data with CTEs

CTEs are helpful in organizing complex UPDATE operations. They provide a clearer structure when the updated data depends on results from a select query:

    WITH UpdatedSalaries AS (
        SELECT EmployeeID, Salary * 1.10 AS NewSalary
        FROM Employees
        WHERE Department = 'Sales'
    )
    UPDATE Employees
    SET Salary = NewSalary
    FROM UpdatedSalaries
    WHERE Employees.EmployeeID = UpdatedSalaries.EmployeeID;

Here, the CTE calculates updated salaries for a particular department. This simplifies the update process and makes the code more maintainable.

Deleting Records Using CTEs

For DELETE operations, CTEs can define the subset of data to be removed. This makes it easy to specify only the needed criteria:

    WITH OldRecords AS (
        SELECT EmployeeID
        FROM Employees
        WHERE HireDate < '2010-01-01'
    )
    DELETE FROM Employees
    WHERE EmployeeID IN (SELECT EmployeeID FROM OldRecords);

This example removes employees hired before 2010. The CTE targets specific records efficiently, and the logic is easy to follow, reducing the chance of errors.

Implementing Joins in CTEs

Implementing joins within Common Table Expressions (CTEs) helps in organizing complex SQL queries. This section explores how inner and outer joins work within CTEs, providing a clearer path to refined data retrieval.

Inner Joins and CTEs

When using inner joins with CTEs, the goal is to combine rows from multiple tables based on a related column. This is useful for filtering data to return only matching records from each table.

Consider a scenario where a CTE is used to extract a specific subset of data. Inside this CTE, an inner join can link tables like employees and departments, so that only employees whose department exists in the departments table are selected.

The syntax within a CTE starts with the WITH keyword, followed by the CTE name and a query block. Inside this block, an inner join is used within the SELECT statement to relate tables:

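    WITH EmployeeData AS (
        SELECT e.Name, e.DepartmentID, d.DepartmentName
        FROM Employees e
        INNER JOIN Departments d ON e.DepartmentID = d.ID
    )
    -- A CTE must be followed by a statement that uses it.
    SELECT Name, DepartmentName FROM EmployeeData;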

Here, the INNER JOIN ensures that only rows with matching DepartmentID in both tables are included.

Outer Joins within CTE Structure

Outer joins in a CTE structure allow retrieval of all rows from the primary table and matched rows from the secondary table. This setup is beneficial when needing to display unmatched data alongside matched results.

For instance, if a task is to find all departments and list employees belonging to each—while also showing departments without employees—an outer join can be used. This involves a LEFT JOIN within the CTE:

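    WITH DeptWithEmployees AS (
        SELECT d.DepartmentName, e.Name
        FROM Departments d
        LEFT JOIN Employees e ON d.ID = e.DepartmentID
    )
    -- A CTE must be followed by a statement that uses it.
    SELECT DepartmentName, Name FROM DeptWithEmployees;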

The LEFT JOIN retrieves all department names and includes employee data where available. Unmatched departments are still displayed with NULL for employee names, ensuring complete department visibility.

Complex CTE Queries

Complex CTE queries involve advanced techniques that enhance SQL efficiency and readability. They allow for the creation of sophisticated queries using multiple CTEs, combining CTEs with unions, and embedding subqueries.

Managing Multiple CTEs in a Single Query

When working with multiple CTEs, organizing them properly is crucial. T-SQL allows several CTEs in a single statement: the WITH keyword appears once, and the definitions are separated by commas. A CTE can reference any CTE defined before it in the same WITH clause, so complex logic can be written as a sequence of named steps.

For instance, a developer can create one CTE for filtering data and another for aggregating results. Managing multiple CTEs in a query helps break down complicated logic into more digestible parts and improve clarity.
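The filter-then-aggregate pattern described above might be sketched as follows. The table variable @Sales, its columns, and the cutoff date are assumptions chosen for illustration.

```sql
-- Hypothetical sample data; table and column names are assumptions.
DECLARE @Sales TABLE (ProductID int, SaleDate date, Quantity int);
INSERT INTO @Sales (ProductID, SaleDate, Quantity)
VALUES (1, '2024-01-05', 10), (1, '2024-02-10', 5),
       (2, '2023-12-30', 7),  (2, '2024-01-15', 3);

WITH RecentSales AS (            -- step 1: filter
    SELECT ProductID, Quantity
    FROM @Sales
    WHERE SaleDate >= '2024-01-01'
),
ProductTotals AS (               -- step 2: aggregate the filtered rows
    SELECT ProductID, SUM(Quantity) AS TotalQuantity
    FROM RecentSales
    GROUP BY ProductID
)
SELECT ProductID, TotalQuantity
FROM ProductTotals
ORDER BY ProductID;
```

Note that WITH appears only once and that ProductTotals reads from RecentSales, which is defined before it.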

Leveraging Union and Union All with CTEs

Incorporating UNION and UNION ALL with CTEs can be particularly useful for combining results from multiple queries. The UNION operator merges results but removes duplicates, while UNION ALL includes all entries, duplicates intact.

Using these operators with CTEs allows for seamless integration of diverse datasets. Developers can quickly perform comprehensive data analyses by combining tables or data sets, which would otherwise require separate queries or complex joins.
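As an illustrative sketch, the CTE below stacks two order sources with UNION ALL so that the outer query can summarize them together. The two table variables and the Channel label are hypothetical.

```sql
-- Hypothetical sample data; table and column names are assumptions.
DECLARE @OnlineOrders TABLE (OrderID int, Amount decimal(10, 2));
DECLARE @StoreOrders  TABLE (OrderID int, Amount decimal(10, 2));
INSERT INTO @OnlineOrders (OrderID, Amount) VALUES (1, 50.00), (2, 75.00);
INSERT INTO @StoreOrders  (OrderID, Amount) VALUES (3, 20.00), (4, 75.00);

WITH AllOrders AS (
    SELECT 'Online' AS Channel, OrderID, Amount FROM @OnlineOrders
    UNION ALL   -- keeps every row; UNION would also remove duplicate rows
    SELECT 'Store', OrderID, Amount FROM @StoreOrders
)
SELECT Channel, COUNT(*) AS OrderCount, SUM(Amount) AS TotalAmount
FROM AllOrders
GROUP BY Channel;
```

UNION ALL is the usual choice when the sources cannot overlap, because it skips the duplicate-removal step.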

Applying Subqueries in CTEs

Subqueries within CTEs add a layer of flexibility and power to SQL queries. A subquery permits additional data processing and can be a foundation for a CTE.

For example, you might use a subquery within a CTE to identify records that meet specific conditions, so that the outer query can focus on further details, which improves clarity. Because SQL Server expands the CTE into the main query, a subquery inside it costs the same as it would anywhere else, so it is important to check that it is optimized to prevent performance lags.
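One way this could look is sketched below: a correlated subquery inside the CTE picks employees who earn more than their department average, and the outer query counts them. The table variable and its sample rows are assumptions.

```sql
-- Hypothetical sample data; table and column names are assumptions.
DECLARE @Employees TABLE (EmployeeID int, Department varchar(20), Salary decimal(10, 2));
INSERT INTO @Employees (EmployeeID, Department, Salary)
VALUES (1, 'Sales', 50000), (2, 'Sales', 70000),
       (3, 'IT', 60000),    (4, 'IT', 90000);

WITH AboveAverage AS (
    SELECT e.EmployeeID, e.Department, e.Salary
    FROM @Employees AS e
    WHERE e.Salary > (SELECT AVG(e2.Salary)          -- correlated subquery
                      FROM @Employees AS e2
                      WHERE e2.Department = e.Department)
)
SELECT Department, COUNT(*) AS AboveAverageCount
FROM AboveAverage
GROUP BY Department;
```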

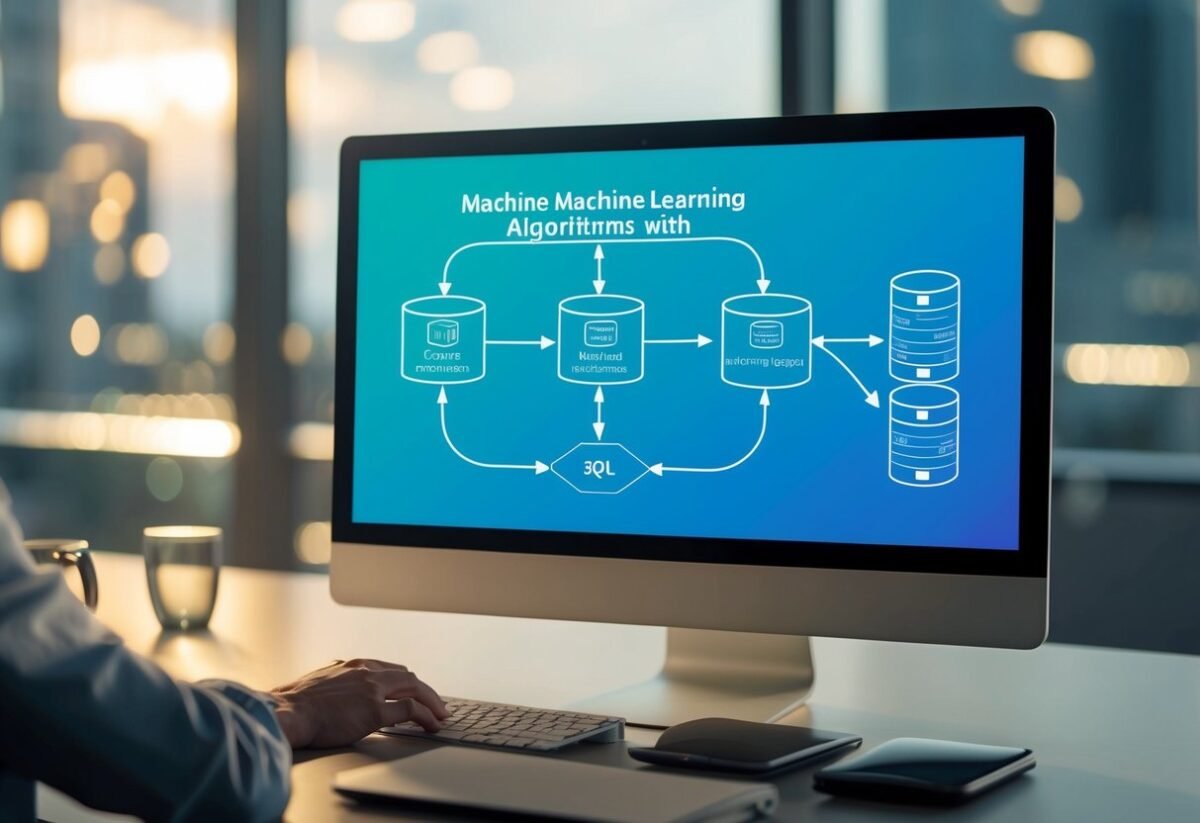

Recursive CTEs Explained

Recursive Common Table Expressions (CTEs) are powerful tools in T-SQL for handling complex queries involving hierarchies and repeated processes. Understanding how to write them effectively can help avoid common pitfalls like infinite loops.

Basics of Recursive CTEs

A Recursive CTE is a query that references itself. It consists of two parts: an anchor member and a recursive member.

The anchor member initializes the CTE, and the recursive member repeatedly executes, each time referencing results from the previous iteration.

Anchor Member

This part sets the starting point. For example, it begins with a base record.

Recursive Member

It uses recursion to pull in rows relative to the data retrieved by the anchor member.

The recursive member keeps running until an iteration returns no rows, at which point the combined result is returned. This makes it ideal for queries where you need to connect related rows.
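The two parts can be seen in a minimal sketch that generates the numbers 1 through 5. The CTE name Numbers and the column n are arbitrary choices for illustration.

```sql
WITH Numbers AS (
    SELECT 1 AS n              -- anchor member: the starting row
    UNION ALL                  -- T-SQL joins the two members with UNION ALL
    SELECT n + 1               -- recursive member: reads the previous iteration
    FROM Numbers
    WHERE n < 5                -- returns no rows once n reaches 5, ending recursion
)
SELECT n FROM Numbers;
```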

Building Hierarchies with Recursive Queries

Recursive CTEs are well-suited for hierarchical structures, like organizational charts or folder trees. They efficiently traverse a hierarchical relationship and organize records in a clearly defined order.

To build such structures, define a parent-child relationship within the data.

The CTE starts with a root node (row), then iteratively accesses child nodes. This method is extremely useful in databases where relationships can be defined by IDs.

When executing, the CTE retrieves a row, retrieves its children, and continues doing so until no children remain. This layered approach allows for easy visualization of parent-child relationships.
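A sketch of this traversal for a small organizational chart follows. The table variable, the ManagerID parent column, and the OrgLevel depth counter are assumptions made for illustration.

```sql
-- Hypothetical sample data; table and column names are assumptions.
DECLARE @Employees TABLE (EmployeeID int, ManagerID int NULL, Name varchar(50));
INSERT INTO @Employees (EmployeeID, ManagerID, Name)
VALUES (1, NULL, 'Ana'), (2, 1, 'Ben'), (3, 1, 'Cara'), (4, 2, 'Dev');

WITH OrgChart AS (
    -- Anchor member: the root node has no manager.
    SELECT EmployeeID, ManagerID, Name, 0 AS OrgLevel
    FROM @Employees
    WHERE ManagerID IS NULL
    UNION ALL
    -- Recursive member: children of the rows found in the previous iteration.
    SELECT e.EmployeeID, e.ManagerID, e.Name, oc.OrgLevel + 1
    FROM @Employees AS e
    INNER JOIN OrgChart AS oc ON e.ManagerID = oc.EmployeeID
)
SELECT EmployeeID, Name, OrgLevel
FROM OrgChart
ORDER BY OrgLevel, EmployeeID;
```

The OrgLevel column records how many steps each row is from the root, which makes the hierarchy easy to sort or indent in a report.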

Preventing Infinite Loops in Recursion

Infinite loops can be a risk. They occur when a recursive CTE continually refers to itself without terminating. To prevent this, two main strategies are employed.

MAXRECURSION

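Use the MAXRECURSION query hint to limit the number of recursion levels. SQL Server applies a default limit of 100; a hint such as OPTION (MAXRECURSION 500) changes it, and values from 0 to 32,767 are accepted, where 0 removes the limit entirely. When the limit is exceeded, the statement fails with an error instead of looping indefinitely.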

Stop Conditions

Implement checks within the CTE to stop recursion naturally.

By using conditions to exclude rows that should not continue, it limits how far recursion extends.

These strategies ensure that queries execute efficiently without entering endless cycles, protecting both data and system resources.
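Both safeguards can be combined, as in this sketch that generates a run of dates. The variable names and the limit of 366 are arbitrary choices for the example.

```sql
DECLARE @StartDate date = '2024-01-01';
DECLARE @EndDate   date = '2024-01-10';

WITH Dates AS (
    SELECT @StartDate AS d
    UNION ALL
    SELECT DATEADD(DAY, 1, d)
    FROM Dates
    WHERE d < @EndDate             -- stop condition: no rows after the end date
)
SELECT d
FROM Dates
OPTION (MAXRECURSION 366);         -- safety net: fail if recursion exceeds 366 levels
```

The stop condition ends the recursion in normal operation; the hint only matters if the condition is wrong or the date range is unexpectedly large.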

Advanced CTE Applications

Advanced Common Table Expressions (CTEs) can transform how data is processed and analyzed in SQL Server. They offer efficient solutions for dynamic reporting, pivoting data, and removing duplicate information. This guide explores these applications to enhance data management strategies.

CTEs for Pivoting Data in SQL Server

Pivoting data is a method used to transform rows into columns, simplifying data analysis. In SQL Server, CTEs can streamline this process.

By defining a CTE, users pre-select the necessary data before applying the PIVOT operator. Because PIVOT groups by every column that is not pivoted or aggregated, limiting the CTE to the required columns keeps the final query readable and the grouping correct.

Pivoting helps in scenarios where data needs restructuring to create reports or feed into applications.

Using a CTE before the pivot operation organizes the data logically beforehand. It does not by itself make the pivot faster, although filtering rows in the CTE keeps the pivot input small. This approach is suitable for scenarios where data is stored in time-series formats and must be presented in a different layout.
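As a sketch under assumed names, the CTE below selects just the three columns the pivot needs, and PIVOT then turns the years into columns. The table variable @Sales and the year values are hypothetical.

```sql
-- Hypothetical sample data; table and column names are assumptions.
DECLARE @Sales TABLE (ProductID int, SaleYear int, Amount decimal(10, 2));
INSERT INTO @Sales (ProductID, SaleYear, Amount)
VALUES (1, 2023, 100), (1, 2024, 150), (1, 2024, 50),
       (2, 2023, 80),  (2, 2024, 120);

WITH SourceData AS (
    -- Only the grouping column, the pivot column, and the value column.
    SELECT ProductID, SaleYear, Amount
    FROM @Sales
)
SELECT ProductID, [2023] AS Sales2023, [2024] AS Sales2024
FROM SourceData
PIVOT (SUM(Amount) FOR SaleYear IN ([2023], [2024])) AS p
ORDER BY ProductID;
```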

Using CTEs for Dynamic Reporting

Dynamic reporting requires adaptable queries to respond to changing user inputs or datasets.

CTEs in SQL Server are ideal for this. They can simplify complex queries and improve readability.

For dynamic reporting, a CTE can break down a large query into manageable parts, making adjustments easier.

They can also be used to prepare data sets by filtering or aggregating data before the main query.

This organization makes reports easier to adjust; whether they also run faster depends on the execution plan rather than on the use of a CTE itself.

Furthermore, when handling multiple datasets, CTEs provide a consistent structure, ensuring that reports remain accurate and relevant.
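One possible shape for such a report query is sketched below, with user inputs passed as variables. The names @Region and @FromDate, and the convention that a NULL region means all regions, are assumptions for this example.

```sql
-- Hypothetical sample data; table and column names are assumptions.
DECLARE @Orders TABLE (OrderID int, Region varchar(20), OrderDate date, Amount decimal(10, 2));
INSERT INTO @Orders (OrderID, Region, OrderDate, Amount)
VALUES (1, 'West', '2024-01-10', 100), (2, 'West', '2023-11-02', 40),
       (3, 'East', '2024-02-01', 75);

-- Report parameters, as a user or application might supply them.
DECLARE @Region   varchar(20) = 'West';
DECLARE @FromDate date        = '2024-01-01';

WITH FilteredOrders AS (
    SELECT OrderID, Region, OrderDate, Amount
    FROM @Orders
    WHERE OrderDate >= @FromDate
      AND (@Region IS NULL OR Region = @Region)   -- NULL means all regions
)
SELECT Region, COUNT(*) AS OrderCount, SUM(Amount) AS TotalAmount
FROM FilteredOrders
GROUP BY Region;
```

Keeping the filters in the CTE means the summary query stays unchanged when the report parameters change.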

Data Deduplication Techniques with CTEs

Data deduplication is essential to maintain the integrity and quality of databases.

With CTEs, deduplication becomes straightforward by temporarily organizing duplicated data for later removal.

By using a CTE, users can first define criteria for duplicate detection, such as identical values in a business key (for example, an email address) or in another set of identifying columns.

After identifying duplicates, it’s easy to apply filters or delete statements to clean the data.

This method helps maintain clean datasets without resorting to complex procedures.

Additionally, when combined with SQL Server’s ROW_NUMBER() function, CTEs can effectively rank duplicates, allowing precise control over which records to keep.

This technique not only optimizes storage but also ensures that data remains consistent and reliable.
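A common form of this technique is sketched here: ROW_NUMBER() numbers the rows within each group of duplicates, and the DELETE removes everything after the first. The table variable, the choice of Email as the duplicate key, and the rule of keeping the earliest row are assumptions.

```sql
-- Hypothetical sample data; table and column names are assumptions.
DECLARE @Customers TABLE (CustomerID int IDENTITY(1, 1), Email varchar(100), CreatedAt date);
INSERT INTO @Customers (Email, CreatedAt)
VALUES ('a@example.com', '2024-01-01'),
       ('a@example.com', '2024-02-01'),
       ('b@example.com', '2024-01-15');

WITH Ranked AS (
    SELECT CustomerID,
           ROW_NUMBER() OVER (PARTITION BY Email
                              ORDER BY CreatedAt, CustomerID) AS rn
    FROM @Customers
)
-- Deleting from the CTE deletes the underlying rows; rn = 1 is kept.
DELETE FROM Ranked
WHERE rn > 1;

SELECT CustomerID, Email, CreatedAt FROM @Customers;
```

Changing the ORDER BY inside the window function changes which row of each duplicate group survives.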

Performance Considerations for CTEs

Performance in SQL queries is crucial when working with large datasets.

Evaluating the differences between common table expressions (CTEs) and temporary tables helps enhance efficiency.

Exploring how to optimize CTE queries can significantly boost overall execution speed and resource management.

Comparing CTE Performance with Temporary Tables

CTEs and temporary tables both serve the purpose of organizing data. A key difference lies in their scope and lifetime.

CTEs are embedded in a SQL statement and exist only for the duration of that statement. They offer a tidy structure, which makes them readable and easy to manage.

This makes CTEs ideal for complex queries involving joins and recursive operations.

Temporary tables, in contrast, are materialized in tempdb and can be reused multiple times within a session or script. They can also be indexed. This reusability can lead to better performance in iterative operations where the same data set is repeatedly accessed, because the rows are computed once rather than on every reference.

However, temporary tables add overhead of their own, since the rows must be written to tempdb, and they should be dropped when no longer needed.

Deciding between CTEs and temporary tables depends largely on the use case, query complexity, and performance needs.
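The difference in scope can be seen in a short sketch that computes the same totals both ways. The table variable @Sales and the temporary table name #ProductTotals are hypothetical.

```sql
-- Hypothetical sample data; table and column names are assumptions.
DECLARE @Sales TABLE (ProductID int, Quantity int);
INSERT INTO @Sales (ProductID, Quantity)
VALUES (1, 10), (1, 5), (2, 7), (3, 20);

-- CTE: visible only to the single statement that follows it.
WITH ProductTotals AS (
    SELECT ProductID, SUM(Quantity) AS TotalQuantity
    FROM @Sales
    GROUP BY ProductID
)
SELECT ProductID, TotalQuantity
FROM ProductTotals
WHERE TotalQuantity > 10;

-- Temporary table: computed once, then reused by several statements.
SELECT ProductID, SUM(Quantity) AS TotalQuantity
INTO #ProductTotals
FROM @Sales
GROUP BY ProductID;

SELECT ProductID, TotalQuantity FROM #ProductTotals WHERE TotalQuantity > 10;
SELECT MAX(TotalQuantity) AS LargestTotal FROM #ProductTotals;

DROP TABLE #ProductTotals;
```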

Optimization Strategies for CTE Queries

Optimizing CTEs involves several strategies.

An important method is minimizing the data scope by selecting only the necessary columns and rows. This reduces memory usage and speeds up query execution.

Indexes can help improve performance, even though they’re not directly applied to CTEs. Applying indexes on the tables within the CTE can enhance the query performance significantly by reducing execution time.

Another strategy is evaluating execution plans frequently. By analyzing these plans, developers can identify bottlenecks and optimize query logic to improve performance.

Adjusting query writing approaches and testing different logic structures can lead to more efficient CTE performance.

Integrating CTEs with SQL Data Manipulation

Integrating Common Table Expressions (CTEs) with SQL data manipulation provides flexibility and efficiency.

By using CTEs in SQL, complex queries become more manageable. This integration is especially useful when combining CTEs with aggregate functions or merge statements.

CTEs with Aggregate Functions

CTEs simplify working with aggregate functions by providing a way to structure complex queries.

With CTEs, temporary result sets can be created, allowing data to be grouped and summarized before final query processing.

This step-by-step approach helps in calculating sums, averages, and other aggregate values with clarity.

For instance, using a CTE to first select a subset of data, such as sales data for a specific period, makes it easier to apply aggregate functions, like SUM() or AVG(). This method improves readability and maintenance of SQL code.

CTEs do not, by themselves, change performance: SQL Server expands a non-recursive CTE into the main query and optimizes the statement as a whole, much as it would an equivalent derived table.

The benefit with large datasets is that the query is easier to reason about and tune, which makes it simpler to find and fix the parts that affect execution time.
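A sketch of aggregating in two steps follows: the CTE totals sales per month for one quarter, and the outer query averages those monthly totals. The table variable and date range are assumptions, and DATEFROMPARTS requires SQL Server 2012 or later.

```sql
-- Hypothetical sample data; table and column names are assumptions.
DECLARE @Sales TABLE (SaleDate date, Amount decimal(10, 2));
INSERT INTO @Sales (SaleDate, Amount)
VALUES ('2024-01-05', 100), ('2024-01-20', 200),
       ('2024-02-03', 150), ('2024-03-11', 50), ('2024-03-12', 100);

WITH MonthlyTotals AS (
    SELECT DATEFROMPARTS(YEAR(SaleDate), MONTH(SaleDate), 1) AS MonthStart,
           SUM(Amount) AS MonthTotal
    FROM @Sales
    WHERE SaleDate >= '2024-01-01' AND SaleDate < '2024-04-01'
    GROUP BY DATEFROMPARTS(YEAR(SaleDate), MONTH(SaleDate), 1)
)
SELECT COUNT(*)        AS Months,
       SUM(MonthTotal) AS QuarterTotal,
       AVG(MonthTotal) AS AvgMonthlyTotal
FROM MonthlyTotals;
```

An average of sums cannot be written as a single nested aggregate, so naming the first step in a CTE keeps the intent clear.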

Merge Statements and CTEs

Merge statements in SQL are used to perform inserts, updates, or deletes in a single statement based on data comparison.

When combined with CTEs, this process becomes even more effective.

A CTE can be used to select and prepare the data needed for these operations, making the merge logic cleaner and more understandable.

For example, using a CTE to identify records to be updated or inserted helps streamline the merge process. This approach organizes the data flow and ensures that each step is clear, reducing the likelihood of errors.

The integration of CTEs also helps in managing conditional logic within the merge statement. By using CTEs, different scenarios can be handled efficiently, leading to robust and flexible SQL code.

This makes maintaining and updating the database simpler and less error-prone.
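As a sketch of this combination, the CTE below keeps only the newest staged row per product, and MERGE then updates or inserts into the target. The temporary tables #Products and #Staging and their columns are hypothetical.

```sql
-- Hypothetical tables and sample data; all names are assumptions.
CREATE TABLE #Products (ProductID int PRIMARY KEY, Price decimal(10, 2));
CREATE TABLE #Staging  (ProductID int, Price decimal(10, 2), LoadedAt date);
INSERT INTO #Products (ProductID, Price) VALUES (1, 9.99), (2, 19.99);
INSERT INTO #Staging (ProductID, Price, LoadedAt)
VALUES (2, 21.99, '2024-01-01'), (2, 22.49, '2024-01-02'), (3, 5.00, '2024-01-02');

WITH LatestStaging AS (
    -- rn = 1 marks the most recently loaded row for each product.
    SELECT ProductID, Price,
           ROW_NUMBER() OVER (PARTITION BY ProductID ORDER BY LoadedAt DESC) AS rn
    FROM #Staging
)
MERGE INTO #Products AS tgt
USING (SELECT ProductID, Price FROM LatestStaging WHERE rn = 1) AS src
    ON tgt.ProductID = src.ProductID
WHEN MATCHED THEN
    UPDATE SET Price = src.Price
WHEN NOT MATCHED BY TARGET THEN
    INSERT (ProductID, Price) VALUES (src.ProductID, src.Price);

SELECT ProductID, Price FROM #Products ORDER BY ProductID;

DROP TABLE #Staging;
DROP TABLE #Products;
```

Reducing the source to one row per key before the merge matters, because MERGE raises an error if several source rows match the same target row.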

Enhancing SQL Views with CTEs

Common Table Expressions (CTEs) are useful tools in SQL for enhancing efficiency and readability when creating complex queries. They enable developers to build more dynamic and understandable views.

Creating Views Using CTEs

Creating views in SQL using CTEs allows for cleaner and easier-to-maintain code.

A CTE defines a temporary result set that a SELECT statement can reference. When a view is created with a CTE, the CTE’s ability to break down complex queries into simpler parts makes updates and debugging more straightforward.

Consider a CTE named SalesByRegion that aggregates sales data by region. Because a CTE exists only for one statement, it must be defined inside the view: the WITH clause follows CREATE VIEW ... AS. The view, not the CTE, can then be referenced repeatedly without rewriting the logic each time.

    CREATE VIEW RegionalSales AS
    WITH SalesByRegion AS (
        SELECT Region, SUM(Sales) AS TotalSales
        FROM SalesData
        GROUP BY Region
    )
    SELECT Region, TotalSales FROM SalesByRegion;

This approach separates the logic for calculating sales from other operations, enhancing clarity and reducing errors.

Nested CTEs in Views

T-SQL does not allow a WITH clause inside a CTE definition, but a CTE can reference any CTE defined before it in the same WITH clause. This kind of layering, often called nesting, builds multi-step queries that are still easy to follow.

This can be especially helpful in scenarios where multiple preprocessing steps are needed.

Suppose a view requires both total sales by region and average sales per product within each region. With layered CTEs, each step can be processed separately and then joined on the column they share, Region:

    CREATE VIEW DetailedSales AS
    WITH SalesByRegion AS (
        SELECT Region, SUM(Sales) AS TotalSales
        FROM SalesData
        GROUP BY Region
    ), AverageSales AS (
        SELECT Region, ProductID, AVG(Sales) AS AvgSales
        FROM SalesData
        GROUP BY Region, ProductID
    )
    SELECT sr.Region, sr.TotalSales, a.ProductID, a.AvgSales
    FROM SalesByRegion sr
    JOIN AverageSales a ON sr.Region = a.Region;

The readability of layered CTEs makes SQL management tasks less error-prone, as each section of the query is focused on a single task.

By utilizing nested CTEs, developers can maximize the modularity and comprehensibility of their SQL views.

Best Practices for Writing CTEs

Using Common Table Expressions (CTEs) effectively requires a blend of proper syntax and logical structuring. Adopting best practices not only enhances code readability but also minimizes errors, ensuring maintainable and efficient queries.

Writing Maintainable CTE Code

Creating SQL queries that are easy to read and maintain is crucial.

One strategy is to use descriptive names for the CTEs. This helps clarify the function of each part of the query.

Clear naming conventions can prevent confusion, particularly in complex queries involving multiple CTEs.

Another important practice is organizing the query structure. When writing CTEs in SQL Server Management Studio, logically separate each CTE by defining inputs and outputs clearly.

This approach aids in understanding the query flow and makes future adjustments more manageable. Properly formatting the CTEs with consistent indentation and spacing further enhances readability.

It’s also beneficial to maintain predictable logic in your queries. This means keeping calculations or transformations within the CTE that are relevant only to its purpose, rather than scattering logic throughout the query.

Such consistency assists in faster debugging and easier modifications.

Common Mistakes and How to Avoid Them

One frequent mistake is neglecting the termination check when writing recursive queries. Be sure to include one to prevent infinite loops.

For example, define a clear condition under which the recursion stops. Failing to do this can lead to performance issues.

Another common error is overusing CTEs where simple subqueries might suffice. Evaluate complexity—using a CTE might add unnecessary layers, making the query harder to follow.

When a CTE is not needed, a subquery can often be a cleaner alternative.

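Additionally, misordered or overlapping CTE names can create confusion and bugs. A CTE can only reference CTEs defined before it, and each name must be unique within its WITH clause, so choose unique, descriptive names and define the CTEs in the order they are used.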

Regularly test each CTE independently within the SQL Server Management Studio to validate its logic and output before integrating it into more complex queries.

Exploring Real-world CTE Examples

Common Table Expressions (CTEs) in SQL Server are crucial for simplifying complex queries. They help in breaking problems into manageable parts, enabling clearer and more readable SQL code. Below are examples that illustrate how CTEs can be applied in various scenarios.

CTE Use Cases in Business Scenarios

In business contexts, CTEs are used to manage and analyze data efficiently.

For instance, they help in calculating the average number of sales orders for a company. This involves defining a CTE whose query temporarily holds the intermediate result set that the main query then summarizes.

One common application is assessing employee sales performance. By using SQL Server, businesses can quickly determine which employees consistently meet targets by analyzing data over a specified period.

Such analysis aids in identifying top performers and areas for improvement.

Another useful scenario is inventory management. CTEs can track changes in stock levels, helping businesses plan their orders effectively.

They keep such queries readable, for example by first summarizing the quantities sold per product from order data and then using that result to update stock quantities.

Analyzing Sales Data with CTEs

Analyzing sales data is a significant area where CTEs shine.

In the AdventureWorks database, for example, CTEs can aggregate sales information to provide insights into customer buying trends.

For precise results, one first defines a CTE to compute averages like the average sales per customer.

The CTE groups the sales data, offering a clear view of performance metrics.

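Because the CTE is part of an ordinary query, SQL Server optimizes it together with the rest of the statement, so the same approach can scale to large datasets when the underlying tables are indexed appropriately, providing accurate and timely sales insights that support strategic business decisions.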
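A sketch of the per-customer analysis follows. To stay self-contained it uses a table variable with made-up rows; the column names CustomerID and TotalDue are modeled on the Sales.SalesOrderHeader table in AdventureWorks, which is an assumption about the schema you have installed.

```sql
-- Hypothetical stand-in for an order header table; names and rows are assumptions.
DECLARE @SalesOrderHeader TABLE (SalesOrderID int, CustomerID int, TotalDue decimal(10, 2));
INSERT INTO @SalesOrderHeader (SalesOrderID, CustomerID, TotalDue)
VALUES (1, 100, 250.00), (2, 100, 150.00), (3, 200, 900.00);

WITH CustomerTotals AS (
    SELECT CustomerID,
           COUNT(*)      AS OrderCount,
           SUM(TotalDue) AS TotalSpent
    FROM @SalesOrderHeader
    GROUP BY CustomerID
)
SELECT CustomerID,
       OrderCount,
       TotalSpent,
       TotalSpent / OrderCount  AS AvgOrderValue,
       AVG(TotalSpent) OVER ()  AS AvgSpentPerCustomer   -- same value on every row
FROM CustomerTotals
ORDER BY TotalSpent DESC;
```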

Learning Tools and Resources

Using the right tools can enhance one’s expertise in T-SQL and CTEs. Engaging with interactive exercises and educational platforms helps solidify concepts and makes the learning process engaging and effective.

Interactive T-SQL Exercises with CTEs

Interactive exercises are valuable for practicing T-SQL, especially regarding Common Table Expressions (CTEs).

Websites and tools that provide hands-on coding environments allow learners to apply CTE concepts in real time. These exercises often offer immediate feedback, which is crucial for learning.

Tools such as SQL Server Management Studio provide a query editor for running and practicing T-SQL queries against a SQL Server database.

By using these resources, learners can strengthen their understanding of CTEs and improve their query skills.

This practical approach helps internalize CTE usage in solving complex data retrieval tasks.

Educational Platforms and Documentation

Various educational platforms offer structured courses and tutorials on T-SQL and CTEs. Online learning platforms, books, and documentation, such as Pro T-SQL Programmer’s Guide, provide comprehensive resources that cater to both beginners and advanced learners.

These resources offer lessons on T-SQL syntax, functions, and best practices for using CTEs effectively. Many platforms also offer certification programs that ensure learners have a robust understanding of T-SQL components and CTEs. Such programs often build towards a deeper proficiency in SQL-related tasks, enhancing career readiness.

Frequently Asked Questions

This section addresses common inquiries about using Common Table Expressions (CTEs) in T-SQL. Topics include syntax, functionality, examples for complex queries, the advantages of CTEs over subqueries, learning resources, and performance considerations.

What is the syntax for a CTE in SQL Server?

A CTE in SQL Server starts with the WITH keyword, followed by the CTE name and, optionally, a list of column names in parentheses. After that comes the keyword AS and the query that defines the CTE, enclosed in parentheses. Finally, use the CTE name in the main query. Here is a simple structure:

    WITH CTE_Name (column1, column2) AS (
        SELECT column1, column2 FROM TableName
    )
    SELECT * FROM CTE_Name;

How do common table expressions (CTE) work in T-SQL?

CTEs work by allowing temporary result sets that can be referenced within a SELECT, INSERT, UPDATE, or DELETE statement. They improve readability and manageability by breaking complex queries into simpler parts. Each CTE can be used multiple times in the same query and is defined using the WITH keyword.

What are some examples of using CTE in T-SQL for complex queries?

CTEs are useful for tasks like creating recursive queries or simplifying complex joins and aggregations. For example, a CTE can be used to calculate a running total or to find hierarchical data, such as organizational charts. They are also helpful in managing large queries by breaking them into smaller, more manageable sections.
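A running total, for instance, might be sketched like this: the CTE totals sales per day, and a window function accumulates those totals. The table variable is hypothetical, and the windowed SUM with ORDER BY requires SQL Server 2012 or later.

```sql
-- Hypothetical sample data; table and column names are assumptions.
DECLARE @Sales TABLE (SaleDate date, Amount decimal(10, 2));
INSERT INTO @Sales (SaleDate, Amount)
VALUES ('2024-01-01', 100), ('2024-01-01', 50),
       ('2024-01-02', 75),  ('2024-01-03', 25);

WITH DailyTotals AS (
    SELECT SaleDate, SUM(Amount) AS DayTotal
    FROM @Sales
    GROUP BY SaleDate
)
SELECT SaleDate,
       DayTotal,
       SUM(DayTotal) OVER (ORDER BY SaleDate
                           ROWS UNBOUNDED PRECEDING) AS RunningTotal
FROM DailyTotals
ORDER BY SaleDate;
```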

In what scenarios should one use a CTE over a subquery in SQL Server?

CTEs are preferred when a query is complex or needs to be referenced multiple times. They can increase readability compared to deeply nested subqueries. Additionally, CTEs make it easier to test and modify parts of a query independently. They are particularly useful when recursion is required.

How can I learn to write CTE statements effectively in T-SQL?

To learn CTEs, start by studying basic T-SQL tutorials and examples. Practice by writing simple queries and gradually work on more complex tasks. Books like T-SQL Querying can provide more insights. Experimentation is key to mastering CTEs.

Are there any performance considerations when using CTEs in T-SQL?

CTEs enhance query readability. However, they might not always improve performance. They do not inherently optimize queries, so you need to be careful, especially with large data sets. Recursive CTEs, in particular, can lead to performance issues if not managed properly. You need to analyze execution plans and test to ensure efficiency.