Understanding T-SQL and Its Functions

Transact-SQL (T-SQL) is an extension of SQL used predominantly in Microsoft SQL Server. It adds programming constructs and advanced functions that help manage and manipulate data.

SQL functions in T-SQL are tools for performing operations on data. Two categories cover most everyday work: scalar functions and aggregate functions.

Scalar Functions return a single value. Examples include mathematical functions like ABS() for absolute values, and string functions like UPPER() to convert text to uppercase.

Aggregate Functions work with groups of records, returning summarized data. Common examples are SUM() for totals and AVG() for averages. These functions are essential for generating reports and insights from large datasets.

Example:

- Scalar Function Usage:

SELECT UPPER(FirstName) AS UpperName FROM Employees;

- Aggregate Function Usage:

SELECT AVG(Salary) AS AverageSalary FROM Employees;

Both types of functions enhance querying by simplifying complex calculations. Mastery of T-SQL functions can significantly improve database performance and analytics capabilities.

Data Types in SQL Server

Data types in SQL Server define the kind of data that can be stored in a column. They are crucial for ensuring data integrity and optimizing database performance. This section focuses on numeric data types, which are vital for handling numbers accurately and efficiently.

Exact Numerics

Exact numeric data types in SQL Server are used for storing precise values. They include int, decimal, and bit.

The int type is common for integer values, ranging from -2,147,483,648 to 2,147,483,647, which is useful for counters or IDs. The decimal type supports fixed precision and scale, making it ideal for financial calculations where exact values are necessary. For simple binary or logical data, the bit type is utilized and can hold a value of 0, 1, or NULL.

Each type provides distinct advantages based on the application’s needs. For example, using int for simple counts can conserve storage compared to decimal, which requires more space. Choosing the right type impacts both storage efficiency and query performance, making the understanding of each critical.

Approximate Numerics

Approximate numeric data types, including float and real, are used when precision is less critical. They offer a trade-off between performance and accuracy by allowing rounding errors.

The float type is versatile for scientific calculations, as it covers a wide range of values with single or double precision. Meanwhile, the real type offers single precision, making it suitable for applications where memory savings are essential and absolute precision isn’t a requirement.

Both float and real are efficient for high-volume data processes where the data range is more significant than precise accuracy. For complex scientific calculations, leveraging these types can enhance computational speed.
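The difference between exact and approximate types can be sketched with two variables. The variable names here are hypothetical and chosen only for illustration:

```sql
-- Hypothetical variables for illustration only.
DECLARE @ExactValue  decimal(10, 2) = 0.10;
DECLARE @ApproxValue float          = 0.10;

-- decimal stores 0.10 exactly; float stores the nearest binary approximation.
SELECT
    @ExactValue  * 3 AS ExactResult,
    @ApproxValue * 3 AS ApproxResult,
    CASE WHEN @ApproxValue * 3 = CAST(0.3 AS float)
         THEN 'equal' ELSE 'not equal'
    END AS FloatComparison;
```

Because float values are approximations, equality comparisons on them may not behave as expected. Comparing within a small tolerance is usually the safer choice.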

Working with Numeric Functions

Understanding numeric functions in T-SQL is important for handling data efficiently. These functions offer ways to perform various computations. This section covers mathematical functions that do basic calculations and aggregate mathematical functions that summarize data.

Mathematical Functions

Mathematical functions in T-SQL provide tools for precise calculations. ROUND(), CEILING(), and FLOOR() are commonly used functions.

ROUND() lets users limit the number of decimal places in a number. CEILING() rounds a number up to the nearest integer, while FLOOR() rounds down.

Another useful function is ABS(), which returns the absolute value of a number. This is especially helpful when dealing with negative numbers.

Users often apply mathematical functions in data manipulation tasks, ensuring accurate and efficient data processing.
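As a rough illustration, the query below applies these functions to a few sample values. The derived table and its Amount column are assumptions standing in for a real table:

```sql
-- Sample values are illustrative; the derived table stands in for a real column.
SELECT
    v.Amount,
    ROUND(v.Amount, 1) AS RoundedToOneDecimal,
    CEILING(v.Amount)  AS RoundedUp,
    FLOOR(v.Amount)    AS RoundedDown,
    ABS(v.Amount)      AS AbsoluteValue
FROM (VALUES (4.25), (-4.25), (7.80)) AS v(Amount);
```

Negative inputs are worth checking: CEILING() moves toward positive infinity and FLOOR() toward negative infinity, so for -4.25 they return -4 and -5 respectively.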

Aggregate Mathematical Functions

Aggregate functions in T-SQL perform calculations on a set of values, returning a single result. Common functions include SUM(), COUNT(), AVG(), MIN(), and MAX(). These help in data analysis tasks by providing quick summaries.

SUM() adds all the values in a column, while COUNT() gives the number of entries. AVG() calculates the average value, and MIN() and MAX() find the smallest and largest values.

These functions are essential for generating summaries and insights from large datasets, allowing users to derive valuable information quickly.
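A minimal sketch of these aggregates combined with GROUP BY follows. The Region and Amount columns and their values are made up for the example:

```sql
-- Hypothetical sales rows supplied inline so the query runs on its own.
SELECT
    s.Region,
    COUNT(*)      AS OrderCount,
    SUM(s.Amount) AS TotalAmount,
    AVG(s.Amount) AS AverageAmount,
    MIN(s.Amount) AS SmallestOrder,
    MAX(s.Amount) AS LargestOrder
FROM (VALUES
        ('North', 120.00),
        ('North',  80.00),
        ('South', 200.00)
     ) AS s(Region, Amount)
GROUP BY s.Region;
```

Two details are easy to miss: aggregates other than COUNT(*) ignore NULL values, and AVG() over an int column returns an int, so casting to decimal first may be needed to keep the fractional part.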

Performing Arithmetic Operations

Arithmetic operations in T-SQL include addition, subtraction, multiplication, division, and modulus. These operations are fundamental for manipulating data and performing calculations within databases.

Addition and Subtraction

Addition and subtraction are used to calculate sums or differences between numeric values. In T-SQL, operators like + for addition and - for subtraction are used directly in queries.

For instance, to find the total price of items, the + operator adds individual prices together. The subtraction operator calculates differences, such as reducing a quantity from an original stock level.

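A key point is to make sure the operand data types are compatible: SQL Server converts compatible types implicitly, but mixing incompatible ones, such as a number and non-numeric text, raises a conversion error.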

A practical example:

SELECT Price + Tax AS TotalCost
FROM Purchases;

Using parentheses to group operations can help with clarity and ensure correct order of calculations. T-SQL handles both positive and negative numbers, making subtraction versatile for various scenarios.
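The effect of parentheses can be seen in a small sketch. The Price, Tax, and Quantity values are illustrative assumptions:

```sql
-- One illustrative row: Price = 10.00, Tax = 1.00, Quantity = 3.
SELECT
    p.Price + p.Tax * p.Quantity   AS WithoutParentheses, -- Tax * Quantity is evaluated first
    (p.Price + p.Tax) * p.Quantity AS WithParentheses     -- the addition is evaluated first
FROM (VALUES (10.00, 1.00, 3)) AS p(Price, Tax, Quantity);
```

Multiplication binds more tightly than addition, so the first column works out to 13 and the second to 33 for the same inputs.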

Multiplication and Division

Multiplication and division are crucial for scaling numbers or breaking them into parts. The * operator performs multiplication, useful for scenarios like finding total costs across quantities.

Division, represented by /, is used to find ratios or distribute values equally. Careful attention is needed to avoid division by zero, which causes errors.

Example query using multiplication, restricted to rows with a positive quantity:

SELECT Quantity * UnitPrice AS TotalPrice
FROM Inventory
WHERE Quantity > 0;

The modulo operator, %, calculates remainders, such as the extras left over after distributing items evenly. T-SQL has no MOD() function. An example could be dividing prizes among winners, where % shows the leftovers: 10 % 3 returns 1.

These operations are essential for any database work, offering flexibility and precision in data handling.
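Division and remainders can be sketched together using the prize example. The column names are hypothetical, and NULLIF is one common way to guard against division by zero:

```sql
-- Hypothetical prize data; the second row has zero winners on purpose.
SELECT
    d.Prizes,
    d.Winners,
    d.Prizes / NULLIF(d.Winners, 0)       AS PrizesEach, -- integer division; NULL when Winners = 0
    d.Prizes % NULLIF(d.Winners, 0)       AS Leftover,   -- remainder after even distribution
    d.Prizes * 1.0 / NULLIF(d.Winners, 0) AS ExactShare  -- multiplying by 1.0 forces decimal division
FROM (VALUES (10, 3), (10, 0)) AS d(Prizes, Winners);
```

When both operands are integers, / discards the fractional part, which is why the sketch multiplies by 1.0 where a decimal result is wanted. NULLIF turns a zero divisor into NULL, so the row yields NULL instead of raising an error.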

Converting Data Types

Converting data types in T-SQL is essential for manipulating and working with datasets efficiently. This process involves both implicit and explicit methods, each suited for different scenarios.

Implicit Conversion

Implicit conversion occurs automatically when T-SQL changes one data type to another without requiring explicit instructions. This is often seen when operations involve data types that are compatible, such as integer to float or smallint to int.

The system handles the conversion behind the scenes, making it seamless for the user.

For example, adding an int and a float results in a float value without requiring manual intervention.

Developers should be aware that while implicit conversion is convenient, it can hurt performance if not managed carefully. For example, when a column has to be converted before it can be compared, SQL Server may be unable to use an index seek on that column.
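A small sketch of implicit conversion, using hypothetical variables, shows the resulting type. SQL_VARIANT_PROPERTY is used here only to inspect the type of the expression:

```sql
-- Hypothetical variables for illustration only.
DECLARE @WholeNumber int   = 5;
DECLARE @Fraction    float = 2.5;

-- The int operand is converted to float before the addition takes place.
SELECT
    @WholeNumber + @Fraction AS ImplicitSum,
    SQL_VARIANT_PROPERTY(@WholeNumber + @Fraction, 'BaseType') AS ResultType;
```

The result type follows SQL Server's data type precedence rules, under which float ranks above int, so ResultType should report float.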

Explicit Conversion

Explicit conversion, on the other hand, is performed by the user using specific functions in T-SQL, such as CAST and CONVERT. These functions provide greater control over data transformations, allowing for conversion between mismatched types, such as varchar to int.

The CAST function follows the ANSI SQL standard and has a straightforward syntax, so it is a good default when no special formatting is required.

Example: CAST('123' AS int).

The CONVERT function is more versatile, offering options for style and format, especially useful for date and time types.

Example: CONVERT(datetime, '2024-11-28', 120) converts a string to a datetime value. Style 120 is the ODBC canonical format, yyyy-mm-dd hh:mi:ss.

Both methods ensure data integrity and help avoid errors that can arise from incorrect data type handling during query execution.
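The following sketch puts CAST and CONVERT side by side. It also uses TRY_CAST and TRY_CONVERT, which are not mentioned above; they are available in SQL Server 2012 and later and return NULL instead of raising an error when a conversion fails:

```sql
SELECT
    CAST('123' AS int)                   AS CastToInt,
    CONVERT(int, '123')                  AS ConvertToInt,
    CONVERT(varchar(10), GETDATE(), 120) AS IsoDateText, -- style 120, cut to the first 10 characters
    TRY_CAST('abc' AS int)               AS FailedCast,  -- NULL rather than an error
    TRY_CONVERT(date, '2024-11-28')      AS ParsedDate;
```

The TRY_ variants can be useful when cleaning imported text, where a single bad value would otherwise stop the whole query.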

Utilizing Functions for Rounding and Truncation

Functions for rounding and truncation are essential when working with numerical data in T-SQL. They help in simplifying data by adjusting numbers to specific decimal places or the nearest whole number.

Round Function:

The ROUND() function is commonly used to adjust numbers to a specified number of decimal places. For example, ROUND(123.4567, 2) returns 123.4600: the value is rounded to two decimal places, but the result keeps the scale of the input.

Ceiling and Floor Functions:

The CEILING() function rounds numbers up to the nearest integer. Conversely, the FLOOR() function rounds numbers down.

For instance, CEILING(4.2) returns 5, while FLOOR(4.2) yields 4.

Truncate Function:

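T-SQL has no dedicated TRUNCATE function for numbers, but truncation is still possible. Passing a nonzero third argument to ROUND(), as in ROUND(123.4567, 2, 1), truncates instead of rounding and returns 123.4500. Converting to an integer type or using integer division also removes the decimal part without rounding.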
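A short sketch contrasting rounding with truncation, using a hypothetical variable:

```sql
-- Hypothetical variable for illustration only.
DECLARE @Value decimal(9, 4) = 123.4567;

SELECT
    ROUND(@Value, 2)    AS Rounded,        -- rounds to two decimal places
    ROUND(@Value, 2, 1) AS Truncated,      -- a nonzero third argument truncates instead
    CAST(@Value AS int) AS WholePartOnly;  -- conversion to int drops the fraction
```

The third argument of ROUND() is optional and defaults to 0, which means ordinary rounding.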

Abs Function:

The ABS() function returns the absolute value of a number, so the result is never negative. For example, ABS(-123.45) returns 123.45.

Table Example:

| Function | Description | Example | Result |
|---|---|---|---|
| ROUND | Rounds to specified decimals | ROUND(123.4567, 2) | 123.4600 |
| CEILING | Rounds up to nearest whole number | CEILING(4.2) | 5 |
| FLOOR | Rounds down to nearest whole number | FLOOR(4.2) | 4 |
| ABS | Returns absolute value | ABS(-123.45) | 123.45 |

For further reading on T-SQL functions and their applications, see the book T-SQL Fundamentals.

Manipulating Strings with T-SQL

Working with strings in T-SQL involves various functions that allow data transformation for tasks like cleaning, modifying, and analyzing text. Understanding these functions can greatly enhance the ability to manage string data efficiently.

Character String Functions

Character string functions in T-SQL include a variety of operations like REPLACE, CONCAT, and LEN.

The REPLACE function is useful for substituting characters in a string, such as changing “sql” to “T-SQL” across a dataset.

CONCAT joins multiple strings into one, which is handy for combining fields like first and last names.

The LEN function measures the length of a string, important for data validation and processing.

Other useful functions include TRIM to remove unwanted spaces, and UPPER and LOWER to change the case of strings.

LEFT and RIGHT extract a specified number of characters from the start or end of a string, respectively.

DIFFERENCE compares the SOUNDEX values of two strings and returns an integer from 0 to 4, where 4 indicates the closest phonetic match.

FORMAT converts date and numeric values into formatted strings.
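Several of these functions can be tried in one query. The names and note text below are invented sample data:

```sql
-- Hypothetical sample row supplied inline.
SELECT
    CONCAT(n.FirstName, ' ', n.LastName) AS FullName,
    UPPER(n.LastName)                    AS UpperLastName,
    LEN(n.FirstName)                     AS FirstNameLength,
    LEFT(n.LastName, 3)                  AS FirstThree,
    RIGHT(n.LastName, 3)                 AS LastThree,
    REPLACE(n.Note, 'sql', 'T-SQL')      AS UpdatedNote,
    TRIM(n.Note)                         AS TrimmedNote  -- TRIM requires SQL Server 2017 or later
FROM (VALUES ('Ada', 'Lovelace', '  learning sql  ')) AS n(FirstName, LastName, Note);
```

On older versions, LTRIM(RTRIM(...)) is the usual substitute for TRIM. Note also that LEN() does not count trailing spaces.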

Unicode String Functions

T-SQL supports Unicode string functions, important when working with international characters. Functions like NCHAR and UNICODE handle special characters.

NCHAR returns the Unicode character for a given code point, and UNICODE does the reverse, returning the code point of the first character in a string.

Among the general-purpose string functions, STR converts numeric data into a character string with a specified length and number of decimal places.

REVERSE displays the characters of a string backward, which is sometimes used in diagnostics and troubleshooting.

Together, these functions make it straightforward to manipulate and present text in applications that require multi-language support. Additionally, SPACE generates a string of repeated spaces, which is useful when formatting output.
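A brief sketch of the functions in this section, with literal inputs chosen for illustration:

```sql
SELECT
    NCHAR(8364)          AS EuroSign,        -- code point 8364 is U+20AC
    UNICODE(N'€')        AS EuroCodePoint,   -- code point of the first character
    STR(123.456, 8, 2)   AS FormattedNumber, -- total length 8, two decimal places
    REVERSE('T-SQL')     AS Reversed,
    'A' + SPACE(3) + 'B' AS Padded;          -- three spaces between A and B
```

The N prefix on the string literal marks it as Unicode (nvarchar). Without it, characters outside the database's code page may be lost.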

Working with Date and Time Functions

Date and time functions in T-SQL are essential for managing and analyzing time-based data. These functions allow users to perform operations on dates and times.

Some common functions include GETDATE(), which returns the current date and time, and DATEADD(), which adds a specified number of units, like days or months, to a given date.

Other functions include DAY(), which extracts the day part from a date. For instance, SELECT DAY('2024-11-28') returns 28, the day of the month.

Here’s a simple list of useful T-SQL date functions:

- GETDATE(): Current date and time

- DATEADD(): Adds time intervals to a date

- DATEDIFF(): Difference between two dates

- DAY(): Day of the month

Understanding the format is crucial. Dates might need conversion, especially when working with string data types. CONVERT() and CAST() functions can help transform data into date formats, ensuring accuracy and reliability.

By utilizing these functions, users can efficiently manage time-based data, schedule tasks, and create time-sensitive reports. This is invaluable for businesses that rely on timely information, as it ensures data is up-to-date and actionable.
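The functions listed above can be combined as in this sketch. The variable name and the dates are illustrative assumptions:

```sql
-- Hypothetical order date for illustration only.
DECLARE @OrderDate date = '2024-11-28';

SELECT
    GETDATE()                               AS CurrentDateTime,
    DATEADD(day, 30, @OrderDate)            AS DueDate,        -- 30 days after the order date
    DATEDIFF(day, @OrderDate, '2024-12-25') AS DaysUntilDec25, -- whole day boundaries crossed
    DAY(@OrderDate)                         AS DayOfMonth;
```

DATEDIFF counts the number of boundaries of the given unit that are crossed between the two dates, so DATEDIFF(year, ...) between December 31 and January 1 returns 1 even though only one day has passed.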

Advanced Mathematical Functions

T-SQL’s advanced mathematical functions offer powerful tools for data analysis and manipulation. These functions can handle complex mathematical operations for a variety of applications.

Trigonometric Functions

Trigonometric functions in T-SQL are essential for calculations involving angles and periodic data. SIN(), COS(), and TAN() compute sine, cosine, and tangent values respectively, and they expect the angle in radians. These are often used in scenarios where waveform or rotational data needs to be analyzed.

COT() returns the cotangent, the reciprocal of the tangent. For inverse calculations, ASIN(), ACOS(), and ATAN() are available, which return angles in radians based on the input values.

RADIANS() and DEGREES() convert between the two units, making it easier to work with whichever one the data uses.

Logarithmic and Exponential Functions

Logarithmic and exponential functions serve as foundational tools for interpreting growth patterns and scaling data. T-SQL provides LOG(), which returns the natural logarithm of a positive number or, with an optional second argument, its logarithm in a given base, and LOG10(), which returns the base-10 logarithm.

The EXP() function returns the exponential constant, e, raised to a specific power. This is useful in computing continuous compound growth rates and modeling complex relationships.

T-SQL also provides the PI() function, which returns the constant π and is essential for calculations involving circular or spherical data. These functions let users derive insights from datasets with mathematical accuracy.
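A minimal sketch of the trigonometric and logarithmic functions, with input values chosen for illustration:

```sql
SELECT
    SIN(RADIANS(CAST(30 AS float))) AS SineOf30Degrees, -- float input avoids integer results
    DEGREES(PI())                   AS PiInDegrees,
    LOG(8, 2)                       AS LogBase2Of8,     -- optional second argument sets the base
    LOG10(1000)                     AS LogBase10Of1000,
    EXP(1)                          AS EulerNumber;
```

RADIANS() and DEGREES() return the same data type as their input, which is why the sketch casts 30 to float before converting. The results are floating-point values, so they may differ from the mathematically exact answers in the last digits.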

Fine-Tuning Queries with Conditionals and Case

In T-SQL, conditionals help fine-tune queries by allowing decisions within statements. The CASE expression plays a key role here, often used to substitute values in the result set based on specific conditions. It is a flexible expression that can handle complex logic without lengthy code.

The basic structure of a CASE expression involves checking if-else conditions. Here’s a simple example:

SELECT
    FirstName,
    LastName,
    Salary,
    CASE
        WHEN Salary >= 50000 THEN 'High'
        ELSE 'Low'
    END AS SalaryLevel
FROM Employees;

In this query, the CASE expression checks the Salary. If it’s 50,000 or more, it labels it ‘High’; otherwise, ‘Low’.

The conditions within a CASE expression can be arranged to suit the query. For instance:

- Single condition: one WHEN clause with an ELSE fallback, as in the query above, behaves like simple if-else logic

- Multiple conditions: several WHEN clauses are evaluated in order, and the first one that is true determines the result
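Both arrangements can be sketched in one query. The employee rows, the salary thresholds, and the department codes below are invented for the example:

```sql
-- Hypothetical employee rows supplied inline.
SELECT
    e.FirstName,
    e.Salary,
    CASE                                   -- conditions are tested top to bottom
        WHEN e.Salary >= 100000 THEN 'High'
        WHEN e.Salary >= 50000  THEN 'Medium'
        ELSE 'Low'
    END AS SalaryBand,
    CASE e.Department                      -- compares one expression to a list of values
        WHEN 'ENG' THEN 'Engineering'
        WHEN 'FIN' THEN 'Finance'
        ELSE 'Other'
    END AS DepartmentName
FROM (VALUES
        ('Ana',  120000, 'ENG'),
        ('Ben',   65000, 'FIN'),
        ('Cara',  40000, 'OPS')
     ) AS e(FirstName, Salary, Department);
```

Order matters in the first expression: if the 50,000 test came first, a salary of 120,000 would match it and never reach the 100,000 test.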

T-SQL also supports the IF...ELSE construct for handling logic flow. Unlike CASE, IF...ELSE deals with control-of-flow in batches rather than returning data. It is especially useful for advanced logic:

IF EXISTS (SELECT * FROM Employees WHERE Salary > 100000)
    PRINT 'High salary detected';
ELSE
    PRINT 'No high salaries found';

The IF...ELSE construct doesn’t return rows but instead processes scripts and transactions when certain conditions are met.

Whether using a CASE expression to shape the values a query returns or IF...ELSE to control which statements run, T-SQL provides the tools for precise conditional logic.

Understanding Error Handling and Validation

In T-SQL, error handling is crucial for creating robust databases. It helps prevent crashes and ensures that errors are managed gracefully. The main tools for handling errors in T-SQL are the TRY...CATCH construct and the THROW statement.

A TRY block contains the code that might cause an error. If an error occurs, control is passed to the CATCH block. Here, the error can be logged, or other actions can be taken.

The CATCH block can also retrieve error details using functions like ERROR_NUMBER(), ERROR_MESSAGE(), and ERROR_LINE(). This allows developers to understand the nature of the error and take appropriate actions.

After handling the error, the THROW statement can re-raise it. This can be useful when errors need to propagate to higher levels. THROW provides a simple syntax for raising exceptions.
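The flow described above can be sketched as follows. The division by zero is a deliberate stand-in for any statement that might fail:

```sql
BEGIN TRY
    -- Deliberately raises a divide-by-zero error.
    SELECT 1 / 0 AS Result;
END TRY
BEGIN CATCH
    -- Inspect the error; a real handler might write these values to a log table.
    SELECT
        ERROR_NUMBER()  AS ErrorNumber,
        ERROR_MESSAGE() AS ErrorMessage,
        ERROR_LINE()    AS ErrorLine;

    -- Re-raise the original error so the caller also sees it.
    THROW;
END CATCH;
```

THROW without arguments is only valid inside a CATCH block, and the statement before it must end with a semicolon.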

Additionally, validation is important to ensure data integrity. It involves checking data for accuracy and completeness before processing. This minimizes errors and improves database reliability.

Constraints and triggers within the database are effective strategies for validation.
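As a sketch of constraint-based validation, the temporary table below rejects non-positive quantities. The table and column names are hypothetical:

```sql
-- Hypothetical temporary table used only for this illustration.
CREATE TABLE #OrderLines
(
    OrderLineId int IDENTITY(1, 1) PRIMARY KEY,
    Quantity    int            NOT NULL CHECK (Quantity > 0),
    UnitPrice   decimal(10, 2) NOT NULL CHECK (UnitPrice >= 0)
);

INSERT INTO #OrderLines (Quantity, UnitPrice) VALUES (2, 9.99);     -- accepted

BEGIN TRY
    INSERT INTO #OrderLines (Quantity, UnitPrice) VALUES (0, 9.99); -- violates the CHECK
END TRY
BEGIN CATCH
    SELECT ERROR_MESSAGE() AS ValidationError;
END CATCH;

DROP TABLE #OrderLines;
```

Declaring the rule on the table means every insert and update is checked, regardless of which application or script issues it.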

Performance and Optimization Best Practices

When working with T-SQL, performance tuning and optimization are crucial for efficient data processing. Focusing on index utilization and query plan analysis can significantly enhance performance.

Index Utilization

Proper index utilization is essential for optimizing query speed. Indexes should be created on columns that are frequently used in search conditions or join operations. This reduces the amount of data that needs to be scanned, improving performance. It’s important to regularly reorganize or rebuild indexes, ensuring they remain efficient.

Choosing the right type of index, such as clustered or non-clustered, can greatly impact query performance. Clustered indexes sort and store the data rows in the table based on their key values, which can speed up retrieval. Non-clustered indexes, on the other hand, are stored separately from the data rows and hold the key values along with pointers back to the rows, so a table can have several of them to support different query types.
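A minimal sketch of creating and maintaining both index types follows. The temporary table, its columns, and the index names are assumptions made for the example:

```sql
-- Hypothetical temporary table used only for this illustration.
CREATE TABLE #Employees
(
    EmployeeId int            NOT NULL,
    LastName   nvarchar(50)   NOT NULL,
    Salary     decimal(10, 2) NOT NULL
);

-- One clustered index per table: it defines the order of the data rows.
CREATE CLUSTERED INDEX IX_Employees_EmployeeId ON #Employees (EmployeeId);

-- Additional non-clustered index to support searches by last name.
CREATE NONCLUSTERED INDEX IX_Employees_LastName ON #Employees (LastName);

-- Maintenance: REORGANIZE is the lighter operation, REBUILD recreates the index.
ALTER INDEX IX_Employees_LastName ON #Employees REORGANIZE;
ALTER INDEX ALL ON #Employees REBUILD;

DROP TABLE #Employees;
```

Whether to reorganize or rebuild usually depends on how fragmented an index has become, so a real maintenance job would check fragmentation first rather than run both.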

Query Plan Analysis

Analyzing the query execution plan is vital for understanding how T-SQL queries are processed. Execution plans provide insight into the steps SQL Server takes to execute queries. This involves evaluating how tables are accessed, what join methods are used, and whether indexes are effectively utilized. Recognizing expensive operations in the plan can help identify bottlenecks.

Tools such as SQL Server Management Studio can be beneficial here. It can display the estimated or actual execution plan graphically, making it easier to identify areas for improvement. By refining queries based on execution plan insights, one can enhance overall query performance.
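Alongside the graphical plan, session-level statistics offer a quick way to measure a query. This sketch queries the sys.objects catalog view only because it exists in every database; any query of interest could take its place:

```sql
-- Report logical reads and CPU/elapsed time for each statement in this session.
SET STATISTICS IO ON;
SET STATISTICS TIME ON;

SELECT o.type_desc, COUNT(*) AS ObjectCount
FROM sys.objects AS o
GROUP BY o.type_desc;

SET STATISTICS IO OFF;
SET STATISTICS TIME OFF;
```

The figures appear in the Messages tab rather than the result grid. Comparing logical reads before and after a change, such as adding an index, gives a simple indication of whether the change helped.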

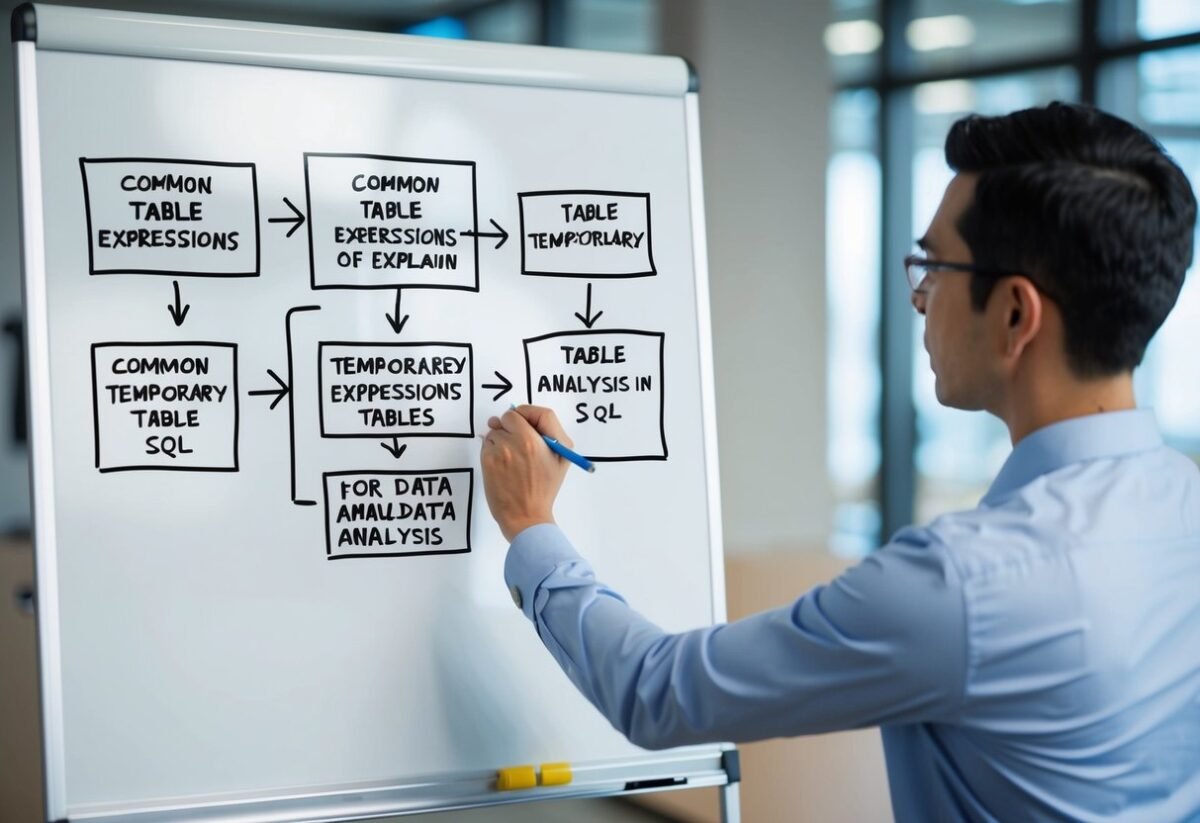

Can you explain the three main types of functions available in SQL Server?

SQL Server supports scalar functions, aggregate functions, and table-valued functions. Scalar functions return a single value, aggregate functions perform calculations on a set of values, and table-valued functions return a table data type. Each type serves different purposes in data manipulation and retrieval.