Machine learning is a powerful branch of artificial intelligence that enables computers to learn from data and make decisions or predictions without explicit programming. This technology has become essential for modern innovation, impacting industries ranging from healthcare to finance.

At its core, machine learning uses algorithms to analyze patterns in data, which can lead to highly efficient and effective problem-solving. By prioritizing data-driven insights, businesses and researchers can discover new opportunities and enhance existing processes.

The efficiency of machine learning lies in its ability to handle vast amounts of data and extract meaningful insights quickly. In fields like content management, machine learning algorithms can recommend personalized content, enhancing user experience.

This adaptability demonstrates how machine learning fosters innovation, enabling systems to evolve and improve over time. Ethical considerations are crucial, as these technologies influence many aspects of daily life and require careful oversight to ensure fairness and accountability.

Machine learning continues to advance, offering new tools and frameworks for developers and researchers. As technology evolves, the relationship between machine learning and artificial intelligence will likely grow stronger, driving future developments. Understanding these concepts can empower people to leverage machine learning effectively in their pursuits.

Key Takeaways

- Machine learning transforms data into actionable insights.

- Ethical considerations are essential in deploying machine learning.

- Advancements in AI and machine learning spur innovation.

Fundamentals of Machine Learning

Machine learning is a field that focuses on creating algorithms that allow computers to learn from data. It relies on recognizing patterns and making predictions. This section covers what machine learning is, how it differs from traditional programming, and the main types of machine learning approaches.

Defining Machine Learning

Machine learning involves teaching computers to learn from data without being explicitly programmed for specific tasks. It is a subfield of artificial intelligence focused on learning patterns and making predictions based on data.

Algorithms are used to process data, identify patterns, and improve over time. The goal is to develop systems capable of adapting to new data, enabling them to solve complex problems. This is different from traditional software, which follows predefined instructions.

Machine Learning vs. Traditional Programming

Traditional programming requires explicit instructions for each task a machine performs. Machine learning, on the other hand, enables computers to learn from data.

In machine learning, algorithms are trained with data, and they learn to recognize patterns and make decisions based on this learning.

Traditional Programming:

- Developers write step-by-step instructions.

- Computers strictly follow these instructions.

Machine Learning:

- Systems learn from data through training.

- Algorithms modify their approach as they process information.

This method is more adaptive, allowing systems to improve their functions as they receive more data.
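The contrast can be sketched in a few lines of Python. This is an illustrative toy, not a real spam filter: the feature (number of links in a message), the hand-picked rule, and the training examples are all hypothetical.

```python
# Traditional programming: the developer hard-codes the rule.
def is_spam_rule(num_links: int) -> bool:
    return num_links > 5  # threshold chosen by hand (hypothetical)


# Machine learning: the threshold is learned from labeled examples.
def learn_threshold(examples: list[tuple[int, bool]]) -> float:
    """Pick the cut-off that misclassifies the fewest training examples."""
    values = sorted({x for x, _ in examples})
    # Candidate thresholds: midpoints between consecutive distinct values.
    candidates = [(a + b) / 2 for a, b in zip(values, values[1:])]

    def errors(t: float) -> int:
        return sum((x > t) != label for x, label in examples)

    return min(candidates, key=errors)


# Hypothetical training data: (number of links, is_spam)
training_data = [(0, False), (1, False), (2, False),
                 (7, True), (9, True), (12, True)]
threshold = learn_threshold(training_data)
print(threshold)
```

If the training data changes, `learn_threshold` returns a different cut-off without anyone editing the code, which is the adaptivity described above.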

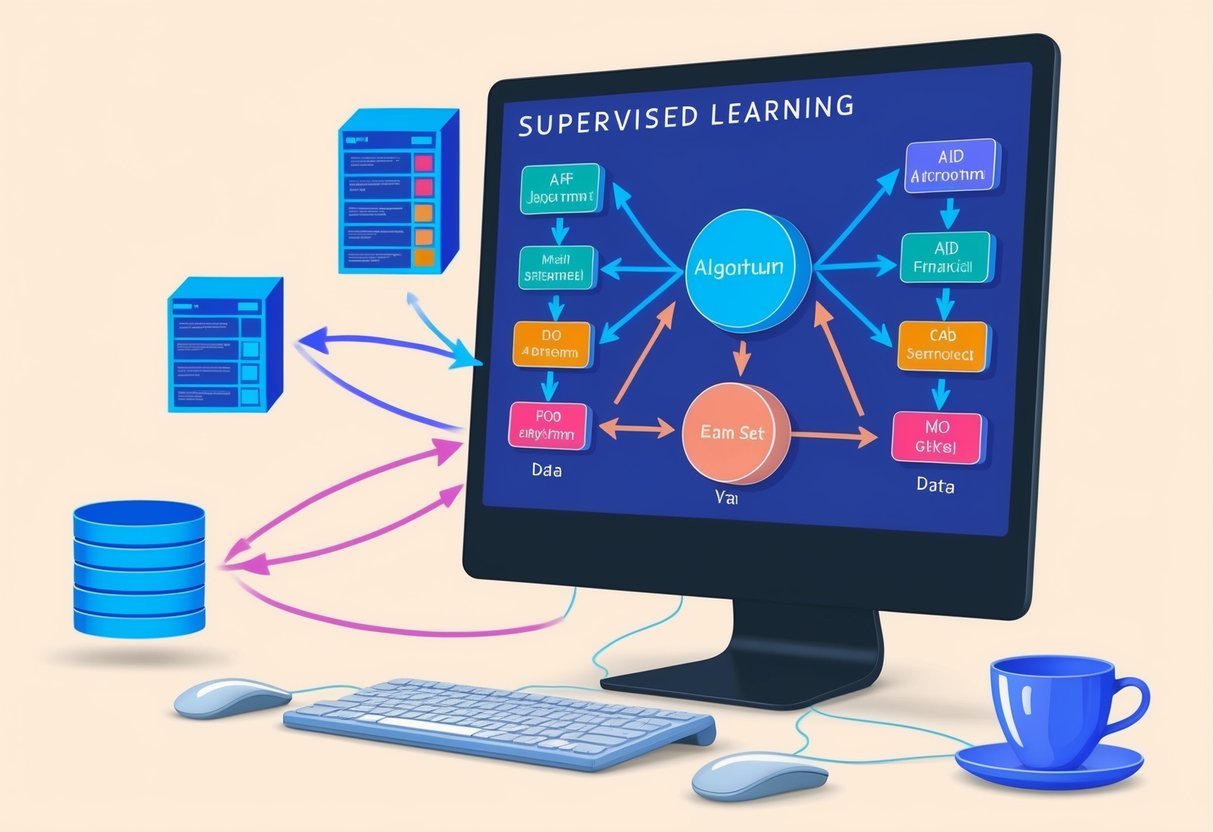

Types of Machine Learning

Machine learning can be categorized into three main types: supervised, unsupervised, and reinforcement learning. Each type uses different methods to analyze data and make predictions.

Supervised Learning involves training algorithms on labeled data, where the output is known. This approach is ideal for tasks like classification and regression.

Unsupervised Learning deals with unlabeled data, focusing on finding hidden patterns without pre-existing labels, making it useful for clustering and dimensionality reduction.

Reinforcement Learning uses rewards and punishments to guide learning, teaching algorithms to make decisions through trial and error. It is often used for robotics and game playing.

Each approach has its own techniques and applications. The main difference lies in the feedback available during learning: known labels, no labels, or a reward signal.
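To make the unsupervised case concrete, here is a minimal sketch of clustering with k-means on one-dimensional data. The data points and starting centers are made up for illustration, and real projects would normally use a library implementation rather than this hand-rolled loop.

```python
def kmeans_1d(points, centers, iterations=10):
    """Basic k-means on 1-D data, starting from the given centers."""
    centers = list(centers)
    clusters = [[] for _ in centers]
    for _ in range(iterations):
        # Assignment step: put each point in the cluster of its nearest center.
        clusters = [[] for _ in centers]
        for p in points:
            nearest = min(range(len(centers)), key=lambda i: abs(p - centers[i]))
            clusters[nearest].append(p)
        # Update step: move each center to the mean of its cluster
        # (an empty cluster keeps its previous center).
        centers = [sum(c) / len(c) if c else centers[i]
                   for i, c in enumerate(clusters)]
    return centers, clusters


# No labels are provided; the algorithm discovers the two groups itself.
points = [1.0, 2.0, 3.0, 10.0, 11.0, 12.0]
centers, clusters = kmeans_1d(points, centers=[0.0, 5.0])
print(centers, clusters)
```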

Data: The Fuel of Machine Learning

Data is central to machine learning, acting as the key element that drives models to make predictions and decisions. This section focuses on understanding data sets, the role of data mining and predictive analytics, and the significance of quality training data.

Understanding Data Sets

Data sets are crucial in the world of machine learning. They consist of collections of data points, often organized into tables. Each data point can include multiple features, which represent different aspects of the observation.

Labeled data sets are commonly used in supervised learning, providing examples with predefined outcomes. These labels guide the learning process.

The size and diversity of data sets influence the model’s ability to generalize and perform accurately across various tasks.

Machine learning often begins with selecting the right data set. The choice can impact the model’s effectiveness and reliability, making this an important step.
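As a rough illustration of these ideas, the sketch below represents a small labeled data set as rows of features plus a label, then holds out part of it for testing. The housing columns and values are invented, and `split_rows` is a hypothetical helper written for this example.

```python
import random

# Hypothetical toy data set: each row is one observation (data point).
rows = [
    {"sqft": 50, "rooms": 2, "price": 150},
    {"sqft": 65, "rooms": 3, "price": 200},
    {"sqft": 80, "rooms": 3, "price": 240},
    {"sqft": 95, "rooms": 4, "price": 300},
    {"sqft": 110, "rooms": 4, "price": 330},
    {"sqft": 120, "rooms": 5, "price": 380},
    {"sqft": 140, "rooms": 5, "price": 420},
    {"sqft": 160, "rooms": 6, "price": 500},
]
FEATURES = ["sqft", "rooms"]  # inputs the model sees
LABEL = "price"               # known outcome used in supervised learning


def split_features_labels(rows):
    """Separate each row into a feature vector and its label."""
    X = [[row[f] for f in FEATURES] for row in rows]
    y = [row[LABEL] for row in rows]
    return X, y


def split_rows(rows, test_fraction=0.25, seed=0):
    """Shuffle a copy of the rows and hold out a fraction for testing."""
    shuffled = rows[:]
    random.Random(seed).shuffle(shuffled)
    n_test = max(1, round(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]


X, y = split_features_labels(rows)
train_rows, test_rows = split_rows(rows)
print(len(train_rows), len(test_rows))
```

Holding out a test portion is what lets you check later whether a model generalizes beyond the examples it was trained on.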

Data Mining and Predictive Analytics

Data mining is the process of discovering patterns and extracting valuable information from large data sets. It helps in organizing data, making it easier to spot meaningful trends.

It is closely linked to predictive analytics, which uses historical data to predict future outcomes.

These techniques are essential for refining data and informing machine learning models. By identifying patterns, predictive analytics can anticipate trends and enhance decision-making processes.

When data mining and predictive analytics work together, they provide insights that improve model performance. This synergy helps in transforming raw data into actionable intelligence.

Importance of Quality Training Data

Training data quality is vital for successful machine learning. High-quality data improves model accuracy and reliability, while poor data can lead to incorrect predictions.

Important factors include accuracy, completeness, and the relevance of the data to the task at hand.

Preparing training data involves cleaning and preprocessing, filtering out noise and inaccuracies. This step ensures the data is fit for use.
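Two common preprocessing steps can be sketched as follows: dropping rows with missing values and rescaling each feature to the range 0 to 1. The raw values are made up, and these are only two of many possible cleaning steps; whether to drop or fill in missing values depends on the data.

```python
def drop_incomplete(rows):
    """Remove rows that contain a missing value (None)."""
    return [r for r in rows if all(v is not None for v in r)]


def min_max_scale(rows):
    """Rescale every column to the range [0, 1]."""
    columns = list(zip(*rows))
    lows = [min(c) for c in columns]
    highs = [max(c) for c in columns]
    return [
        # A constant column (hi == lo) is mapped to 0.0 to avoid dividing by zero.
        [(v - lo) / (hi - lo) if hi > lo else 0.0
         for v, lo, hi in zip(r, lows, highs)]
        for r in rows
    ]


# Hypothetical raw data with one incomplete row.
raw = [[1.0, 200.0], [None, 300.0], [3.0, 400.0], [5.0, 600.0]]
clean = drop_incomplete(raw)
scaled = min_max_scale(clean)
print(scaled)
```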

Effective use of training data leads to models that perform well and adapt to new data. Quality training data is the backbone of dependable machine learning models, shaping how they learn and make decisions.

Algorithms and Models

In machine learning, algorithms and models are central to understanding how systems learn from data and make predictions. Algorithms process data, whereas models are the final product that can make predictions on new data.

Introduction to Algorithms

Machine learning algorithms are sets of rules or instructions that a computer follows to learn from data. They help identify patterns and make predictions.

Among the many types of algorithms, Linear Regression and Decision Trees are quite popular. Linear Regression is used for predicting continuous outcomes by finding relationships between variables. Decision Trees, on the other hand, are used for classification and regression tasks by breaking down a dataset into smaller subsets while building an associated decision tree model incrementally.

Neural Networks are another type of algorithm, mostly used in deep learning. They consist of layers of nodes, like neurons in a brain, that process input data and learn to improve over time. These algorithms are crucial for training complex models.

Building and Training the Model

Building a machine learning model involves selecting the right algorithm and feeding it data to learn. The process typically starts with preparing data and choosing a suitable algorithm based on the task, like classification or regression.

During training, the algorithm processes the input data to build a model. For example, Linear Regression creates a line of best fit, while Decision Trees form a branching structure to classify data points. Neural Networks adjust weights within the network to minimize error in predictions.
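For the linear regression case, the line of best fit for a single input variable can be computed directly with ordinary least squares. The sketch below assumes one feature and uses invented data points that lie exactly on the line y = 2x + 1.

```python
def fit_line(xs, ys):
    """Ordinary least squares for one variable: returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Covariance of x and y, and variance of x (both unnormalized).
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


xs = [1.0, 2.0, 3.0, 4.0]
ys = [3.0, 5.0, 7.0, 9.0]  # generated from y = 2x + 1
slope, intercept = fit_line(xs, ys)

# The fitted model can now predict an outcome for an unseen input.
prediction = slope * 5.0 + intercept
print(slope, intercept, prediction)
```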

Training continues until the model achieves acceptable accuracy. Often, this is done by optimizing parameters and minimizing the loss function to find the best predictions.
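One common way to minimize a loss function is gradient descent. The sketch below is a deliberately small example that assumes a one-parameter model `y = w * x` and mean squared error as the loss; the learning rate, tolerance, and data are arbitrary illustrative choices.

```python
def mse(w, xs, ys):
    """Mean squared error of the model y = w * x."""
    return sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def train(xs, ys, learning_rate=0.05, tolerance=1e-6, max_steps=10_000):
    """Adjust w by gradient descent until the loss is acceptably small."""
    w = 0.0
    for _ in range(max_steps):
        # Derivative of the mean squared error with respect to w.
        grad = sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)
        w -= learning_rate * grad
        if mse(w, xs, ys) < tolerance:
            break  # "acceptable accuracy" reached
    return w


xs = [1.0, 2.0, 3.0]
ys = [3.0, 6.0, 9.0]  # generated from y = 3x
w = train(xs, ys)
print(w, mse(w, xs, ys))
```

The same loop structure (compute the loss, compute its gradient, nudge the parameters) underlies neural network training, although with many more parameters.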

Model Evaluation and Overfitting

Evaluating machine learning models involves assessing their accuracy and ability to generalize to new data. Metrics such as accuracy, precision, and recall are used to measure performance.
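For a binary classifier, these three metrics follow directly from counts of true and false positives and negatives. The labels and predictions below are made up for illustration.

```python
def classification_metrics(y_true, y_pred):
    """Return (accuracy, precision, recall) for binary labels 0/1."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # true positives
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false positives
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # false negatives
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # true negatives

    accuracy = (tp + tn) / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0  # of predicted positives, how many are right
    recall = tp / (tp + fn) if tp + fn else 0.0     # of actual positives, how many were found
    return accuracy, precision, recall


y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 0, 1, 0, 1, 0, 1, 1]
accuracy, precision, recall = classification_metrics(y_true, y_pred)
print(accuracy, precision, recall)
```

Here the model finds most of the positives (recall 0.75) but also raises two false alarms, which pulls precision down to 0.6.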

A significant challenge during evaluation is overfitting. Overfitting occurs when models become too complex and perform well on training data but poorly on unseen data. This happens when the model learns noise and irrelevant patterns.

To reduce overfitting, techniques like cross-validation, pruning of Decision Trees, and regularization methods are applied. These strategies help models stay accurate on training data while also performing well on new data sets.
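Cross-validation works by repeatedly holding out a different slice of the data for validation. The sketch below shows only the index-splitting part of k-fold cross-validation; `k_fold_indices` is a hypothetical helper, and in practice the data is usually shuffled first and a library routine is used.

```python
def k_fold_indices(n_samples, k):
    """Yield (train_indices, validation_indices) for each of k folds."""
    # Spread any remainder over the first folds so sizes differ by at most one.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0)
                  for i in range(k)]
    start = 0
    for size in fold_sizes:
        validation = list(range(start, start + size))
        train = list(range(0, start)) + list(range(start + size, n_samples))
        yield train, validation
        start += size


folds = list(k_fold_indices(n_samples=10, k=3))
for train_idx, val_idx in folds:
    print("train:", train_idx, "validate:", val_idx)
```

A model is trained once per fold and scored on that fold's validation indices; averaging the scores gives a more reliable estimate of performance on unseen data than a single split.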

Practical Applications of Machine Learning

Machine learning affects many aspects of life, from how people shop to how they drive. It improves efficiency in various sectors like healthcare and agriculture. Understanding these applications showcases its role in modern society.

Machine Learning in Everyday Life

Machine learning is woven into daily experiences. On platforms like Netflix, recommendation systems suggest shows based on past viewing habits. This personalization increases user engagement by suggesting content they are likely to enjoy.
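The general idea behind such recommendations can be sketched with a simple similarity measure. This is a generic toy example of user-based collaborative filtering, not a description of how Netflix or any other platform actually works; the users, titles, and ratings are invented.

```python
import math


def cosine(a, b):
    """Cosine similarity between two rating vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


# Hypothetical ratings (0 means "not watched").
titles = ["Drama A", "Drama B", "Sci-Fi C", "Sci-Fi D"]
ratings = {
    "ana": [5, 4, 0, 0],
    "ben": [5, 5, 4, 0],
    "cal": [0, 0, 5, 4],
}


def recommend(user, ratings, titles):
    """Suggest unseen titles liked by the most similar other user."""
    target = ratings[user]
    neighbor = max((u for u in ratings if u != user),
                   key=lambda u: cosine(target, ratings[u]))
    unseen = [(ratings[neighbor][i], titles[i])
              for i in range(len(titles))
              if target[i] == 0 and ratings[neighbor][i] > 0]
    # Highest-rated suggestions first.
    return [title for _, title in sorted(unseen, reverse=True)]


print(recommend("ana", ratings, titles))
```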

In transportation, autonomous vehicles use machine learning to improve navigation and safety. These cars process real-time data to make driving decisions, enhancing both convenience and security.

Customer service also benefits through chatbots. These AI-driven tools provide quick responses to customer inquiries, streamlining support processes and freeing human agents to handle complex issues.

Sector-Specific Use Cases

In healthcare, machine learning aids in diagnosing diseases. Algorithms analyze medical images and patient data to help doctors make informed decisions. This can lead to early detection and better treatment outcomes.

In banking, fraud detection systems use machine learning algorithms to flag suspicious transactions. These systems learn from past fraud patterns to identify potential threats and protect customer accounts.

The retail sector leverages machine learning for inventory management. Algorithms forecast demand and optimize stock levels, reducing waste and ensuring product availability for consumers.

Impact on Society and Businesses

Machine learning significantly transforms society and businesses. In agriculture, it optimizes crop yield by analyzing satellite images and environmental data. This enables farmers to make informed decisions about planting and harvesting.

For businesses, machine learning enhances decision-making processes. It provides insights from large datasets, helping companies understand market trends and customer preferences.

Businesses also use machine learning to improve productivity. Automation of routine tasks allows humans to focus on more strategic activities. This technological advance drives efficiency and innovation, leading to competitive advantages in various industries.

Artificial Intelligence and Machine Learning

Artificial Intelligence (AI) and Machine Learning (ML) are core components of modern technology. AI aims to create intelligent systems, while ML focuses on enabling these systems to learn and improve from data. Understanding their connection and unique roles in the tech landscape is essential.

Link Between AI and Machine Learning

AI is an expansive field that involves creating machines capable of performing tasks that typically require human intelligence. This includes areas like computer vision and speech recognition.

Machine Learning is a subset of AI that provides systems with the ability to learn from experience. This learning capability is achieved without being explicitly programmed, making ML crucial for developing smarter systems.

ML uses algorithms to find patterns in data. The connection between AI and ML is that ML enables AI applications to adapt and improve their performance over time by learning from data. By incorporating ML, AI systems can enhance capabilities such as predicting outcomes and automating decisions.

Subfields of AI

AI comprises several subfields, each focusing on a specific aspect of intelligence. Deep learning, itself a branch of machine learning, uses multi-layer neural networks and has improved tasks like image and speech recognition.

Another important subfield is computer vision, which allows machines to interpret and understand visual information from the world.

Natural language processing (NLP) is also a key subfield focusing on enabling machines to understand and interact using human language. This involves tasks like language translation and text analysis. Speech recognition further extends NLP by enabling systems to convert spoken language into text. These subfields together drive the advancement of AI in understanding and replicating human-like cognitive functions.

Technological Tools and Frameworks

Machine learning tools and frameworks empower developers to build, test, and deploy models efficiently. These technologies include comprehensive platforms and open-source tools that enhance productivity and innovation in machine learning.

Machine Learning Platforms

Machine learning platforms are pivotal in supporting complex model development and management. IBM offers a robust platform with Watson, which allows businesses to integrate AI into their operations. This platform is well-known for its scalability and extensive toolkit.

Google Cloud AI Platform provides a seamless environment for training and deploying models. It supports popular frameworks like TensorFlow and offers tools for data preprocessing and feature engineering. Users can leverage its AutoML capabilities to automate the model-building process.

These platforms matter to organizations that want to apply machine learning across their products and operations. Google Translate, for example, is a language translation service whose quality depends on machine learning.

Open-Source Tools

Open-source tools offer flexibility and community support, making them essential for machine learning practitioners.

TensorFlow is a widely-used library known for its vast community and comprehensive resources. It provides tools for building neural networks and deploying them on different platforms.

Scikit-learn is another popular choice, providing simple tools for data analysis and modeling. It’s user-friendly and integrates well with other libraries, making it ideal for beginners and experts alike.

These tools help automate the development of machine learning models. Streamlining routine tasks improves productivity and accuracy in data-driven projects, which is why automation has become increasingly important in machine learning workflows.

Machine Learning in Content and Media

Machine learning transforms how media and content are created and accessed. It plays a crucial role in text analysis, social media insights, and processing of images and videos.

Text and Social Media Analysis

Machine learning enhances text and social media analysis by identifying patterns in data. Algorithms mine large datasets from social media platforms to derive meaningful insights.

Predictive models excel in understanding user preferences and trends, which helps content creators produce engaging material tailored for their audience.

Machine learning also utilizes natural language processing to interpret user sentiment. By analyzing text content, it distinguishes between positive and negative feedback, aiding companies in refining their strategies. This technology aids in managing vast amounts of data by categorizing them efficiently.

Image and Video Processing

Pattern recognition in images and videos is greatly improved with machine learning. Companies like Netflix employ machine learning to personalize recommendations by analyzing viewing habits.

Models analyze visual data, leading to more effective promotional media.

Image and video processing involves identifying key elements in frames, such as faces or objects, which improves how content is tagged and searched.

Custom models, such as those developed with TensorFlow, can be utilized to extract insights from visual content. This streamlines content creation and enhances the viewer experience by delivering relevant media faster.

Ethical Considerations in Machine Learning

Machine learning technologies have rapidly changed various industries. Along with this growth, there are significant ethical challenges. Addressing bias, safeguarding privacy, and preventing discrimination are crucial for responsible AI development.

Bias and Discrimination

Bias in machine learning can occur when models learn skewed information from the data used to train them. This can lead to unfair outcomes.

For example, if a dataset lacks diversity, the resulting model might favor certain groups over others. Such issues can negatively affect decisions in areas like healthcare, hiring, and criminal justice.

Mitigating bias is vital. Developers need to evaluate training data for representation. Techniques like resampling and reweighting can help balance datasets.
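Reweighting can be illustrated with a common inverse-frequency heuristic: each example is weighted so that every group contributes equally to training overall. The group labels below are hypothetical, and balancing counts is only one part of addressing bias.

```python
from collections import Counter


def balanced_weights(labels):
    """Weight each class inversely to its frequency.

    weight = n_samples / (n_classes * class_count), so the total weight
    of every class ends up the same.
    """
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {label: n_samples / (n_classes * count)
            for label, count in counts.items()}


# Hypothetical imbalanced data set: group "a" is over-represented.
labels = ["a"] * 8 + ["b"] * 2
weights = balanced_weights(labels)
print(weights)

# Total weight per class after reweighting.
totals = {label: weights[label] * labels.count(label) for label in weights}
print(totals)
```

Many training routines accept per-class or per-sample weights like these, so under-represented groups are not drowned out by the majority.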

Moreover, diverse teams should oversee model development to spot potential discrimination early. Embedding fairness checks into machine learning processes further reduces bias risks.

Privacy and Data Security

Privacy is a major concern in machine learning, as models often rely on vast amounts of personal data. Protecting this data is essential to prevent misuse and maintain user trust.

Data breaches and leaks can expose sensitive information, leading to identity theft or unauthorized surveillance.

To ensure data security, encryption and anonymization are crucial practices. Developers should minimize data collection, only using what is necessary for model functions.
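Data minimization and identifier protection can be sketched as below. Note the assumptions: keyed hashing of an identifier is pseudonymization rather than full anonymization, the secret key shown is a placeholder that would need proper key management, and the record fields are invented. Encryption of stored data is a separate measure not shown here.

```python
import hashlib
import hmac

# Placeholder only: a real key must be generated and stored securely.
SECRET_KEY = b"replace-with-a-secret-key"


def pseudonymize(identifier: str) -> str:
    """Replace an identifier with a keyed SHA-256 digest (HMAC)."""
    return hmac.new(SECRET_KEY, identifier.encode("utf-8"),
                    hashlib.sha256).hexdigest()


def minimize(record: dict, needed_fields: list[str]) -> dict:
    """Keep only the fields the model actually needs."""
    return {field: record[field] for field in needed_fields}


# Hypothetical customer record.
record = {"email": "ana@example.com", "name": "Ana", "age": 34, "purchases": 12}

safe = minimize(record, ["age", "purchases"])
safe["user_key"] = pseudonymize(record["email"])  # stable key, no raw email
print(safe)
```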

Regular security audits and robust access controls help safeguard data against unauthorized access. Additionally, organizations must comply with privacy regulations like GDPR to protect individuals’ rights and secure their information.

Advancing the Field of Machine Learning

Machine learning continues to evolve with breakthroughs transforming both technology and society. This advancement is propelled by innovations in algorithms and predictions about future applications.

Pioneering Research and Innovations

Arthur Samuel, one of the early pioneers in machine learning, set the foundation with his work on computer learning in the 1950s. Today, research has expanded into deep learning, natural language processing, and reinforcement learning. These areas drive progress in developing intelligent systems.

A key innovation is the improvement of neural networks, which have surpassed many previous performance benchmarks.

Machine learning algorithms now enable real-time decision-making, enhancing technologies like self-driving cars and voice assistants. Tools like chatbots are becoming more sophisticated, using advances in language processing to better understand human interaction.

Future Trends and Predictions

The future of machine learning involves numerous exciting possibilities. There are predictions of AI reaching human-level intelligence in certain tasks.

Projects are underway to enhance machine learning models with increased ethical considerations, aiming to minimize risks.

Emerging trends emphasize transparency and fairness in AI. Industry experts foresee a rise in personalized AI applications, like virtual health assistants and more interactive chatbots.

Machine learning holds promise for sectors such as healthcare, finance, and education. Its potential could reshape how individuals and businesses operate, driving efficiency and innovation.

Learning and Understanding Machine Learning

Machine learning involves using algorithms to teach computers to learn from data, identify patterns, and make decisions. There are various educational resources available to build a strong foundation and advance a career in this field.

Educational Resources

To gain knowledge in machine learning, there are many valuable resources online and offline.

Websites like GeeksforGeeks offer tutorials that cover basic to advanced topics. Similarly, Google’s Machine Learning Crash Course provides modules on the core principles of machine learning, focusing on regression and classification models.

For those seeking formal education, platforms like Coursera offer courses with comprehensive study plans. These courses help learners grasp key concepts such as representation, generalization, and experience in solving real-world learning problems.

Books and academic journals are also crucial for deepening understanding, exploring topics like data representation and algorithm efficiency.

Building a Career in Machine Learning

Establishing a career in machine learning requires a blend of formal education and practical experience.

Many successful professionals begin with degrees in computer science, statistics, or related fields. Building a portfolio showcasing experience with machine learning projects can significantly enhance job prospects.

Networking and joining communities can provide insights into the latest trends and challenges in the field. Attending conferences and workshops may also offer opportunities to connect with industry experts and potential employers.

As for job roles, opportunities range from data analyst to machine learning engineer, each requiring a solid grasp of mathematical concepts and proficiency in programming languages such as Python and R.

Frequently Asked Questions

Machine learning encompasses various algorithms and tools, offering applications across numerous fields. Understanding its distinction from artificial intelligence and the role of data science enhances comprehension. Beginners and experts alike benefit from grasping these key elements.

What are the types of machine learning algorithms and their applications?

Machine learning algorithms are typically divided into three types: supervised learning, unsupervised learning, and reinforcement learning.

Supervised learning uses labeled data and is commonly applied in email filtering and fraud detection. Unsupervised learning finds patterns in data and is used in customer segmentation. Reinforcement learning is applied in robotics and gaming to improve decision-making processes.

How can beginners start learning about machine learning?

Beginners can start by enrolling in online courses or tutorials that introduce basic concepts such as statistics and programming languages like Python. Books and webinars also offer accessible learning paths.

It is beneficial to work on small projects and use platforms like Kaggle to gain practical experience.

What tools are essential for machine learning projects?

Popular tools for machine learning projects include programming languages like Python and R, along with libraries such as TensorFlow and PyTorch.

Jupyter Notebook provides an interactive coding environment. Tools like Scikit-learn and Pandas assist in data manipulation and analysis, making them integral to data-driven projects.

What distinguishes machine learning from artificial intelligence?

Machine learning is a subset of artificial intelligence focused on developing systems that learn and adapt through experience. While AI encompasses a broader range of technologies including natural language processing and robotics, machine learning specifically concentrates on algorithm development and data interpretation.

What is the role of data science in machine learning?

Data science is crucial in machine learning as it involves collecting, processing, and analyzing large datasets to create accurate models.

It provides the techniques and methods needed to extract insights and patterns, forming the basis for model training and evaluation. The collaboration between data scientists and machine learning engineers optimizes data usage.

How is machine learning applied in real-world scenarios?

Machine learning is extensively applied in various industries. It aids in improving medical diagnostics through image recognition.

In finance, it’s used for algorithmic trading and risk management.

Retail businesses use it for personalized advertising and inventory management. Each application aims to optimize performance and decision-making processes through data-driven insights.