Understanding Pandas and Its Ecosystem

Pandas is a powerful tool in data science. It helps analysts and scientists manage and analyze data. Its integration with Python and other libraries like NumPy and SciPy enhances its capabilities, making it an essential part of data processing and analysis workflows.

The Role of Pandas in Data Science

Pandas is crucial for data manipulation and analysis. It provides structures like DataFrames and Series, which are pivotal for organizing and working with data efficiently. These structures allow for seamless handling of large datasets, which is important in data science tasks.

Data scientists often rely on Pandas for tasks like data cleaning and transformation. Its functions simplify operations such as filtering data, filling in missing values, and rearranging datasets. This efficiency is why pandas is preferred in many data science projects.

Moreover, Pandas offers built-in plotting methods, which rely on Matplotlib under the hood. These make initial data exploration straightforward, allowing users to plot graphs directly from a DataFrame or Series and draw insights quickly.

Python and Its Libraries: NumPy and SciPy

Python’s strength lies in its robust ecosystem of libraries suited for various aspects of data science. NumPy is vital for numerical operations, providing support for arrays and matrices. It also offers mathematical functions to perform operations on these arrays swiftly.

Pandas builds on NumPy, making it even more powerful. It enhances data handling through advanced indexing and labeling, allowing more complex data manipulations than what NumPy alone offers.
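The difference that labeling makes can be sketched as follows. This is a minimal illustration with made-up values and labels: the same addition is done once on two pandas Series and once on their underlying NumPy arrays.

```python
import numpy as np
import pandas as pd

# Two labeled Series whose labels only partly overlap (illustrative data).
a = pd.Series([1.0, 2.0, 3.0], index=["x", "y", "z"])
b = pd.Series([10.0, 20.0, 30.0], index=["y", "z", "w"])

# pandas aligns on labels before adding; labels present in only one
# Series produce NaN in the result.
aligned_sum = a + b

# The underlying NumPy arrays are added purely by position.
positional_sum = a.to_numpy() + b.to_numpy()

print(aligned_sum)
print(positional_sum)
```

Label alignment is what allows data from different sources to be combined without first sorting or trimming it by hand.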

On the other hand, SciPy extends the capabilities of NumPy by adding modules for optimization, integration, and statistical functions. In combination, pandas, NumPy, and SciPy enable comprehensive data modeling and analysis, forming an integral part of a data scientist’s toolkit. Their synergy allows for a seamless workflow from raw data processing to advanced statistical computation.

Installing and Importing Pandas

Pandas is essential for data manipulation and analysis in Python. Proper installation and importing methods ensure smooth use of its powerful features.

Setting up Your Environment

Before using the pandas library, it’s important to have a suitable environment. Most users opt for Anaconda, a popular distribution that includes pandas and other data science tools.

Anaconda simplifies the setup with its package manager, Conda. To get started, download Anaconda from its official site. After installation, open the Anaconda Navigator and create a new environment if necessary.

Alternatively, pandas can be installed using pip, Python’s package installer. Run the command pip install pandas in the terminal or command prompt. Ensure Python is already installed on your system.

Whether using Conda or pip, confirm the installation by executing import pandas as pd in a Python script or interactive shell.

Import Pandas Syntax

After installation, importing pandas is straightforward. Use the command import pandas as pd. The alias pd is a widely accepted convention, which makes code sharing and collaboration easier. It also reduces repetition, since pandas is referenced frequently in scripts.

Here’s a simple example to demonstrate importing and using pandas:

import pandas as pd

data = {'Name': ['Alice', 'Bob'], 'Age': [25, 30]}

df = pd.DataFrame(data)

print(df)

In this snippet, import pandas as pd brings pandas into the script. The example creates a DataFrame, a core structure for data handling in pandas, showcasing its power and ease of use.

For detailed pandas capabilities, consult their comprehensive documentation available online.

Pandas Series: The One-Dimensional Array

A Pandas Series is a key component of the Pandas library, designed as a one-dimensional array with labeled indices. It is similar to a list or array, offering powerful capabilities for managing data. Users can work with a sequence of values linked to a specific label or index.

Creating Pandas Series

A Pandas Series can be created from diverse data types like scalar, list, or dictionary. The simplest form is from a scalar, where the value is repeated across indices:

import pandas as pd

s = pd.Series(5, index=[0, 1, 2])

Creating a series using a list or an array is common. The values are directly taken as the series content, and an automatic index is provided:

data = [10, 20, 30]

s = pd.Series(data)

Using a dictionary to create a Series maps keys as indices and values as the series data:

data = {'a': 1, 'b': 2, 'c': 3}

s = pd.Series(data)

Understanding these basics helps in efficiently utilizing Pandas Series for data storage and manipulation.

Series Attributes and Methods

Pandas Series comes with various attributes and methods that enhance its functionality.

Attributes

Some attributes like index, values, and dtype offer basic information about the series.

- s.index returns the index labels.
- s.values gives the data values.
- s.dtype shows the data type of the entries.

Methods

Meanwhile, methods such as head(), tail(), and describe() provide data analysis tools.

- s.head(n) shows the first n elements.
- s.tail(n) displays the last n elements.
- s.describe() offers statistics like count, mean, and standard deviation.

Learning these attributes and methods is essential for maximizing the potential of a Pandas Series.
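The attributes and methods listed above can be tried on a small Series. The values and labels here are invented purely for illustration.

```python
import pandas as pd

# A small Series with string labels (illustrative data).
s = pd.Series([10, 20, 30, 40], index=["a", "b", "c", "d"])

labels = list(s.index)   # the index labels
values = s.values        # the data as a NumPy array
dtype = s.dtype          # an integer dtype for this data

first_two = s.head(2)    # first two elements
last_two = s.tail(2)     # last two elements
summary = s.describe()   # count, mean, std, min, quartiles, max

print(labels, dtype)
print(summary)
```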

Working with DataFrames

DataFrames in Pandas are essential for handling large sets of tabular data. They allow users to organize, manipulate, and analyze data efficiently using a familiar format that resembles a spreadsheet.

Understanding DataFrames

A DataFrame is a two-dimensional, size-mutable, and heterogeneous tabular data structure with labeled axes (rows and columns). Each column in a DataFrame can be of different types, making it versatile for data analysis.

In Pandas, a DataFrame consists of the data itself, an index that labels the rows, and a set of column labels. The index is an important part, as it allows users to access data quickly. It can be a default integer range or be set from a specific column.

Users can perform operations like filtering, aggregation, and more using index labels. Rows and columns can be easily accessed and modified through various methods. For example, the .loc and .iloc accessors are commonly used.

DataFrames also support a wide array of operations, including merging, joining, and reshaping, providing robust options for any data analysis task.
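As a rough sketch of how the .loc and .iloc accessors differ, the following uses a tiny DataFrame with hypothetical row labels r1 to r3.

```python
import pandas as pd

# Illustrative data with explicit row labels.
df = pd.DataFrame(
    {"Name": ["Alice", "Bob", "Cara"], "Age": [25, 30, 35]},
    index=["r1", "r2", "r3"],
)

age_by_label = df.loc["r2", "Age"]   # label-based lookup
age_by_position = df.iloc[1, 1]      # position-based lookup (row 1, column 1)

label_slice = df.loc["r1":"r2"]      # label slices include both endpoints
position_slice = df.iloc[0:2]        # position slices exclude the stop

# Modify a single cell through .loc.
df.loc["r3", "Age"] = 36

print(df)
```

Note that the two slices return the same rows even though they are written differently, because label slices are inclusive while position slices are not.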

Constructing DataFrames from Different Sources

Pandas DataFrames can be constructed from various data sources, making them adaptable to different data needs. A common source is a dictionary, where the keys become column headers and the values are data points.

DataFrames can also be created from CSV files, Excel spreadsheets, SQL databases, and other formats. Each data source has its own method.

For instance, pd.read_csv() helps read data from a CSV file, while pd.read_sql() fetches data from SQL databases.

DataFrames handle missing data gracefully, allowing users to fill or drop these values as necessary. By offering seamless integration with a variety of data types and formats, Pandas makes data preparation and analysis straightforward.
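A minimal sketch of two of these sources is shown here. To keep it self-contained, the CSV content is an in-memory string wrapped in io.StringIO rather than a file on disk; in practice a file path would be passed to pd.read_csv() instead. The data is made up.

```python
import io

import pandas as pd

# From a dictionary: keys become column headers.
df_from_dict = pd.DataFrame({"Name": ["Alice", "Bob"], "Age": [25, 30]})

# From CSV text: Bob's age is left empty on purpose.
csv_text = "Name,Age\nAlice,25\nBob,\n"
df_from_csv = pd.read_csv(io.StringIO(csv_text))

# The empty field is read as a missing value.
missing_count = df_from_csv["Age"].isna().sum()

print(df_from_dict)
print(df_from_csv)
print(missing_count)
```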

Indexing and Selecting Data

Indexing and selecting data in Pandas allow users to efficiently access and manipulate data within a DataFrame or Series. Understanding index objects and advanced indexing techniques is critical for effective data handling.

The Power of Index Objects

Index objects are central to data manipulation in Pandas. They serve as the labels for the rows and columns in both DataFrames and Series. Each Index points to a piece of data, making it easy to locate and manage information.

Pandas Series and DataFrames use these Index objects to provide fast and reliable access to data elements. Series, which are essentially one-dimensional arrays, use Index to reference each data point. In DataFrames, indices allow access to rows and provide a way to align data when performing operations involving multiple DataFrames.

Data can be selected both by label and by integer position. This dual ability aids in flexible data selection, enabling operations such as slicing. For example, accessing rows by position or by index label becomes straightforward, enhancing the versatility of data manipulation tasks.

Advanced Indexing Techniques

Advanced indexing techniques in Pandas enhance the ability to select and manipulate data with precision. These techniques include boolean indexing, fancy indexing, and multi-level indexing.

Boolean indexing involves creating logical statements to filter data. It allows users to extract subsets of data that meet specific criteria. For example, selecting rows where a column value satisfies a condition is a practical use of boolean indexing.

Fancy indexing, a term borrowed from NumPy, selects specific rows or columns by passing a list or array of positions (or labels) rather than a single value or slice. This method is useful for rearranging data or extracting non-contiguous sections of the dataset.

Multi-level indexing, or hierarchical indexing, lets users work with data that has multiple indices. This structure enables complex data manipulation by allowing operations on different levels of the index. It is particularly powerful for managing large datasets with multiple variables, facilitating tasks like aggregations or complex slicing.

Understanding and applying these advanced techniques can significantly enhance data handling capabilities in Pandas.
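The three techniques can be sketched on one small DataFrame. The column names and sales figures are hypothetical and chosen only to make the results easy to check by eye.

```python
import pandas as pd

df = pd.DataFrame(
    {
        "region": ["north", "north", "south", "south"],
        "year": [2022, 2023, 2022, 2023],
        "sales": [100, 120, 80, 95],
    }
)

# Boolean indexing: keep rows where the condition is True.
high_sales = df[df["sales"] > 90]

# Integer-array ("fancy") indexing: pick rows 3 and 0, in that order.
reordered = df.iloc[[3, 0]]

# Multi-level indexing: region and year together label each row.
indexed = df.set_index(["region", "year"])
north_rows = indexed.loc["north"]                   # all years for one region
south_2023 = indexed.loc[("south", 2023), "sales"]  # one specific cell

print(high_sales)
print(reordered)
print(north_rows)
print(south_2023)
```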

Data Manipulation with Pandas

Data manipulation with Pandas offers powerful tools for transforming and analyzing datasets. Key skills include handling missing values and applying data aggregation using the groupby function.

Handling Missing Values

Handling missing values is crucial when working with data in Pandas. Missing data can lead to gaps in analysis, resulting in inaccurate conclusions. In Pandas, missing values are usually represented as NaN (Not a Number); None, NaT for datetimes, and pd.NA for nullable types are also treated as missing.

Several methods help manage these gaps in data.

One common approach is using fillna(), which replaces NaN with specified values, like zero or the mean of a column. For example, to fill missing values with the column mean:

df['column_name'] = df['column_name'].fillna(df['column_name'].mean())

The dropna() function is another useful tool. By default it removes every row that contains a missing value, preserving only complete rows; passing axis=1 drops columns instead.

df.dropna(inplace=True)

These methods ensure data integrity, allowing for more reliable analysis.
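A self-contained sketch of both approaches follows, using a tiny made-up DataFrame. It returns new objects instead of modifying the original in place, which is one reasonable style rather than the only one.

```python
import numpy as np
import pandas as pd

# Illustrative data with one missing value per column.
df = pd.DataFrame({"score": [1.0, np.nan, 3.0], "label": ["a", "b", None]})

# Count missing values in each column.
missing_per_column = df.isna().sum()

# Fill the numeric gap with the column mean (2.0 for this data).
filled = df.assign(score=df["score"].fillna(df["score"].mean()))

# Keep only rows with no missing values at all.
complete_rows = df.dropna()

print(missing_per_column)
print(filled)
print(complete_rows)
```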

Data Aggregation with GroupBy

Data aggregation helps in summarizing data and finding patterns. The groupby function in Pandas enables this by grouping data based on specified columns and applying aggregate functions, such as sum, mean, or count, to these groups.

Here’s an example of how to use groupby:

grouped = df.groupby('category_column')

result = grouped['value_column'].sum()

This code groups the data by category_column and calculates the total of value_column for each category.

The groupby function is especially useful in complex data analyses, as it allows for detailed summaries and insights. It forms the foundation for many advanced operations in data science, helping to make sense of large datasets efficiently.
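For a version that runs on its own, the sketch below builds a small DataFrame with the same column names used above and computes several aggregates at once with agg(). The values are invented.

```python
import pandas as pd

df = pd.DataFrame(
    {
        "category_column": ["a", "b", "a", "b", "a"],
        "value_column": [1, 2, 3, 4, 5],
    }
)

# One aggregate per group.
totals = df.groupby("category_column")["value_column"].sum()

# Several aggregates per group in a single call.
summary = df.groupby("category_column")["value_column"].agg(["sum", "mean", "count"])

print(totals)
print(summary)
```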

Mastering Data Operations

Mastering data operations involves performing calculations and organizing data for better analysis. The use of tools like Pandas in Python makes these tasks more efficient and effective.

Mathematical Operations on Data

Mathematical operations are critical in data analysis. Pandas makes it easy to perform calculations directly on data within Series or DataFrame objects. Users can apply operations like addition, subtraction, multiplication, and division across entire columns. This allows swift manipulation of large datasets without needing loops.

Pandas also supports functions like sum(), mean(), and max() to extract key statistics from data. Called on a DataFrame, they return one value per column by default; called on a Series, they return a single value.

Passing the axis argument applies an operation down each column (axis=0, the default) or across each row (axis=1). For complex data manipulation, boolean filters and array functions enhance flexibility, allowing precise control over what data is calculated.
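These operations might look like the following in practice. The price and quantity columns are hypothetical.

```python
import pandas as pd

df = pd.DataFrame({"price": [2.0, 3.5, 4.0], "quantity": [10, 4, 5]})

# Column arithmetic works element by element, with no explicit loop.
df["revenue"] = df["price"] * df["quantity"]

total_revenue = df["revenue"].sum()   # a single value from a Series
column_means = df.mean()              # one value per column (axis=0)
row_max = df.max(axis=1)              # one value per row (axis=1)

# A boolean filter restricts which rows enter the calculation.
big_orders_total = df.loc[df["quantity"] > 4, "revenue"].sum()

print(df)
print(total_revenue, big_orders_total)
```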

Sorting and Organizing Data

Organizing data using sorting operations is vital for clarity and efficient data processing.

Pandas provides a sort_values() function that arranges data by a specific column, either ascending or descending. This helps highlight important data points and identify trends.

Multi-level sorting is supported, letting users specify multiple columns to sort, such as sorting first by date and then by value.

Another powerful feature is the use of the sort_index() method, which rearranges data based on its indices.

By carefully structuring data, users gain clearer insights, aiding in trend analysis and decision-making.
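The sorting options described above can be sketched as follows, using a made-up DataFrame with a date column, a value column, and string index labels.

```python
import pandas as pd

df = pd.DataFrame(
    {
        "date": pd.to_datetime(["2024-01-02", "2024-01-01", "2024-01-02"]),
        "value": [5, 7, 9],
    },
    index=["c", "a", "b"],
)

# Sort by one column, largest first.
by_value_desc = df.sort_values("value", ascending=False)

# Sort by date ascending, then by value descending within each date.
by_date_then_value = df.sort_values(["date", "value"], ascending=[True, False])

# Sort by the index labels.
by_index = df.sort_index()

print(by_value_desc)
print(by_date_then_value)
print(by_index)
```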

Exploring and Cleaning Data

Pandas is essential for data cleaning and exploration. These processes help refine data into a valuable resource for analysis and gaining insights.

The Art of Data Cleaning

Data cleaning is crucial in preparing datasets for analysis. It involves identifying and correcting errors, filtering out irrelevant details, and filling in missing values.

A common technique is dropping duplicate entries with drop_duplicates() to ensure data integrity. Using methods such as fillna() in Pandas, missing values can be effectively handled, enhancing the dataset’s reliability.

Another important aspect is Pandas Series and Index objects, which simplify indexing tasks. Indexing helps locate data within a DataFrame, making it easier to spot inconsistencies. Careful attention to data types is also necessary, ensuring compatibility throughout data operations.
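A short cleaning pass combining these ideas could look like the sketch below. The raw data is invented: it contains a duplicated row, an id column stored as text, and a missing city. The placeholder value "unknown" is an arbitrary choice for illustration.

```python
import pandas as pd

raw = pd.DataFrame(
    {
        "id": ["1", "2", "2", "3"],
        "city": ["Oslo", "Lima", "Lima", None],
    }
)

# Remove exact duplicate rows.
deduplicated = raw.drop_duplicates()

# Convert the id column to integers and fill the missing city.
cleaned = deduplicated.assign(
    id=deduplicated["id"].astype(int),
    city=deduplicated["city"].fillna("unknown"),
)

print(cleaned)
print(cleaned.dtypes)
```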

Exploratory Data Analysis Techniques

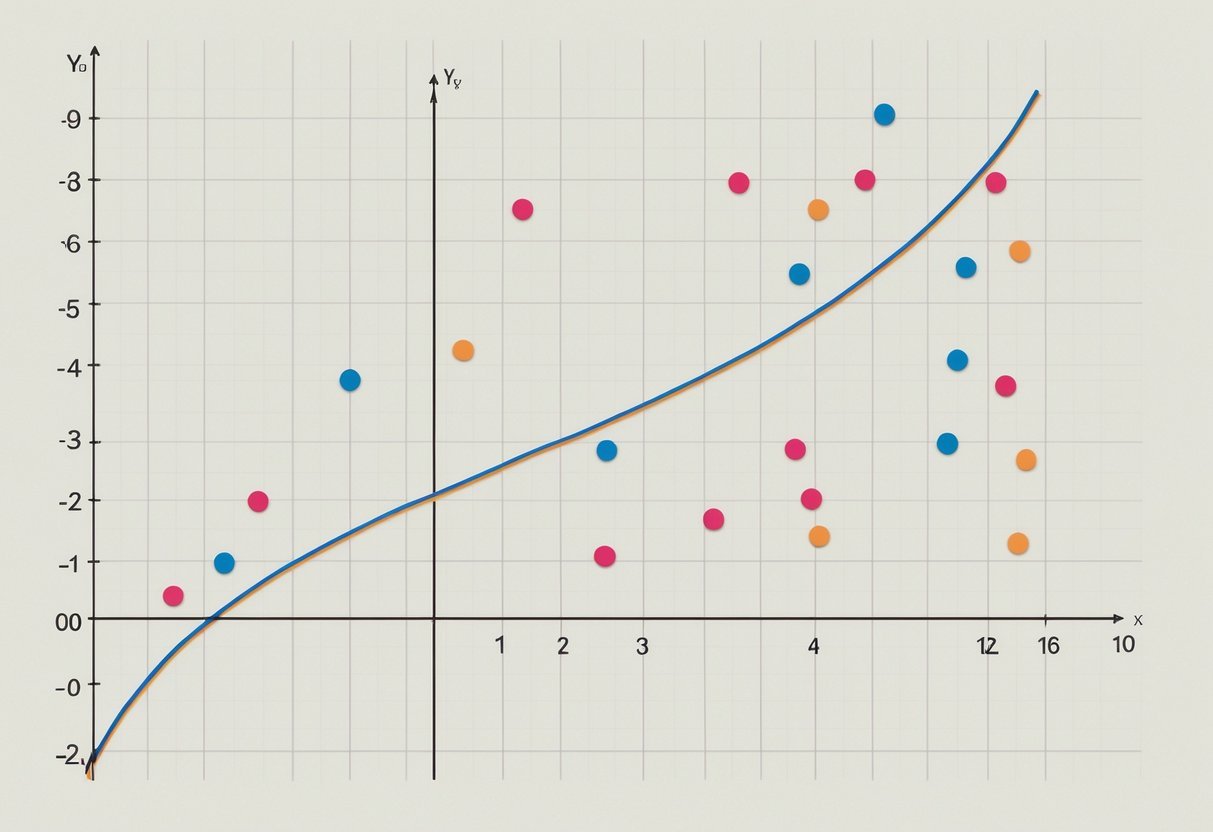

Exploratory Data Analysis (EDA) involves using statistical graphics and data visualization to understand data structures and detect patterns.

Techniques like scatter plots and histograms can reveal correlations and data distributions. These methods help in verifying initial assumptions.

EDA often uses descriptive statistics to describe central tendencies and variabilities. Doing so supports a more in-depth data exploration, highlighting essential attributes for deeper analysis. Using libraries like Matplotlib and Seaborn further enhances the interpretability of a dataset.
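On the descriptive-statistics side, a first look at a dataset often starts with calls like these. The column names and values are hypothetical.

```python
import pandas as pd

df = pd.DataFrame(
    {
        "height_cm": [150, 160, 170, 180, 190],
        "group": ["a", "b", "a", "a", "b"],
    }
)

# Central tendency and spread for a numeric column.
stats = df["height_cm"].describe()
median_height = df["height_cm"].median()

# Frequency of each category.
group_counts = df["group"].value_counts()

print(stats)
print(median_height)
print(group_counts)
```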

Visualizing Data with Matplotlib

Matplotlib is a key tool for anyone working with data. It’s widely used for creating static, interactive, and animated visualizations in Python. This library allows users to generate plots like line graphs, bar charts, and scatter plots, making data exploration more intuitive and revealing hidden patterns.

Basic Plotting:

To get started with Matplotlib, import it using import matplotlib.pyplot as plt. Create a simple line plot with plt.plot(x, y) and display it using plt.show(). This function helps in quickly visualizing data trends and relationships.

Customizing Plots:

Matplotlib provides flexibility in customizing plots. Change colors, labels, and styles to improve clarity.

Use the plt.xlabel() and plt.ylabel() functions to add labels to the axes. Titles can be set with plt.title(), and legends can be added using plt.legend().

Data Exploration and Analysis:

Using Matplotlib helps in data exploration by providing visual insights. For instance, a histogram can reveal the distribution of data points. Scatter plots are effective for observing relationships between two variables and can highlight correlations.

Useful Features:

- Create grid layouts with plt.subplots().
- Annotate important points with plt.annotate().
- Explore a range of plot types like pie charts and box plots for comprehensive data analysis.

Matplotlib’s ability to create visuals supports better understanding and communication of data findings. Its integration with other Python libraries enables seamless use in data science projects.
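The plotting and customization calls mentioned in this section can be combined into one short script. This is a sketch under a few assumptions: it selects the non-interactive Agg backend and writes the image to an in-memory buffer so that it runs without a display, whereas an interactive session would typically end with plt.show(). The data is made up.

```python
import io

import matplotlib

matplotlib.use("Agg")  # non-interactive backend, so no display is needed
import matplotlib.pyplot as plt

x = [1, 2, 3, 4]
y = [1, 4, 9, 16]

plt.figure()
plt.plot(x, y, label="y = x squared")
plt.xlabel("x")
plt.ylabel("y")
plt.title("Simple line plot")
plt.annotate(
    "largest value",
    xy=(4, 16),          # the point being annotated
    xytext=(2, 14),      # where the text is placed
    arrowprops={"arrowstyle": "->"},
)
plt.legend()

# Render to an in-memory PNG; use plt.show() instead when working interactively.
buffer = io.BytesIO()
plt.savefig(buffer, format="png")
plt.close()
```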

Input/Output Operations with Pandas

Pandas offers powerful tools for handling data input and output operations. These functionalities are essential for data scientists working with various data files and formats.

Reading Data from Different Sources

Pandas provides a variety of functions to read data from multiple sources, making it a versatile library for data scientists.

The read_csv function is widely used for reading CSV files due to its simplicity and efficiency. Additionally, the read_excel function allows for easy import of data from Excel spreadsheets. This is particularly helpful when handling tabular data common in many business settings.

Apart from CSV and Excel, pandas supports other formats like JSON, HTML, and SQL databases.

By using functions like read_json, read_html, and read_sql, users can import data seamlessly. Pandas I/O tools are built with performance in mind, and options such as chunksize in read_csv let very large files be processed in pieces.

Exporting Data to Various Formats

Exporting data is another critical feature of pandas that aids in sharing and deploying data findings.

The to_csv function facilitates saving data frames to CSV files, ensuring compatibility across different platforms. Data scientists often prefer this format for its simplicity and ease of use.

For those working with spreadsheets, the to_excel function is invaluable. It allows the export of data frames to Excel files, maintaining data integrity and structure.

Pandas also supports exporting to formats like JSON and SQL using functions such as to_json and to_sql. These capabilities make pandas a trustworthy tool for data manipulation and sharing.
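A round trip through two of these formats can be sketched without touching the file system: when no path is given, to_csv() and to_json() return the serialized text, which can then be read back through io.StringIO. With real files, a path would be passed to both the writer and the reader. The data is illustrative.

```python
import io

import pandas as pd

df = pd.DataFrame({"name": ["Alice", "Bob"], "age": [25, 30]})

# With no path argument, these return the serialized text.
csv_text = df.to_csv(index=False)          # index=False omits the row labels
json_text = df.to_json(orient="records")   # one JSON object per row

# Read the text back in to confirm nothing was lost.
csv_round_trip = pd.read_csv(io.StringIO(csv_text))
json_round_trip = pd.read_json(io.StringIO(json_text))

print(csv_text)
print(json_text)
```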

Advanced Topics in Pandas

Understanding advanced concepts in Pandas can greatly enhance a data analyst’s ability to manipulate data efficiently and extract meaningful insights. This section explores correlation and data analysis techniques, followed by tips for improving performance and efficiency.

Correlation and Data Analysis

Pandas offers powerful tools for analyzing relationships between data variables. One of these is the corr() function, which computes the pairwise correlation matrix for the numeric columns of a DataFrame, using the Pearson method by default. This matrix shows the correlation coefficients between different columns.

Correlation coefficients range from -1 to 1. A value close to 1 implies a strong positive correlation, while a value close to -1 suggests a strong negative correlation.

Understanding these relationships can help in predicting outcomes, identifying trends, or detecting anomalies.

For deeper analysis, Pandas can be combined with libraries like NumPy and SciPy to perform more complex statistical operations. This integrated approach allows analysts to streamline workflows and leverage the strengths of each tool.

Data visualization libraries such as Matplotlib and Seaborn can also be used alongside Pandas to visually represent these correlations, making it easier to spot patterns or outliers quickly.
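A small, deliberately artificial example makes the two extremes visible: one column rises exactly in step with x and another falls exactly as x rises.

```python
import pandas as pd

df = pd.DataFrame(
    {
        "x": [1, 2, 3, 4, 5],
        "y_up": [2, 4, 6, 8, 10],     # rises exactly with x
        "y_down": [10, 8, 6, 4, 2],   # falls exactly as x rises
    }
)

# Pairwise Pearson correlation between all columns.
corr_matrix = df.corr()

print(corr_matrix)
```

Real data rarely produces coefficients of exactly 1 or -1; values near 0 indicate little linear relationship.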

Performance and Efficiency Tips

Efficient data processing is crucial for handling large datasets.

In Pandas, performance can be improved through vectorization, which allows Pandas to operate on entire arrays, reducing the need for Python loops.

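Functions like apply() and map() offer a convenient way to run custom logic across data structures, but they typically call a Python function for each element or row, so built-in vectorized methods are usually faster when an equivalent exists. Understanding data types is also key; for instance, using the category data type instead of object for columns with few distinct values can save memory and speed up operations.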

Another tip is to break complex operations into smaller, manageable steps, which helps with debugging and efficiency.

By combining these strategies, data analysts can handle data more swiftly and accurately, reducing processing time significantly.
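The sketch below illustrates two of these tips with invented data. It computes the same result with a vectorized expression and with a row-wise apply(), and it compares the memory footprint of a repetitive text column before and after conversion to the category type. Exact byte counts depend on the pandas version, so only the comparison is shown.

```python
import pandas as pd

df = pd.DataFrame({"price": [2.0, 3.5, 4.0], "quantity": [10, 4, 5]})

# Vectorized: one operation over whole columns.
vectorized = df["price"] * df["quantity"]

# Row-wise apply: same result, but a Python function runs once per row.
row_wise = df.apply(lambda row: row["price"] * row["quantity"], axis=1)

# A text column with only two distinct values, repeated many times.
cities = pd.Series(["Oslo", "Lima"] * 1000)
plain_bytes = cities.memory_usage(deep=True)
category_bytes = cities.astype("category").memory_usage(deep=True)

print(vectorized.tolist(), row_wise.tolist())
print(plain_bytes, category_bytes)
```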

Real-World Applications of Pandas

Pandas is a versatile tool used widely in data science for handling and analyzing data. It offers data structures and functions designed for fast and efficient data manipulation. Data scientists rely heavily on Pandas to clean, transform, and analyze data sets, which makes it an indispensable part of their workflow.

One common application is in data analysis. Pandas allows data scientists to load data from various file formats like CSV, Excel, and SQL databases. It provides tools to filter, sort, and group data, making it easier to extract insights from large datasets.

In the field of finance, Pandas is used to analyze stock market data. It can handle time-series data with its powerful DateTime functions. This assists analysts in performing tasks like calculating moving averages and tracking trends over time.
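As a rough illustration of a moving average on time-series data, the following uses a short series of made-up daily prices with a three-day window.

```python
import pandas as pd

# Five consecutive days of hypothetical prices.
dates = pd.date_range("2024-01-01", periods=5, freq="D")
prices = pd.Series([10.0, 11.0, 12.0, 13.0, 14.0], index=dates)

# Three-day moving average; the first two entries have no full window yet.
moving_avg = prices.rolling(window=3).mean()

# Day-over-day fractional change.
daily_change = prices.pct_change()

print(moving_avg)
print(daily_change)
```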

The healthcare sector uses Pandas to manage patient records and analyze medical data. Pandas helps in identifying patterns and trends in public health data, contributing to better decision-making in healthcare policies.

Pandas’ capabilities extend to exploratory data analysis (EDA), where it helps data scientists visualize data distributions through plotting libraries like Matplotlib. This process is crucial for understanding data characteristics and identifying any anomalies or outliers.

More information on how Pandas is used for real-world scenarios can be found in Hands-On Data Analysis with Pandas.

By providing a streamlined process from data collection to analysis, Pandas remains essential in the toolkit of modern data enthusiasts.

Frequently Asked Questions

Pandas offers powerful tools for data manipulation and analysis, such as Series and DataFrames. Understanding the differences between them, as well as how to efficiently select and manipulate data, is crucial for effective use.

How can I convert a Pandas Series to a DataFrame?

To convert a Pandas Series to a DataFrame, one can use the to_frame() method. This method creates a new DataFrame with the Series as a single column.

For example, series.to_frame(name='column_name') will result in a DataFrame with ‘column_name’ as the column header.
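A complete, runnable version of that call, with illustrative data:

```python
import pandas as pd

series = pd.Series([1, 2, 3], index=["a", "b", "c"])

# The Series becomes the single column of a new DataFrame;
# the index labels are kept as the row labels.
frame = series.to_frame(name="column_name")

print(frame)
```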

What are the key differences between a DataFrame and a Series in Pandas?

A Pandas Series is a one-dimensional labeled array capable of holding data of any type. A DataFrame, on the other hand, is a two-dimensional labeled data structure with columns that can hold different types of data. This makes DataFrames similar to spreadsheets or SQL tables.

How do you select and manipulate data in a DataFrame using index and column labels?

Data selection in a DataFrame can be done using loc[] for label-based indexing and iloc[] for position-based indexing.

Column labels are used to access specific data, while index labels can help in slicing rows. Manipulating data often involves using functions to add, remove, or modify columns and rows.

Can you explain the role of index objects in Pandas and how they are used?

Index objects in Pandas serve as identifiers for Series and DataFrame rows. They allow users to access and manipulate rows efficiently.

Index objects can be reset, set, or modified to ensure data is well-organized. This aids in connecting rows with corresponding data points for seamless data operations.

What are the best practices for indexing and selecting data in Pandas for efficient data manipulation?

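For efficient data manipulation, it is recommended to use boolean indexing and the query() method to filter data, as both operate on whole columns at once and are typically much faster than filtering row by row in a Python loop.

Assignments should go through a single .loc or .iloc call rather than chained indexing such as df[mask]['col'] = value, which may modify a temporary copy instead of the original data.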

Using meaningful index names can also improve code readability and data understanding.
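These practices can be sketched on a small, made-up DataFrame: the same filter written as a boolean mask and as a query() string, followed by an assignment made through a single .loc call.

```python
import pandas as pd

df = pd.DataFrame({"age": [25, 30, 35], "city": ["Oslo", "Lima", "Oslo"]})

# The same filter, written two ways.
mask_result = df[(df["age"] > 26) & (df["city"] == "Oslo")]
query_result = df.query("age > 26 and city == 'Oslo'")

# Assign through one .loc call so the original DataFrame is updated.
df.loc[df["age"] > 26, "city"] = "Rome"

print(mask_result)
print(query_result)
print(df)
```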

How can you efficiently iterate over rows in a Pandas DataFrame or a Series?

To iterate over rows, you can use the iterrows() or itertuples() methods. iterrows() yields index and Series pairs, while itertuples() provides namedtuples. itertuples() is generally faster than iterrows(), because it does not build a Series for every row. However, for performance reasons, it is generally best to use vectorized operations instead of row iteration.
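For comparison, here is the same calculation done with itertuples() and with a vectorized expression, on a tiny illustrative DataFrame.

```python
import pandas as pd

df = pd.DataFrame({"price": [2.0, 3.5], "quantity": [10, 4]})

# Row iteration: each row arrives as a namedtuple with one field per column.
totals_loop = []
for row in df.itertuples(index=False):
    totals_loop.append(row.price * row.quantity)

# Vectorized equivalent: no explicit loop.
totals_vectorized = (df["price"] * df["quantity"]).tolist()

print(totals_loop)
print(totals_vectorized)
```

Both produce the same numbers; on large DataFrames the vectorized form is usually the faster of the two.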