Building an effective data model in Power BI often begins with preparing your data in SQL. Setting a strong foundation in SQL ensures that the transition to Power BI is smoother and more efficient.

Understanding how to manage and organize your data beforehand allows for a seamless integration into Power BI’s features.

A well-prepared SQL database is crucial for creating meaningful insights in Power BI. By organizing data correctly, users can take full advantage of Power BI’s ability to create visual reports and analyses.

With the right setup, data modeling becomes more intuitive, empowering users to leverage their SQL knowledge within Power BI effectively.

1) Understand Data Modeling Basics

Data modeling in Power BI is essential for transforming unorganized data into a structured form. At its core, data modeling involves organizing the data elements, defining their relationships, and creating structures that make data easy to analyze.

Creating a strong data model often starts with identifying the tables and columns that will be used. These tables are usually categorized as either fact tables, which contain measurable data, or dimension tables, which provide context by describing the data in the fact tables.

Learning to distinguish these types is vital for efficiency.

Building relationships between tables is another important aspect. In Power BI, users can create relationships using unique keys that connect different tables. This helps in ensuring data integrity and allows for more dynamic and robust data connections.

Measures and calculated columns are also crucial in data modeling. Measures are calculations that aggregate data and are evaluated at query time, while calculated columns are computed row by row and stored in the table to enhance the analysis.

These features help in deriving insights from complex datasets.

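To optimize performance in Power BI, it’s beneficial to understand cardinality. For a column, cardinality is the number of distinct values it contains; for a relationship, it describes how rows in the two tables match, such as one-to-many. High-cardinality columns compress less effectively in imported models, so removing or simplifying columns that are not needed can reduce model size and improve speed.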
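Column cardinality can be checked in SQL before the data reaches Power BI. The following is a minimal sketch, assuming an in-memory SQLite database (through Python's `sqlite3` module) as a stand-in for SQL Server; the `sales` table, its columns, and the `column_cardinality` helper are hypothetical names used only for illustration.

```python
import sqlite3

# Hypothetical sample table; SQLite stands in for SQL Server here.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (order_id INTEGER, region TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO sales VALUES (?, ?, ?)",
    [(1, "West", 10.0), (2, "West", 25.5), (3, "East", 10.0), (4, "East", 40.0)],
)


def column_cardinality(conn, table, column):
    """Return the number of distinct non-NULL values in a column."""
    # Identifiers are interpolated directly, so only pass trusted names.
    sql = f'SELECT COUNT(DISTINCT "{column}") FROM "{table}"'
    return conn.execute(sql).fetchone()[0]


cardinalities = {
    col: column_cardinality(conn, "sales", col)
    for col in ("order_id", "region", "amount")
}
print(cardinalities)
```

Columns whose distinct count approaches the row count, such as `order_id` here, are the high-cardinality ones worth reviewing before import.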

2) Identify Key Power BI Features

Power BI offers various features that help users transform raw data into insightful analytics. One essential feature is the ability to design semantic models. These models allow users to create a structured framework to enhance data analysis and reporting.

Another key feature is the use of DAX (Data Analysis Expressions) formulas. These formulas let users create custom calculations such as measures and calculated columns. This capability is crucial for building dynamic and flexible reports.

Power BI supports data modeling techniques such as the star schema. This structure organizes data into fact and dimension tables, enhancing the clarity and performance of data models. It simplifies complex databases into easy-to-understand reports.

Integrating data from multiple sources is another significant feature. Power BI can connect to various data sources, allowing users to combine data into a single, cohesive report. This integration is vital for comprehensive business analysis and decision-making.

Additionally, Power BI provides tools for data visualization. Users can create a variety of charts, graphs, and dashboards that present data in an easily digestible format. These visual tools help stakeholders quickly grasp important information and trends.

Lastly, Power BI offers real-time data monitoring capabilities. With this feature, users can access up-to-date information, enabling timely responses to business changes. Real-time insights can boost operational efficiency and strategic planning.

3) Optimize SQL Queries

Optimizing SQL queries is crucial for better performance in Power BI. Slow queries can impact the overall efficiency of data processing.

Start by selecting only the necessary columns. Avoid using “SELECT *” as it retrieves more data than needed, increasing query time. Instead, specify the columns that are essential for the report.

Implement indexing to improve query performance. Indexes help the database quickly locate and retrieve data without scanning entire tables. This is particularly useful for large datasets.

Use joins wisely. Properly structured joins speed up data retrieval. Ensure that joins are based on indexed columns for faster data access. Use INNER JOINs when unmatched rows are not needed; they often give the optimizer more freedom than OUTER JOINs, although the actual difference depends on the data and the query plan.

Apply filtering early in the query. Using WHERE clauses to filter data as soon as possible reduces the number of rows that need to be processed. This not only makes the query faster but also decreases the load on the database server.

Consider aggregating data within the SQL query. Reducing the amount of data that needs to be transferred to Power BI can significantly enhance performance. Use functions like SUM, COUNT, or AVG to create summary tables or datasets.
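The three ideas above (naming only the needed columns, filtering early, and aggregating in the query) can be combined in one statement. This is a sketch under stated assumptions: SQLite via Python's `sqlite3` stands in for SQL Server, and the `orders` table and its columns are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (order_id INTEGER PRIMARY KEY, region TEXT, "
    "order_date TEXT, amount REAL, notes TEXT)"
)
conn.executemany(
    "INSERT INTO orders (region, order_date, amount, notes) VALUES (?, ?, ?, ?)",
    [
        ("West", "2023-12-30", 50.0, "old"),
        ("West", "2024-01-05", 100.0, ""),
        ("West", "2024-02-10", 20.0, ""),
        ("East", "2024-01-20", 70.0, ""),
    ],
)

# Name only the needed columns (no SELECT *), filter with WHERE before
# grouping, and return one summary row per region instead of every order.
summary_sql = """
    SELECT region,
           COUNT(*)    AS order_count,
           SUM(amount) AS total_amount
    FROM orders
    WHERE order_date >= ?
    GROUP BY region
    ORDER BY region
"""
summary = conn.execute(summary_sql, ("2024-01-01",)).fetchall()
print(summary)
```

The unused `notes` column never leaves the database, and the 2023 row is discarded before aggregation.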

If working with complex queries, consider breaking them down into simpler parts. This can make optimization easier and debugging more straightforward.

Monitoring query performance is also important. Regularly analyze query execution plans to identify bottlenecks and detect any inefficiencies. Tools like SQL Server Management Studio provide insights into query performance, helping to make informed optimization decisions.
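Reading an execution plan before and after a change is a quick way to confirm that an optimization took effect. The sketch below uses SQLite's `EXPLAIN QUERY PLAN` through Python's `sqlite3` as a stand-in; SQL Server exposes its plans differently (for example through SQL Server Management Studio), and the table, index, and `plan_details` helper are hypothetical names.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE sales (sale_id INTEGER PRIMARY KEY, customer_id INTEGER, amount REAL)"
)
conn.executemany(
    "INSERT INTO sales (customer_id, amount) VALUES (?, ?)",
    [(i % 50, float(i)) for i in range(1000)],
)


def plan_details(conn, sql):
    """Return the detail text of each step in SQLite's query plan."""
    # Each EXPLAIN QUERY PLAN row has four columns; the last is the description.
    return [row[3] for row in conn.execute("EXPLAIN QUERY PLAN " + sql)]


query = "SELECT sale_id, amount FROM sales WHERE customer_id = 7"

plan_before = plan_details(conn, query)   # no usable index: full table scan
conn.execute("CREATE INDEX idx_sales_customer ON sales (customer_id)")
plan_after = plan_details(conn, query)    # index on the filter column is used

print(plan_before)
print(plan_after)
```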

4) Data normalization in SQL

Data normalization in SQL is a method used to organize databases. This process removes redundant data and maintains data integrity, making databases more efficient. By structuring data into tables with unique and precise relationships, users ensure data consistency.

Normalization uses normal forms, which are rules designed to reduce duplication. The process starts with the first normal form (1NF) and progresses to more advanced forms like the fifth normal form (5NF). Each step aims to eliminate redundancy and improve data quality.

The first normal form (1NF) requires each table column to contain atomic values. It also ensures that each table row is unique. When a database meets these conditions, it avoids repeating groups and ensures data is straightforward.

Achieving the second normal form (2NF) involves eliminating partial dependencies. This means every non-prime attribute must be fully functionally dependent on the whole primary key, not on just part of a composite key. This step further reduces redundancy.

The third normal form (3NF) focuses on removing transitive dependencies. A non-prime attribute shouldn’t depend on another non-prime attribute. This step keeps data relationships clear and precise.
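As an illustration of removing a transitive dependency, the sketch below splits a flat table in which `customer_city` depends on `customer_id` rather than on the order itself. It assumes SQLite via Python's `sqlite3` as a stand-in for SQL Server, and all table and column names are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    -- Flat table: order_id -> customer_id -> customer_city (transitive).
    CREATE TABLE orders_flat (
        order_id      INTEGER PRIMARY KEY,
        customer_id   INTEGER,
        customer_city TEXT,
        amount        REAL
    );
    INSERT INTO orders_flat VALUES
        (1, 10, 'Oslo', 20.0),
        (2, 10, 'Oslo', 35.0),
        (3, 11, 'Lima', 15.0);

    -- 3NF split: the city is stored once per customer.
    CREATE TABLE customers (
        customer_id INTEGER PRIMARY KEY,
        city        TEXT NOT NULL
    );
    CREATE TABLE orders (
        order_id    INTEGER PRIMARY KEY,
        customer_id INTEGER NOT NULL REFERENCES customers (customer_id),
        amount      REAL NOT NULL
    );
    INSERT INTO customers SELECT DISTINCT customer_id, customer_city FROM orders_flat;
    INSERT INTO orders SELECT order_id, customer_id, amount FROM orders_flat;
""")

customer_rows = conn.execute("SELECT COUNT(*) FROM customers").fetchone()[0]
order_rows = conn.execute("SELECT COUNT(*) FROM orders").fetchone()[0]
print(customer_rows, order_rows)
```

After the split, changing a customer's city touches one row in `customers` instead of every matching order.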

Normalization also helps during the data transformation process in Power BI. Using normalized data makes it easier to prepare models. Well-structured data allows for better performance and accurate reporting.

Understanding and applying normalization techniques is vital for efficient database design. It prepares SQL data for smoother transitions into platforms like Power BI. Proper normalization leads to databases that are consistent, dependable, and easy to manage.

5) Design star schema in Power BI

Designing a star schema in Power BI is a key step for creating efficient data models. A star schema includes a central fact table connected to dimension tables. This layout allows for efficient querying and reporting. The fact table contains measurable, quantitative data while dimension tables store descriptive attributes related to the data in the fact table.
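A small star schema can be sketched on the SQL side as one fact table with foreign keys to two dimension tables. This example assumes SQLite via Python's `sqlite3` as a stand-in for SQL Server; `fact_sales`, `dim_product`, `dim_date`, and their columns are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE dim_product (
        product_key INTEGER PRIMARY KEY,
        category    TEXT NOT NULL
    );
    CREATE TABLE dim_date (
        date_key INTEGER PRIMARY KEY,   -- e.g. 20240105
        year     INTEGER NOT NULL
    );
    -- Fact table: numeric measures plus one key per dimension.
    CREATE TABLE fact_sales (
        product_key INTEGER NOT NULL REFERENCES dim_product (product_key),
        date_key    INTEGER NOT NULL REFERENCES dim_date (date_key),
        quantity    INTEGER NOT NULL,
        amount      REAL NOT NULL
    );
    INSERT INTO dim_product VALUES (1, 'Bikes'), (2, 'Helmets');
    INSERT INTO dim_date VALUES (20240105, 2024), (20250110, 2025);
    INSERT INTO fact_sales VALUES
        (1, 20240105, 2, 900.0),
        (2, 20240105, 1, 40.0),
        (1, 20250110, 1, 450.0);
""")

# Typical star-schema query: aggregate the fact, slice by dimension attributes.
sales_by_category_year = conn.execute("""
    SELECT p.category, d.year, SUM(f.amount) AS total_amount
    FROM fact_sales AS f
    JOIN dim_product AS p ON p.product_key = f.product_key
    JOIN dim_date    AS d ON d.date_key    = f.date_key
    GROUP BY p.category, d.year
    ORDER BY p.category, d.year
""").fetchall()
print(sales_by_category_year)
```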

Using a star schema improves performance because it simplifies complex queries. Instead of handling many complex joins, Power BI can pull data from clear links between fact and dimension tables. This leads to faster data retrieval and helps in building more responsive reports, enhancing user experience significantly.

In Power BI, implementing a star schema involves loading the fact and dimension tables through Power Query and then defining relationships between them in the model view, either automatically detected or created manually. Establishing clear relationships between tables is crucial. Users should ensure referential integrity, where every value in a fact table’s key column matches a value in the key column of the related dimension table.
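One way to check referential integrity before loading is to look for fact rows whose key has no match in the dimension table. The following is a minimal sketch, assuming SQLite via Python's `sqlite3` as a stand-in for SQL Server and hypothetical `fact_sales` and `dim_customer` tables.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE dim_customer (customer_key INTEGER PRIMARY KEY, name TEXT);
    CREATE TABLE fact_sales (
        sale_id      INTEGER PRIMARY KEY,
        customer_key INTEGER,
        amount       REAL
    );
    INSERT INTO dim_customer VALUES (1, 'Ada'), (2, 'Grace');
    -- customer_key 3 has no matching dimension row.
    INSERT INTO fact_sales VALUES (100, 1, 10.0), (101, 2, 20.0), (102, 3, 30.0);
""")

# LEFT JOIN keeps every fact row; a NULL dimension key marks an orphan.
orphan_keys = [row[0] for row in conn.execute("""
    SELECT DISTINCT f.customer_key
    FROM fact_sales AS f
    LEFT JOIN dim_customer AS d ON d.customer_key = f.customer_key
    WHERE d.customer_key IS NULL
    ORDER BY f.customer_key
""")]
print(orphan_keys)
```

An empty result means every fact key resolves to a dimension row; any keys returned should be fixed or mapped to an "unknown" dimension member before modeling.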

Choosing the right granularity level is another important aspect. Granularity refers to the level of detail in the fact table. Matching the granularity to the business needs allows for more accurate and meaningful analysis. Power BI users should consider typical queries and reports they’re aiming to create when deciding on the proper granularity.

Creating a star schema offers clear advantages for Power BI semantic models. It provides an intuitive way to analyze data, enabling users to focus on specific business elements and gain actionable insights. Proper implementation of star schemas supports better data organization and accessibility, which is crucial for efficient and clear data modeling and reporting.

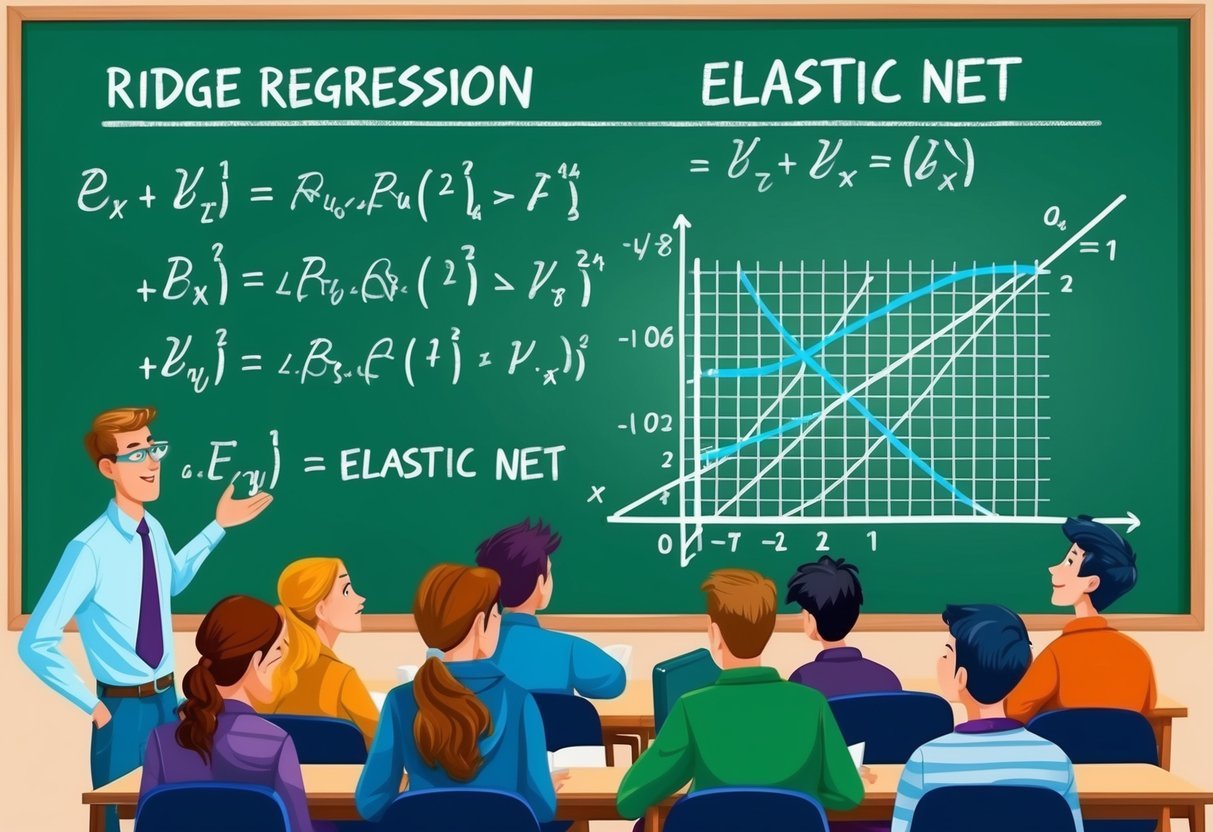

6) Use DAX for Calculations

In Power BI, Data Analysis Expressions (DAX) is a powerful tool used for creating custom calculations. It allows users to make data models dynamic and insightful.

DAX can be used in measures, calculated columns, and tables, enhancing how data is analyzed.

DAX formulas resemble Excel formulas but are designed for relational data models. This means they allow users to perform complex calculations across related tables.

DAX helps in creating measures that can summarize and interpret data within Power BI environments effectively.

DAX offers functions for statistical, logical, text, and mathematical operations. These functions help in carrying out various tasks, such as aggregating data, filtering results, and executing conditional calculations. Understanding these functions can greatly improve one’s ability to analyze large datasets.

Using DAX within Power BI allows users to build semantic models. These models support deeper analysis through the relationships between tables and data elements. This is crucial for creating meaningful insights from complex datasets.

Applying DAX requires understanding the concept of context. Row context and filter context are essential aspects that influence how formulas calculate results.

For instance, row context evaluates data row by row, while filter context applies a broader filter across the data set.
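The distinction can be pictured with a loose analogy in Python. This is not DAX and does not reproduce how the DAX engine works; it only mirrors the idea that a calculated column is evaluated once per row, while a measure aggregates whichever rows the current filters leave visible. The `Sale` class and `total_amount` function are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sale:
    region: str
    quantity: int
    unit_price: float


sales = [Sale("West", 2, 10.0), Sale("West", 1, 5.0), Sale("East", 3, 10.0)]

# Row-context analogue: like a calculated column, evaluated once per row.
line_amounts = [s.quantity * s.unit_price for s in sales]


def total_amount(rows, region=None):
    """Filter-context analogue: aggregate only rows that pass the active filter."""
    visible = [r for r in rows if region is None or r.region == region]
    return sum(r.quantity * r.unit_price for r in visible)


total_all = total_amount(sales)                  # no filter applied
total_west = total_amount(sales, region="West")  # as if a slicer selected West
print(line_amounts, total_all, total_west)
```

The same aggregation returns different totals depending on the filter, which is the behaviour a measure shows when a report user changes a slicer.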

Learning DAX through practice and real-world application can make the process more intuitive. The Microsoft DAX overview page provides useful tutorials and examples to help users get started with DAX calculations.

7) Implement ETL processes

ETL stands for Extract, Transform, Load. It’s a key process for handling data in Power BI. In this process, data is taken from various sources, changed into a suitable format, and finally loaded into Power BI for analysis.

It’s important to properly set up ETL to ensure data accuracy and efficiency.

Power BI uses tools like Power Query for this task. Power Query allows users to extract data from sources like databases, spreadsheets, and online services. During extraction, it’s crucial to connect to each data source accurately, setting up proper authentication and permissions for access.

In the transformation stage, data is cleaned, reordered, and formatted. Tasks include removing duplicates, changing data types, and combining data from different sources.

Efficient transformation ensures data is ready for analysis and visualization. This prevents errors and helps in creating accurate reports and dashboards.
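In Power Query these steps are written in M, which is not shown here. When the source is a SQL database, the same kinds of transformation can also be pushed upstream into SQL. The sketch below removes duplicates, trims and standardizes text, and converts data types, assuming SQLite via Python's `sqlite3` as a stand-in for SQL Server and hypothetical `staging_orders` and `clean_orders` tables.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    -- Staging data arrives as untyped text, with a duplicate row.
    CREATE TABLE staging_orders (order_id TEXT, region TEXT, amount TEXT);
    INSERT INTO staging_orders VALUES
        ('1', ' west ', '10.50'),
        ('1', ' west ', '10.50'),
        ('2', 'EAST',   '7');

    -- Clean copy: typed columns, standardized text, duplicates removed.
    CREATE TABLE clean_orders AS
    SELECT DISTINCT
        CAST(order_id AS INTEGER) AS order_id,
        UPPER(TRIM(region))       AS region,
        CAST(amount AS REAL)      AS amount
    FROM staging_orders;
""")

clean_rows = conn.execute(
    "SELECT order_id, region, amount FROM clean_orders ORDER BY order_id"
).fetchall()
print(clean_rows)
```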

Loading is the final part, where data is imported into Power BI. It’s important to determine the refresh schedule and method, such as manual or automatic updates.

Proper loading keeps the reports current, aiding in timely business decision-making.

ETL processes benefit from proper planning and execution. Before implementing, understanding the data structure and business needs is vital.

Developing a clear ETL strategy reduces errors and increases data-driven insights.

For further reading on how ETL is applied in Power BI, check out resources like ETL with Power BI. These guides explain the practical aspects of setting up ETL processes using Power BI tools.

8) Monitor Power BI performance

Monitoring Power BI performance is essential to ensure that reports and dashboards run smoothly.

One effective way is to use the Query Diagnostics tool. This tool allows users to see what Power Query is doing during query preview and application.

Understanding these details can help in identifying and resolving bottlenecks in the data processing step.

Using the Performance Analyzer within Power BI Desktop is another useful method. It helps track the time taken by each visual to render.

Users can identify slow-performing visuals and focus on optimizing them. This can significantly improve the user experience by reducing loading times and enhancing the overall efficiency of reports.

Power BI also benefits from external tools like the SQL Server Profiler. This tool is particularly useful if reports are connected via DirectQuery or Live Connection.

It helps in measuring the performance of these connections and identifying network or server issues that might affect performance.

Optimization should not be limited to the design phase. It’s also crucial to monitor performance after deployment, especially in environments using Power BI Premium.

This can ensure that the reports continue to perform well under different workloads and conditions.

Finally, reviewing metrics and KPIs in Power BI can provide insights into report performance. Using metrics helps maintain high data quality and integration with complex models across the organization, as seen in guidance on using metrics with Power BI.

Properly monitored metrics lead to more accurate and reliable business insights.

9) SQL Indexing Strategies

SQL indexing is crucial for improving the performance of databases, especially when integrating with tools like Power BI. Proper indexing can speed up data retrieval, making queries faster and more efficient.

One key strategy is using clustered indexes. These indexes rearrange the data rows in the table to match the order of the index. It’s beneficial when data retrieval requires accessing large amounts of ordered data.

Non-clustered indexes are another effective approach. They store the indexed columns in a separate structure, together with a pointer back to each table row, for quick look-up. This can be useful when frequent searches are performed on non-primary key columns.

Careful selection of columns for non-clustered indexing is important for optimizing performance.

Covering indexes can significantly boost query performance. They include all columns referenced in a query. This means the database engine can retrieve the needed data directly from the index without looking at the actual table itself.
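Whether an index covers a query can be confirmed from the query plan. The sketch below uses SQLite via Python's `sqlite3` as a stand-in, where a multi-column index covers a query that reads only indexed columns; in SQL Server, extra columns are often added to a non-clustered index with an `INCLUDE` clause instead. The table, index, and `plan_details` helper are hypothetical names.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE sales (sale_id INTEGER PRIMARY KEY, customer_id INTEGER, "
    "amount REAL, notes TEXT)"
)
# Multi-column index holding both the filter column and the selected column.
conn.execute("CREATE INDEX idx_sales_customer_amount ON sales (customer_id, amount)")


def plan_details(conn, sql):
    """Return the detail text of each step in SQLite's query plan."""
    return [row[3] for row in conn.execute("EXPLAIN QUERY PLAN " + sql)]


# Every referenced column is in the index, so the table is never read.
covered_plan = plan_details(
    conn, "SELECT customer_id, amount FROM sales WHERE customer_id = 7"
)
# 'notes' is not in the index, so each match needs a lookup in the table.
uncovered_plan = plan_details(
    conn, "SELECT customer_id, notes FROM sales WHERE customer_id = 7"
)
print(covered_plan)
print(uncovered_plan)
```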

Another technique involves using filtered indexes. These indexes apply to a portion of the data, instead of the entire table. They are beneficial for queries that frequently filter data based on specific criteria.
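The following sketch shows an index restricted to open orders. It assumes SQLite via Python's `sqlite3` as a stand-in; SQLite calls these partial indexes, and SQL Server's filtered indexes likewise take a `WHERE` clause in `CREATE INDEX`, though details differ between engines. The table, index, and `plan_details` helper are hypothetical names.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (order_id INTEGER PRIMARY KEY, customer_id INTEGER, status TEXT)"
)
# Only rows with status = 'open' are stored in this index.
conn.execute(
    "CREATE INDEX idx_open_orders ON orders (customer_id) WHERE status = 'open'"
)


def plan_details(conn, sql):
    """Return the detail text of each step in SQLite's query plan."""
    return [row[3] for row in conn.execute("EXPLAIN QUERY PLAN " + sql)]


# The query repeats the index's condition, so the index is usable.
open_plan = plan_details(
    conn, "SELECT order_id FROM orders WHERE status = 'open' AND customer_id = 5"
)
# Closed orders are not in the index, so it cannot serve this query.
closed_plan = plan_details(
    conn, "SELECT order_id FROM orders WHERE status = 'closed' AND customer_id = 5"
)
print(open_plan)
print(closed_plan)
```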

Regular index maintenance is vital for performance. Over time, indexes can become fragmented due to data modifications. Scheduled maintenance tasks should reorganize or rebuild indexes to ensure they remain fast and efficient.

For complex queries, using composite indexes may be advantageous. These indexes consist of multiple columns, providing an efficient way to retrieve data that is filtered by several columns at once.

10) Secure data access in Power BI

Securing data in Power BI is crucial to protect sensitive information. Power BI offers several features to maintain data security, including row-level security (RLS) and data loss prevention (DLP) policies.

RLS restricts access to specific data for certain users by creating filters within roles. This ensures that users only see the data they are authorized to access. Roles can be defined on models that import data as well as on models connected via DirectQuery.

DLP policies help organizations protect sensitive data by enforcing security measures across Power BI. These policies can identify and manage sensitive info types and sensitivity labels on semantic models, automatically triggering risk management actions when needed. Microsoft 365 tools integrate with Power BI to implement these measures effectively.

To enhance security further, Power BI supports object-level security (OLS) and column-level security. These features allow administrators to control access to specific objects or columns within a data model. This level of detail provides companies with the flexibility to meet complex security requirements.

For organizations that regularly work with SQL Server data, it’s important to use best practices for secure data access and user authentication.

Ensuring proper integration and secure connections helps maintain the integrity and privacy of data while it’s processed in Power BI.

Understanding Data Models in Power BI

Data modeling in Power BI is crucial for transforming raw data into meaningful insights. This involves organizing data, creating relationships, and defining calculations that enhance analysis and visualization.

Importance of Data Modeling

Effective data modeling is key to making data analysis efficient and reliable. By creating structured data models, users can ensure data accuracy and improve query performance. Models also help in simplifying complex datasets, allowing users to focus on analysis rather than data cleanup.

Proper data modeling supports better decision-making by providing clear insights. When designed well, models can enhance the speed of data retrieval, enable easier report creation, and ensure that business logic is consistently applied across analyses. This ultimately leads to more accurate and meaningful reports.

A well-structured data model also makes it easier to manage and update datasets. It helps in organizing large amounts of data from multiple sources, ensuring that updates or changes to the data are reflected accurately throughout the Power BI reports.

Components of a Power BI Model

The main components of a Power BI model include tables, relationships, measures, and columns. Tables organize data into rows and columns, helping users visualize data more clearly. Dataquest explains how defining dimensions and fact tables creates an effective structure.

Relationships in a model connect different tables, allowing for integrated analysis. These relationships define how data points correlate and aggregate, facilitating advanced analysis. Measures and calculated columns provide dynamic data calculations, unlocking deeper insights.

Calculated tables and other elements enable complex scenarios and expand analytical capabilities. These components help users build comprehensive models that support diverse reporting needs, as Microsoft Learn suggests.

Through these elements, users can enhance the functionality and interactivity of Power BI reports.

Preparing your Data in SQL

Preparing data in SQL for Power BI involves following best practices to ensure data is clean, well-organized, and ready for analysis. Transforming data effectively in SQL helps optimize performance and simplifies integration with Power BI models.

SQL Best Practices for Power BI

When preparing data for Power BI, adhering to best practices in SQL is crucial.

Start by ensuring data integrity through primary and foreign keys. Use indexes to speed up query performance but maintain a balance as too many indexes can slow down write operations.

Normalization helps eliminate redundancy, promoting data consistency. However, avoid over-normalization, which can lead to complex queries. Constraints and, where necessary, triggers can reject invalid or conflicting rows and so maintain data accuracy. Use views to simplify data access and enhance security.
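A view can expose only what a report needs while keeping sensitive columns in the base table. This is a sketch assuming SQLite via Python's `sqlite3` as a stand-in; SQLite has no user permissions, so the step of granting access to the view rather than the table, as one would typically do in SQL Server, is not shown. The `employees` table and `v_department_payroll` view are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE employees (
        employee_id INTEGER PRIMARY KEY,
        department  TEXT NOT NULL,
        salary      REAL NOT NULL,
        national_id TEXT NOT NULL      -- sensitive; kept out of the view
    );
    INSERT INTO employees VALUES
        (1, 'Sales', 50000.0, 'X1'),
        (2, 'Sales', 70000.0, 'X2'),
        (3, 'IT',    65000.0, 'X3');

    -- The view returns department totals only, never individual rows.
    CREATE VIEW v_department_payroll AS
    SELECT department,
           COUNT(*)    AS headcount,
           SUM(salary) AS total_salary
    FROM employees
    GROUP BY department;
""")

cursor = conn.execute("SELECT * FROM v_department_payroll ORDER BY department")
view_columns = [col[0] for col in cursor.description]
view_rows = cursor.fetchall()
print(view_columns, view_rows)
```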

Consider the storage and retrieval needs of your data. Partition large tables for better query performance. Ensure you have up-to-date database statistics for SQL query optimization. Regularly back up your SQL databases to prevent data loss.

Transforming Data for Analysis

Transforming data in SQL involves shaping it for analytical purposes.

Use SQL transformations to clean and format data. String functions, case statements, and date formatting can standardize values, making them easier to analyze in Power BI.
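The sketch below standardizes a text column, derives a month from a timestamp, and bands a numeric value with a CASE expression. It assumes SQLite via Python's `sqlite3` as a stand-in; `strftime` is SQLite's date formatting function, and SQL Server uses different date functions for the same purpose. The `raw_orders` table and its columns are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE raw_orders (
        order_id   INTEGER,
        status     TEXT,
        ordered_at TEXT,
        amount     REAL
    );
    INSERT INTO raw_orders VALUES
        (1, ' shipped ', '2024-01-05 14:30:00', 250.0),
        (2, 'PENDING',   '2024-02-17 09:00:00', 40.0);
""")

standardized = conn.execute("""
    SELECT order_id,
           LOWER(TRIM(status))           AS status,       -- string functions
           strftime('%Y-%m', ordered_at) AS order_month,  -- date formatting
           CASE WHEN amount >= 100 THEN 'large'
                ELSE 'small'
           END                           AS size_band     -- CASE expression
    FROM raw_orders
    ORDER BY order_id
""").fetchall()
print(standardized)
```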

Aggregations and summarizations can pre-calculate necessary metrics. Creating summary tables can reduce the load on Power BI, making reports faster and more responsive. These transformations are crucial for supporting Power BI’s DAX calculations and improving report performance.

Furthermore, take advantage of built-in SQL functions to manage data types and conversions.

Prepare data structures that align with the star schema, if possible, making it easier to set up in Power BI. This approach leads to efficient data models and reliable reporting.

Frequently Asked Questions

Incorporating SQL with Power BI can enhance data handling and visualization greatly. Understanding the interaction between SQL and Power BI helps in setting up efficient models and ensuring smooth data connectivity and transformation.

How do you write and integrate SQL queries within Power BI Desktop?

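SQL queries can be written directly in Power BI Desktop: when connecting to a SQL Server database, expand the advanced options in the connection dialog and enter a SQL statement. The result can then be shaped further in the Power Query Editor.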

Users can customize the SQL code to fetch specific data. This approach enhances the ability to control data size and complexity before importing into Power BI for visualization.

What are the best practices for connecting Power BI with a SQL Server without using a gateway?

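Power BI Desktop connects to SQL Server directly, so no gateway is needed while building a report as long as the machine can reach the server, for example on the same network or over a VPN. Once a report is published, the Power BI service generally needs an on-premises data gateway to reach an on-premises SQL Server, whereas cloud-hosted databases such as Azure SQL Database can usually be reached without one.

DirectQuery mode sends queries to the source when a report is viewed instead of importing a copy of the data, so results reflect the current state of the database and the data stays in the source system.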

What steps are involved in connecting Power BI to a SQL Server using Windows authentication?

Connecting Power BI to SQL Server using Windows authentication involves selecting the data source, logging in using Windows credentials, and configuring the settings to authenticate automatically.

This leverages existing user credentials for secure and seamless access to data.

How to optimally extract and transform data using Power Query for Power BI?

Power Query is essential for data extraction and transformation.

Users can shape their data by filtering, sorting, and merging queries. It simplifies the process to prepare clean, structured data sets, ready for use in Power BI’s visualization tools.

Is it beneficial to learn SQL prior to mastering Power BI, and why?

Learning SQL can provide a significant advantage when using Power BI.

SQL helps in understanding database structure and how to write queries that can optimize data extraction and transformation. This foundation supports more efficient and powerful data models in Power BI.

What are the essential steps to set up an effective data model in Power BI?

Setting up a data model in Power BI involves identifying key tables and relationships. Then, you need to design a logical model like a star schema. Lastly, optimize columns and measures. This structure allows for easy navigation and faster, more accurate data analysis.