Understanding Scikit-Learn and Its Ecosystem

Scikit-Learn is a crucial library in the Python machine learning environment, offering integration with tools like NumPy, SciPy, and Pandas to enhance data analysis and modeling efficiency.

These connections allow for powerful data manipulation and efficient execution of mathematical operations within a single workflow.

Origins of Scikit-Learn

Scikit-Learn originated as a Google Summer of Code project in 2007 with initial contributions by David Cournapeau. It belongs to the broader SciPy ecosystem and was officially launched in 2010.

Originally designed to be a versatile tool, it focuses on providing accessible and efficient machine learning methodologies in Python. Over the years, it has become a staple for data scientists and researchers due to its robust set of algorithms and ease of use. Its open-source nature encourages contribution and improvement from developers all over the world.

Integrating Scikit-Learn with NumPy and SciPy

Scikit-Learn integrates smoothly with NumPy and SciPy, which are fundamental libraries for scientific computing in Python. NumPy provides powerful operations on large, multi-dimensional arrays and matrices, while SciPy offers modules for optimization, integration, and statistics.

Together, they enable Scikit-Learn to handle complex data operations efficiently. This integration allows for rapid prototyping of machine learning models, leveraging NumPy’s array-processing features and SciPy’s numerics.

Users can perform advanced computations easily, making Scikit-Learn a reliable choice for building scalable, high-performance machine learning applications.
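As a rough illustration of this integration, the sketch below fits the same classifier on a NumPy array and on a SciPy sparse matrix. The tiny dataset and the choice of LogisticRegression are assumptions made for the example, not something the article prescribes.

```python
import numpy as np
from scipy import sparse
from sklearn.linear_model import LogisticRegression

# Illustrative data: 6 samples, 2 features.
X_dense = np.array([[0.0, 1.0], [0.0, 2.0], [1.0, 0.0],
                    [2.0, 0.0], [0.0, 3.0], [3.0, 0.0]])
y = np.array([0, 0, 1, 1, 0, 1])

# The same data stored as a SciPy CSR sparse matrix.
X_sparse = sparse.csr_matrix(X_dense)

# The estimator accepts either container.
clf_dense = LogisticRegression().fit(X_dense, y)
clf_sparse = LogisticRegression().fit(X_sparse, y)

pred_dense = clf_dense.predict(X_dense)
pred_sparse = clf_sparse.predict(X_sparse)
print(pred_dense, pred_sparse)
```

Sparse input is mostly useful when the data contains many zeros, such as one-hot encoded or text features.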

Role of Pandas in Data Handling

Pandas plays an essential role in preprocessing and handling data for Scikit-Learn. Its powerful DataFrame object allows users to manage and transform datasets with ease.

With functions for filtering, aggregating, and cleaning data, Pandas complements Scikit-Learn by preparing datasets for analysis. Utilizing Pandas, data scientists can ensure that features are appropriately formatted and that any missing values are addressed.

This preprocessing is crucial before applying machine learning algorithms, ensuring accuracy and reliability in model predictions. By integrating these libraries, users can create seamless and efficient data workflows from start to finish.
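The following sketch shows one possible way to clean a DataFrame before handing it to a model. The column names and values are hypothetical, and filling gaps with the median is only one of several reasonable strategies.

```python
import numpy as np
import pandas as pd
from sklearn.linear_model import LinearRegression

# Hypothetical toy data; column names are made up for illustration.
df = pd.DataFrame({
    "rooms": [2, 3, np.nan, 4, 5],
    "area": [50.0, 70.0, 65.0, np.nan, 120.0],
    "price": [100.0, 150.0, 140.0, 180.0, 260.0],
})

# Address missing values by filling each feature column with its median.
features = df[["rooms", "area"]]
features = features.fillna(features.median())

# The cleaned DataFrame can be passed straight to an estimator.
model = LinearRegression().fit(features, df["price"])
predictions = model.predict(features)
print(features)
```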

Basics of Machine Learning Concepts

Machine learning involves teaching computers to learn patterns from data. Understanding its core concepts is crucial. This section focuses on different learning types, predicting outcomes, and working with data.

Scikit-Learn, a popular Python library, provides tools for working with each of these concepts.

Supervised vs. Unsupervised Learning

Supervised learning involves models that are trained with labeled data. Each input comes with an output, which helps the model learn the relationship between the two.

This method is often used for tasks like email filtering and fraud detection, where historical examples with known outcomes are available for the model to learn from.

In contrast, unsupervised learning works with data that has no labels. The model attempts to find patterns or groupings on its own.

This approach is useful for clustering tasks, like grouping customers based on buying patterns. Both methods form the backbone of machine learning.
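To make the contrast concrete, here is a small sketch on synthetic data. The dataset from make_blobs and the two chosen models are assumptions for illustration; the point is only that the supervised model receives labels while the clustering model does not.

```python
from sklearn.cluster import KMeans
from sklearn.datasets import make_blobs
from sklearn.linear_model import LogisticRegression

# Synthetic data: 150 points drawn around 3 centers.
X, y = make_blobs(n_samples=150, centers=3, cluster_std=0.5, random_state=0)

# Supervised: the labels y are passed to fit().
classifier = LogisticRegression(max_iter=1000).fit(X, y)

# Unsupervised: only X is passed; the model looks for groupings itself.
clusterer = KMeans(n_clusters=3, n_init=10, random_state=0).fit(X)

print(classifier.predict(X[:5]))
print(clusterer.labels_[:5])  # cluster ids are arbitrary, not class labels
```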

Understanding Classification and Regression

Classification refers to the process of predicting the category of given data points. It deals with discrete outcomes, like determining if an email is spam or not.

Tools such as decision trees and support vector machines handle these tasks effectively.

On the other hand, regression aims to predict continuous outcomes. It deals with real-valued numbers, like predicting house prices based on features.

Common algorithms include linear regression and regression trees. Both techniques are vital for different types of predictive modeling.
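A brief sketch of the difference in outputs, assuming the Iris and Diabetes datasets bundled with scikit-learn as stand-ins for a classification and a regression problem:

```python
from sklearn.datasets import load_diabetes, load_iris
from sklearn.linear_model import LinearRegression
from sklearn.tree import DecisionTreeClassifier

# Classification: the prediction is a discrete class id.
X_cls, y_cls = load_iris(return_X_y=True)
classifier = DecisionTreeClassifier(random_state=0).fit(X_cls, y_cls)
class_predictions = classifier.predict(X_cls[:3])

# Regression: the prediction is a real-valued number.
X_reg, y_reg = load_diabetes(return_X_y=True)
regressor = LinearRegression().fit(X_reg, y_reg)
value_predictions = regressor.predict(X_reg[:3])

print(class_predictions)
print(value_predictions)
```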

Features, Labels, and Target Values

Features are the input variables used in machine learning models. These can be anything from age and gender to income levels, depending on the problem.

Labels are the outcomes for each feature set, serving as the “answer key” during training.

In supervised learning, these outcomes are known, allowing the model to learn which features impact the result. Target values, often referred to in regression, are the data points the model attempts to predict.

Understanding how features, labels, and target values interact is essential for effective modeling. Emphasizing precise selection helps enhance model accuracy.

Essential Machine Learning Algorithms

This section focuses on vital machine learning models: Support Vector Machines (SVM), k-Nearest Neighbors (k-NN), and Linear Regression. Each technique has distinct features and applications, crucial for predictive modeling and data analysis.

Introduction to SVM

Support Vector Machines (SVM) are powerful for classification tasks. They work by finding the hyperplane that best separates different classes in the data.

SVM is effective in high-dimensional spaces and is versatile thanks to kernel functions.

Key to SVM is margin maximization, separating data with the largest possible gap. This improves the model’s ability to generalize to new data.

SVM can handle linear and non-linear data using kernels like linear, polynomial, and radial basis function. This flexibility is valuable for complex datasets.
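The kernels mentioned above can be compared with a short experiment. This is a sketch under assumptions: the make_moons dataset, the 25% test split, and default SVC settings are all choices made for the example, and the resulting scores will vary with the data.

```python
from sklearn.datasets import make_moons
from sklearn.model_selection import train_test_split
from sklearn.svm import SVC

# Two interleaving half circles: not linearly separable.
X, y = make_moons(n_samples=300, noise=0.2, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0
)

# Fit one SVM per kernel and record its accuracy on the held-out data.
scores = {}
for kernel in ("linear", "poly", "rbf"):
    model = SVC(kernel=kernel).fit(X_train, y_train)
    scores[kernel] = model.score(X_test, y_test)

print(scores)
```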

Exploring k-Nearest Neighbors

The k-Nearest Neighbors algorithm (k-NN) classifies data based on the closest training examples. It is simple yet effective for various tasks.

In k-NN, data points are assigned to the class most common among their k closest neighbors. The choice of k controls the balance between bias and variance.

Distance metrics such as Euclidean and Manhattan are essential in determining closeness. Proper normalization of features can significantly impact results.

k-NN is computationally expensive for large datasets, as it requires calculating distances for each query instance. Despite this, it remains popular for its straightforward implementation and intuitive nature.
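The effect of k and of feature normalization can be explored with a sketch like the one below. The Iris dataset, the values of k, and the use of StandardScaler for normalization are assumptions for illustration.

```python
from sklearn.datasets import load_iris
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_iris(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=0, stratify=y
)

# Try several values of k; features are standardized before distances are computed.
accuracy_by_k = {}
for k in (1, 5, 15):
    knn = make_pipeline(
        StandardScaler(),
        KNeighborsClassifier(n_neighbors=k, metric="euclidean"),
    )
    knn.fit(X_train, y_train)
    accuracy_by_k[k] = knn.score(X_test, y_test)

print(accuracy_by_k)
```

Swapping metric="euclidean" for metric="manhattan" is a quick way to see how the distance metric changes the result.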

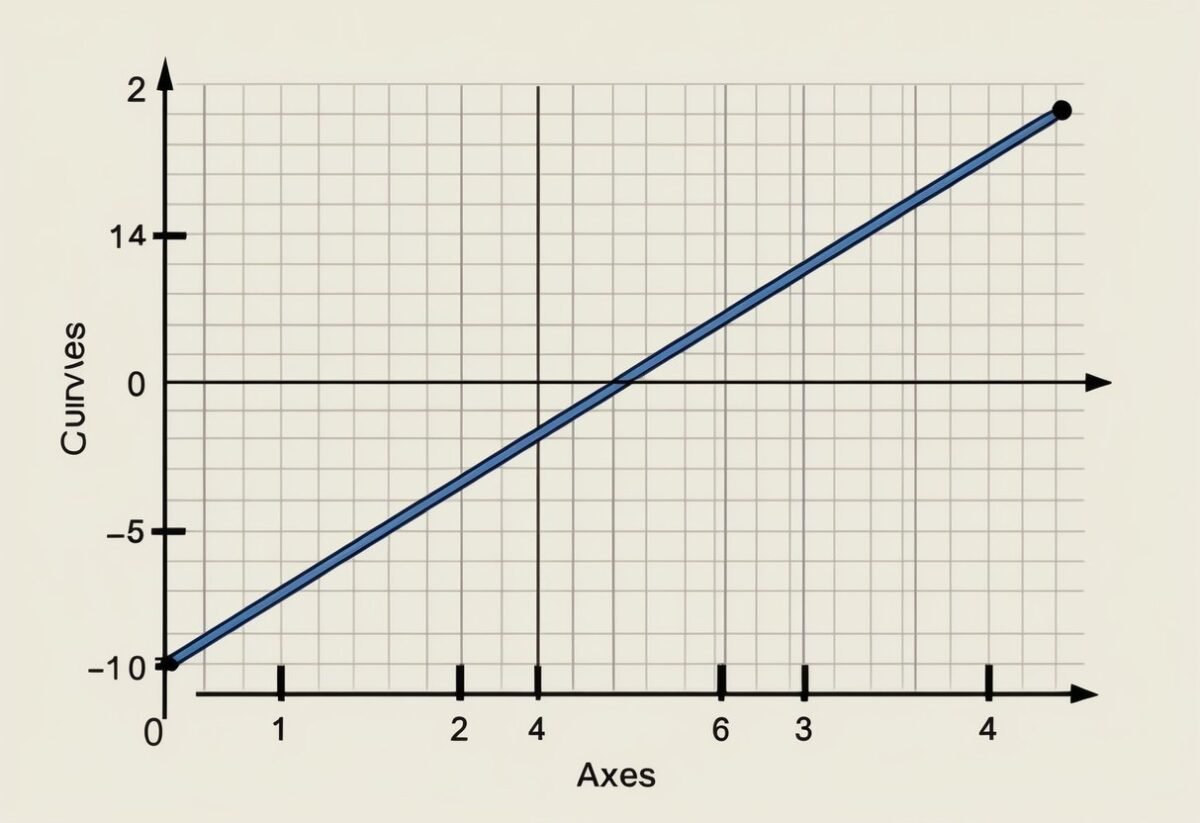

Linear Regression Techniques

Linear regression is fundamental for modeling relationships between variables. It predicts an output value using a linear approximation of input features.

In its simplest form, it fits a line to two variables, minimizing the sum of squared differences between observed and predicted values.

Linear regression extends to several input variables with multiple linear regression, making it applicable for more complex problems.

Regularization techniques like Ridge and Lasso regression address overfitting by penalizing large coefficients. This ensures models do not become overly complex, striking a balance between bias and variance.

Despite its simplicity, linear regression provides a baseline for more advanced machine learning algorithms and remains a go-to technique in many applications.
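The sketch below compares ordinary least squares with Ridge and Lasso on synthetic data. The data-generating coefficients (3 and -2) and the alpha values are assumptions chosen for the example.

```python
import numpy as np
from sklearn.linear_model import Lasso, LinearRegression, Ridge

rng = np.random.default_rng(0)
X = rng.normal(size=(100, 5))
# Only the first two features influence the target; the rest are noise.
y = 3.0 * X[:, 0] - 2.0 * X[:, 1] + rng.normal(scale=0.1, size=100)

ols = LinearRegression().fit(X, y)   # minimizes squared error only
ridge = Ridge(alpha=1.0).fit(X, y)   # adds a penalty on squared coefficients
lasso = Lasso(alpha=0.1).fit(X, y)   # adds a penalty on absolute coefficients

print("OLS:  ", ols.coef_.round(3))
print("Ridge:", ridge.coef_.round(3))
print("Lasso:", lasso.coef_.round(3))
```

Both penalties shrink the coefficients relative to the unregularized fit, and Lasso can drive some of them to exactly zero.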

Data Preprocessing and Transformation

Data preprocessing and transformation are essential steps in preparing datasets for machine learning. These steps include transforming raw data into structured and normalized forms for better model performance. The use of tools like NumPy arrays, sparse matrices, and various transformers can enhance the effectiveness of machine learning algorithms.

Handling Numeric and Categorical Data

When dealing with machine learning, handling numeric and categorical data properly is crucial. Numeric data often requires transformation into a suitable scale or range. Categorical data might need encoding techniques to be properly used in models.

One common approach to manage categorical data is using one-hot encoding or label encoding. These methods convert categories into a numerical form that machines can understand.

By using scikit-learn’s techniques, both numeric and categorical data can be efficiently preprocessed, enhancing the performance of downstream models. Proper handling helps in reducing bias and variance in predictions.
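A minimal sketch of both encodings, using made-up category values. In scikit-learn, OneHotEncoder is meant for input features, while LabelEncoder is intended for target labels.

```python
import numpy as np
from sklearn.preprocessing import LabelEncoder, OneHotEncoder

# A single categorical feature with three distinct values.
colors = np.array([["red"], ["green"], ["blue"], ["green"]])

# One-hot encoding: one binary column per category (sorted: blue, green, red).
one_hot = OneHotEncoder(handle_unknown="ignore")
encoded = one_hot.fit_transform(colors).toarray()

# Label encoding: one integer per class (sorted: ham=0, spam=1).
labels = LabelEncoder().fit_transform(["spam", "ham", "spam", "ham"])

print(encoded)
print(labels)
```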

Scaling and Normalizing with StandardScaler

Scaling and normalizing data ensure that the model treats all features equally, which can lead to faster convergence. StandardScaler from scikit-learn standardizes features by removing the mean and scaling to unit variance.

After this transformation, each feature has a mean of zero and a standard deviation of one on the data used to fit the scaler.

This transformation is crucial in algorithms sensitive to the scale of data, such as Support Vector Machines and K-means clustering. The process of scaling can be applied using NumPy arrays, which hold numerical data efficiently.

Fitting StandardScaler on the training data and reusing it to transform new data keeps the scaling consistent across datasets and prevents features with large ranges from dominating the model.
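Here is a small sketch of that workflow with illustrative numbers: the scaler learns the mean and standard deviation from the training array and applies the same values to new data.

```python
import numpy as np
from sklearn.preprocessing import StandardScaler

# Two features on very different scales.
X_train = np.array([[1.0, 100.0], [2.0, 200.0], [3.0, 300.0], [4.0, 400.0]])
X_new = np.array([[2.5, 250.0]])

scaler = StandardScaler()
X_train_scaled = scaler.fit_transform(X_train)  # learn mean/std, then scale
X_new_scaled = scaler.transform(X_new)          # reuse the learned mean/std

print(X_train_scaled.mean(axis=0), X_train_scaled.std(axis=0))
print(X_new_scaled)
```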

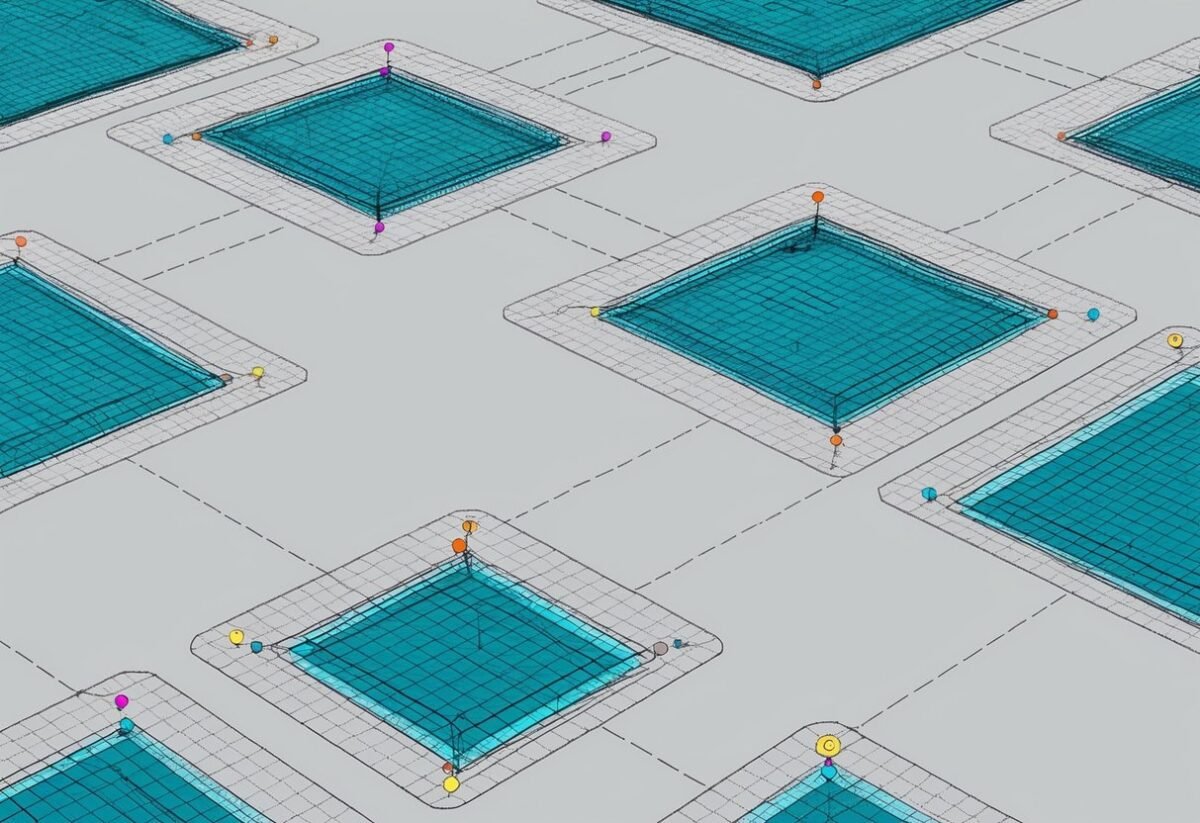

Efficient Data Preprocessing with ColumnTransformer

For complex datasets that contain a mix of data types, ColumnTransformer provides an efficient way to preprocess them. This tool applies different transformers to different columns, or groups of columns, of the data.

This is particularly useful when some fields require scaling while others might need encoding.

ColumnTransformer can manage various transformations simultaneously, processing both dense matrices and sparse representations. By utilizing this tool, the preprocessing pipeline becomes streamlined, making it easier to handle multi-type datasets.

It provides flexibility in managing diverse data types, ensuring robust data preparation for machine learning tasks.
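A possible setup is sketched below, assuming a hypothetical DataFrame with two numeric columns and one categorical column. The column names and transformer labels are invented for the example.

```python
import pandas as pd
from sklearn.compose import ColumnTransformer
from sklearn.preprocessing import OneHotEncoder, StandardScaler

# Hypothetical mixed-type data.
df = pd.DataFrame({
    "age": [25, 32, 47, 51],
    "income": [30000.0, 52000.0, 81000.0, 64000.0],
    "city": ["Paris", "Lyon", "Paris", "Nice"],
})

preprocessor = ColumnTransformer(
    transformers=[
        # Scale the numeric columns.
        ("numeric", StandardScaler(), ["age", "income"]),
        # One-hot encode the categorical column.
        ("categorical", OneHotEncoder(handle_unknown="ignore"), ["city"]),
    ]
)

# Result: 2 scaled columns + 3 one-hot columns (may be dense or sparse).
X = preprocessor.fit_transform(df)
print(X.shape)
```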

Effective Model Selection and Training

Choosing the right model and training it effectively are important steps in machine learning. In this section, the focus is on splitting datasets using train_test_split, using cross-validation for enhancing model reliability, and training models with the fit method.

Splitting Datasets with train_test_split

Dataset splitting is crucial for model evaluation. Holding out part of the data provides an independent estimate of a model’s quality.

The train_test_split function in scikit-learn helps divide data into training and testing sets.

It is important to allocate a proper ratio, often 70-80% for training and 20-30% for testing, allowing the model to learn patterns from the training data while the results can be tested for accuracy on unseen data.

Key Parameters:

- test_size or train_size: Specify proportions directly.

- random_state: Ensures reproducibility by fixing the seed.

- shuffle: Determines whether the data is shuffled before splitting.

These parameters allow customization of the train/test split. The split itself does not prevent overfitting or underfitting, but comparing performance on the training and test sets makes both problems visible.
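The parameters listed above can be tried out on a toy array. This is a sketch with made-up data; the 80/20 ratio and the seed value are arbitrary choices.

```python
import numpy as np
from sklearn.model_selection import train_test_split

# 10 samples with 2 features each, plus alternating labels.
X = np.arange(20).reshape(10, 2)
y = np.array([0, 1] * 5)

# Hold out 20% of the samples; the fixed seed makes the split repeatable.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42, shuffle=True
)

# Calling the function again with the same seed yields the same split.
X_train_again, X_test_again, _, _ = train_test_split(
    X, y, test_size=0.2, random_state=42, shuffle=True
)

print(X_train.shape, X_test.shape)
```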

Utilizing Cross-Validation Techniques

Cross-validation is used for better assessment of a model’s performance. Instead of a single train/test split, cross-validation involves splitting the data multiple times to verify reliability.

Methods like K-fold cross-validation divide the dataset into K subsets, or folds.

During each iteration, the model is trained on K-1 folds and tested on the remaining fold. This process is repeated K times.

Cross-validation helps find optimal hyperparameters and improve model selection by verifying that the model’s performance is consistent and not random. This allows the practitioner to confidently compare and select the best model.
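The K-fold procedure described above can be sketched as follows, assuming the Iris dataset, a logistic regression model, and K=5 purely for illustration.

```python
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import KFold, cross_val_score

X, y = load_iris(return_X_y=True)
model = LogisticRegression(max_iter=1000)

# 5 folds: train on 4, test on the remaining 1, repeated 5 times.
cv = KFold(n_splits=5, shuffle=True, random_state=0)
scores = cross_val_score(model, X, y, cv=cv)

# One score per fold; the spread indicates how stable the model is.
print(scores)
print(scores.mean(), scores.std())
```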

Learning Model Training and the fit Method

Training the model involves applying algorithms to datasets. In scikit-learn, this process is done using the fit method.

It adjusts the model parameters according to the training set data. Model training builds a mathematical representation that can predict outcomes from new data inputs.

Essential points about the fit method:

- Requires training data features and target labels.

- This step can be resource-intensive, depending on model complexity and dataset size.

Upon completion, the model should be able to generalize well to unseen data. Proper training can transform raw data into useful predictions, ensuring the model is ready for real-world application.

Understanding Estimators and Predictors

Estimators and predictors play a crucial role in machine learning models using Scikit-Learn. Estimators handle the fitting of models, while predictors are used to make predictions with trained models.

Estimator API in Scikit-Learn

Scikit-Learn provides a robust Estimator API that standardizes how different models fit data and predict outcomes. This API ensures that all estimators, whether they are support vector machines (SVM), decision trees, or linear models, follow a consistent interface.

To use an estimator, one usually calls the .fit() method with training data. This process adapts the model to identify patterns in the data.

Key features include flexibility to handle various types of data and ease of integration with other tools, such as pipelines.

From Estimation to Prediction

Once a model has been trained using an estimator, it transitions to making predictions. The .predict() method is central here, allowing the model to forecast based on new input data.

Predictors are vital for applying the insights drawn from data analysis to real-world scenarios.

For example, in classification tasks, such as identifying spam emails, the predictor analyzes features to classify new emails. Prediction accuracy is influenced heavily by the choice of estimator and the quality of the training.
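The consistent interface is easiest to see when several estimators are used interchangeably. The sketch below assumes the Iris dataset and three arbitrarily chosen classifiers.

```python
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.svm import SVC
from sklearn.tree import DecisionTreeClassifier

X, y = load_iris(return_X_y=True)

estimators = [
    SVC(),
    DecisionTreeClassifier(random_state=0),
    LogisticRegression(max_iter=1000),
]

# Every estimator exposes the same fit() and predict() methods.
predictions = {}
for estimator in estimators:
    estimator.fit(X, y)                      # learn from features and labels
    name = type(estimator).__name__
    predictions[name] = estimator.predict(X[:2])  # predict for two samples

print(predictions)
```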

Evaluating Estimators and Model Predictions

Model evaluation is crucial to understanding how well an estimator performs on unseen data.

Scikit-Learn offers various evaluation metrics to assess performance, like accuracy, precision, and recall. These metrics help in judging predictive power and are essential for refining models.

To ensure robust evaluation, techniques such as cross-validation are often used.

This involves splitting the dataset into parts and training the model several times, ensuring that model predictions are not only accurate but also reliable across different subsets of the data.

Using Scikit-Learn’s tools, like GridSearchCV, developers can optimize model parameters systematically for better performance.

This systematic evaluation enhances the overall quality of predictions made by the model.

Evaluating Machine Learning Models

Evaluating machine learning models is crucial for understanding how well a model performs. This involves examining different metrics and tools to ensure accurate predictions and decision-making.

Metrics for Model Accuracy

Model evaluation begins with measuring how often predictions are correct.

The primary evaluation metric for this is the accuracy score, which calculates the percentage of correct predictions over the total number of cases.

Accuracy score is often used as a starting point, but it is important to consider additional metrics such as precision, recall, and F1-score. These provide a more granular understanding of model performance by revealing how many instances were correctly identified as positive or negative.

Scikit-learn’s metrics module offers functions to calculate these metrics, making it easier to compare different models or fine-tune parameters.
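A worked example with hand-made labels shows how the four metrics differ. The label vectors are invented; they contain 3 true positives, 2 false positives, 1 false negative, and 2 true negatives.

```python
from sklearn.metrics import accuracy_score, f1_score, precision_score, recall_score

y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 1]

accuracy = accuracy_score(y_true, y_pred)    # (3 + 2) / 8 = 0.625
precision = precision_score(y_true, y_pred)  # 3 / (3 + 2) = 0.6
recall = recall_score(y_true, y_pred)        # 3 / (3 + 1) = 0.75
f1 = f1_score(y_true, y_pred)                # harmonic mean of precision and recall

print(accuracy, precision, recall, f1)
```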

Confusion Matrix and ROC Curves

A confusion matrix is a table used to evaluate the performance of a classification model by showing the actual versus predicted values.

It presents true positives, false positives, true negatives, and false negatives. This helps identify not just the accuracy but also the kinds of errors a model makes.

The ROC curve (Receiver Operating Characteristic curve) illustrates the true positive rate against the false positive rate.

It is used to determine the optimal threshold for classification models, balancing sensitivity and specificity. Scikit-learn provides tools to plot ROC curves, offering insights into model discrimination between classes.

By analyzing these tools, users can better understand model performance in different scenarios.
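Both tools can be computed from a handful of scores. The sketch below uses four made-up samples and an assumed decision threshold of 0.5 for the confusion matrix.

```python
import numpy as np
from sklearn.metrics import confusion_matrix, roc_auc_score, roc_curve

y_true = np.array([0, 0, 1, 1])
y_score = np.array([0.1, 0.4, 0.35, 0.8])  # predicted probability of class 1

# Confusion matrix at a 0.5 threshold: rows are actual, columns are predicted.
y_pred = (y_score >= 0.5).astype(int)
cm = confusion_matrix(y_true, y_pred)

# ROC curve: false/true positive rates across all thresholds.
fpr, tpr, thresholds = roc_curve(y_true, y_score)
auc = roc_auc_score(y_true, y_score)

print(cm)
print(fpr, tpr)
print(auc)
```

The fpr and tpr arrays can be passed to a plotting library to draw the curve itself.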

Error Analysis and Model Improvement

Analyzing errors is key to improving model accuracy.

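A model’s prediction error is often described in terms of two sources: bias and variance. Bias refers to errors from overly simplistic models that underfit the data, while variance refers to errors from overly complex models that are too sensitive to the particular training set and overfit.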

Errors can reveal inadequacies in data preprocessing or highlight areas where data might be misclassified.

Utilizing techniques such as cross-validation and hyperparameter tuning within Scikit-learn can help in refining model predictions.

By focusing on these errors, practitioners strive for a balance that minimizes both bias and variance, leading to better model performance.

Improving Model Performance through Tuning

Tuning a machine learning model can greatly enhance its performance. It involves adjusting hyper-parameters, employing various tuning strategies, and using optimization methods like gradient descent.

The Importance of Hyper-Parameters

Hyper-parameters play a vital role in defining the structure and performance of machine learning models. They are set before training and are not updated by the learning process.

These parameters can include the learning rate, the number of trees in a random forest, or the number of layers in a neural network.

Proper tuning of hyper-parameters can significantly boost a model’s accuracy and efficiency. For instance, in grid search, various combinations of parameters are tested to find the most effective one. Scikit-learn offers several tools to tune hyper-parameters effectively.

Strategies for Parameter Tuning

There are several strategies for parameter tuning that can help optimize model performance.

Grid search involves trying different combinations of hyper-parameters to find the best fit. Random search, on the other hand, selects random combinations and can be more efficient in some cases.

Bayesian optimization is another advanced technique that models the objective function to identify promising regions for parameter testing.

Scikit-learn provides convenient functions like GridSearchCV and RandomizedSearchCV, which automate some of these strategies and evaluate models on predefined metrics.
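A compact sketch of both search strategies follows. The SVC model, the parameter grid, and the number of random iterations are assumptions made for the example.

```python
from sklearn.datasets import load_iris
from sklearn.model_selection import GridSearchCV, RandomizedSearchCV
from sklearn.svm import SVC

X, y = load_iris(return_X_y=True)
param_grid = {"C": [0.1, 1, 10], "kernel": ["linear", "rbf"]}

# Grid search: evaluates all 3 x 2 = 6 combinations with 5-fold CV.
grid = GridSearchCV(SVC(), param_grid, cv=5, scoring="accuracy").fit(X, y)

# Random search: evaluates only 4 randomly chosen combinations.
random_search = RandomizedSearchCV(
    SVC(), param_grid, n_iter=4, cv=5, scoring="accuracy", random_state=0
).fit(X, y)

print(grid.best_params_, grid.best_score_)
print(random_search.best_params_, random_search.best_score_)
```

Random search becomes more attractive as the number of hyper-parameters grows, since the full grid quickly becomes too large to evaluate.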

Gradient Descent and Optimization

Gradient descent is a fundamental optimization algorithm used in machine learning. It aims to minimize a cost function by iteratively stepping in the direction of steepest descent, that is, along the negative gradient, and adjusting model weights accordingly.

There are different variants, such as Batch Gradient Descent, Stochastic Gradient Descent, and Mini-batch Gradient Descent, each with its own way of updating parameters.

This method is especially useful in training deep learning models. Gradient descent optimizes the model’s parameters (its weights) rather than its hyper-parameters, although its own settings, such as the learning rate, are hyper-parameters that benefit from tuning. Understanding the nuances of gradient descent can enhance the effectiveness and speed of finding optimal parameters for a model.
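To show the update rule in action, here is a minimal batch gradient descent sketch for linear regression written with NumPy. The synthetic data (intercept 4, slope 3), the learning rate, and the iteration count are assumptions for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
X = rng.normal(size=(200, 1))
y = 4.0 + 3.0 * X[:, 0] + rng.normal(scale=0.1, size=200)

# Add a column of ones so the intercept is learned as a weight.
X_b = np.c_[np.ones(len(X)), X]
weights = np.zeros(2)
learning_rate = 0.1

for _ in range(500):
    residuals = X_b @ weights - y
    # Gradient of the mean squared error with respect to the weights.
    gradient = 2.0 / len(y) * X_b.T @ residuals
    # Step against the gradient (direction of steepest descent).
    weights -= learning_rate * gradient

print(weights)  # should end up close to the intercept and slope used above
```

Stochastic and mini-batch variants follow the same loop but compute the gradient on one sample or a small subset per step instead of the full dataset.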

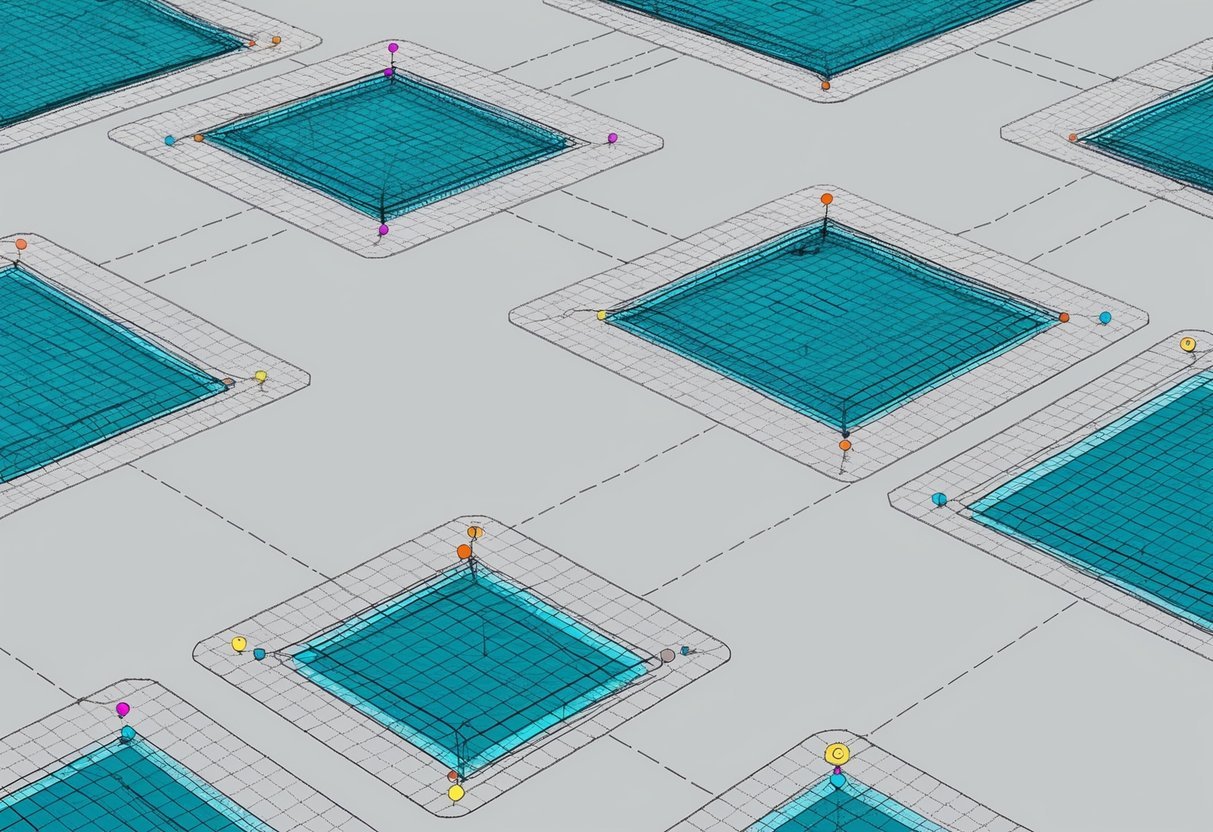

Workflow Automation with Pipelines

Scikit-learn Pipelines provide a structured approach to manage and automate machine learning processes. They streamline tasks such as data preprocessing and model training, making it easier to create consistent and maintainable workflows.

Building Effective Pipelines

Building a pipeline involves organizing several processing steps into a sequential order. Each step can include tasks such as data transformations, feature selection, or model training.

By chaining these together, users ensure that the entire operation follows a consistent path from input data to final prediction.

Pipelines also reduce code complexity. By encapsulating processes within a single entity, they keep the code organized and easier to maintain. This approach minimizes chances of errors and ensures that data flows seamlessly through various stages.

Additionally, effective pipelines promote flexibility by allowing users to easily modify or update individual steps without disrupting the entire workflow.

Using pipelines can enhance cross-validation practices. Because the whole workflow is treated as a single object, each transformation is fitted on the training portion of a fold and then applied unchanged to the validation portion. This keeps information from the validation data out of the preprocessing steps, making model evaluation fairer and more reliable.

Integrating Preprocessing and Model Training

Integrating data preprocessing and model training is a core function of pipelines. By combining these steps, pipelines automate the repetitive task of applying transformations before every model training process.

This saves time and reduces the risk of inconsistency between training and deployment processes.

Preprocessing steps might include scaling features, encoding categorical variables, or handling missing values. By embedding these within a pipeline, users ensure they are automatically applied whenever the model is trained or retrained.

Pipelines enhance reproducibility by maintaining a detailed record of all processing steps. This makes it easier to replicate results later or share workflows with other team members.

Implementing pipelines helps maintain clear documentation of data transformations and model settings, ensuring transparency throughout the machine learning project.
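A short sketch of a two-step pipeline follows. The step names ("scale" and "model"), the Iris dataset, and the chosen estimator are assumptions for the example.

```python
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_iris(return_X_y=True)

# Preprocessing and model chained into one object.
pipeline = Pipeline(steps=[
    ("scale", StandardScaler()),
    ("model", LogisticRegression(max_iter=1000)),
])

# The scaler is refitted on the training part of every fold.
scores = cross_val_score(pipeline, X, y, cv=5)

# Change a single step's setting without touching the others.
pipeline.set_params(model__C=0.5)

# fit() runs every step in order; predict() applies the same transformations.
pipeline.fit(X, y)
predictions = pipeline.predict(X[:3])
print(scores.mean(), predictions)
```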

Practical Machine Learning with Real-World Datasets

Engaging with real-world datasets is essential for learning machine learning. It allows learners to apply techniques such as classification and regression on actual data.

Navigating Kaggle for Machine Learning Competitions

Kaggle is an excellent platform for tackling real-world data challenges. Competitions here provide datasets and pose problems that mirror real industry demands.

Participating in competitions can help improve skills in data cleaning, feature engineering, and model evaluation.

Using a Pandas DataFrame for data exploration is common. This process helps in understanding the structure and characteristics of the data.

Kaggle provides a collaborative environment where users can share notebooks (formerly called kernels) containing code and insights, enhancing mutual learning.

Working with Iris, Diabetes, and Digits Datasets

The Iris dataset is a classic dataset for classification tasks. It includes measurements of iris flowers and is often used as a beginner’s project. The goal is to predict the class of the iris based on features like petal length and width.

The Diabetes dataset is used for regression tasks, aiming to predict disease progression based on several medical indicators. It helps in grasping how to handle numeric predictors and targets.

The Digits dataset contains images representing handwritten digits. It is widely used for image classification projects, applying algorithms like the Decision Tree or Support Vector Machine. By working with these datasets, learners develop an understanding of how to preprocess data and apply models effectively.
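All three datasets ship with scikit-learn, so they can be loaded without downloading anything. The sketch below only inspects their shapes.

```python
from sklearn.datasets import load_diabetes, load_digits, load_iris

iris = load_iris()          # classification: 3 iris species
diabetes = load_diabetes()  # regression: disease progression score
digits = load_digits()      # classification: handwritten digits 0-9

print(iris.data.shape, iris.feature_names)
print(diabetes.data.shape)
# Each digit is an 8x8 image, also provided flattened to 64 features.
print(digits.data.shape, digits.images.shape)
```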

Visualizing Data and Machine Learning Models

Visualizing data and machine learning models is crucial in data science. It helps to understand model performance and make data-driven decisions.

Tools like Matplotlib and Seaborn are popular for creating these visualizations within Jupyter notebooks.

Data Visualization with Matplotlib and Seaborn

Matplotlib is a versatile library for creating various plots and graphs. It’s widely used for line charts, bar charts, and histograms. The library allows customization, helping users clearly display complex information.

Seaborn enhances Matplotlib’s functionality by providing a high-level interface for drawing attractive and informative statistical graphics. It excels in visualizing distribution and relationship between variables. Seaborn’s themes and color palettes make it easier to create visually appealing plots.

Using these tools, data scientists can generate insightful visualizations that aid in understanding trends, outliers, and patterns in data. Both libraries are well-integrated with Jupyter notebooks, making them convenient for interactive analysis.

Interpreting Models through Visualization

Machine learning models can be complex, making them difficult to interpret. Visualization can bridge this gap by offering insight into model behavior and decision-making processes.

For example, plotting learning curves helps evaluate model scalability and performance.

Scikit-learn’s visualization API offers tools to display estimator predictions and decision boundaries. These tools help identify model strengths and weaknesses.

Furthermore, using tools like partial dependence plots and feature importance graphs can reveal how different features impact predictions. This transparency aids in building trust in models and provides a clearer understanding of their functioning.
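The numbers behind a learning curve and a feature importance graph can be computed as sketched below. The random forest model, the Iris dataset, and the training sizes are assumptions for illustration; the resulting arrays can then be drawn with Matplotlib or Seaborn.

```python
from sklearn.datasets import load_iris
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import learning_curve

X, y = load_iris(return_X_y=True)
forest = RandomForestClassifier(n_estimators=50, random_state=0)

# Scores for growing training set sizes, each evaluated with 5-fold CV.
# shuffle=True matters here because the Iris samples are ordered by class.
train_sizes, train_scores, valid_scores = learning_curve(
    forest, X, y, cv=5, train_sizes=[30, 60, 120],
    shuffle=True, random_state=0,
)

# Impurity-based importance of each of the 4 features (sums to 1).
forest.fit(X, y)
importances = forest.feature_importances_

print(train_sizes)
print(valid_scores.mean(axis=1))
print(importances)
```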

How do I contribute to the scikit-learn GitHub repository?

Contributing involves making meaningful additions or improvements to the codebase.

Interested individuals can visit scikit-learn’s GitHub repository and follow the guidelines for contributors.

Participating in community discussions or submitting pull requests are common ways to get involved.