Understanding T-SQL and Its Environment

T-SQL, or Transact-SQL, is a powerful extension of SQL that adds procedural programming features. It is used primarily with Microsoft SQL Server to manage and retrieve data.

This environment is critical for performing operations like data manipulation, querying, and managing databases efficiently.

Overview of T-SQL

T-SQL is a variant of SQL designed to interact with databases in Microsoft SQL Server. It includes additional features such as transaction control, error handling, and declared variables.

These enhancements allow users to create complex queries and stored procedures.

The language also supports relational operators such as JOIN, which are essential for combining data from multiple tables, enhancing data analysis.

T-SQL also provides the PIVOT and UNPIVOT operators, which simplify crosstab reports that are otherwise complex to write.

Fundamentals of SQL Server

Microsoft SQL Server is a relational database management system (RDBMS) that uses T-SQL as its primary query language. It offers a robust platform for running business-critical applications and supports large-scale database management through features such as scalability and performance tuning.

SQL Server provides a variety of tools for database tuning, such as indexes, which improve data retrieval speed.

Understanding the architecture, including the storage engine and the query processor, is vital for leveraging the full potential of SQL Server.

This knowledge aids in optimizing performance and ensuring efficient data handling and security.

Foundations of Data Structures

Understanding data structures is crucial for organizing and managing data efficiently in databases. The key elements include defining tables to hold data and inserting data properly into these structures.

Introduction to CREATE TABLE

Creating a table involves defining the structure that will hold your data. The CREATE TABLE statement declares each column’s name and the kind of data it will store.

For example, nvarchar stores variable-length Unicode strings, which is useful for text fields that vary in size or contain international characters.

Choosing the right data types is important because it affects both performance and storage. A primary key ensures each row can be uniquely identified, while other constraints such as NOT NULL, UNIQUE, CHECK, and FOREIGN KEY maintain data integrity.

Tables often include indexes to speed up queries, improving performance.
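As a minimal sketch of the points above, the following statement defines a hypothetical dbo.Employees table. The table name, column names, sizes, and constraint names are assumptions chosen for illustration, not taken from any real schema.

```sql
-- Hypothetical table; every name and size here is illustrative only.
CREATE TABLE dbo.Employees
(
    EmployeeID  INT IDENTITY(1,1) NOT NULL
        CONSTRAINT PK_Employees PRIMARY KEY,      -- uniquely identifies each row
    FirstName   NVARCHAR(50)  NOT NULL,           -- variable-length Unicode text
    LastName    NVARCHAR(50)  NOT NULL,
    Department  NVARCHAR(50)  NULL,
    HireDate    DATE          NOT NULL
        CONSTRAINT DF_Employees_HireDate DEFAULT (CAST(GETDATE() AS DATE)),
    Salary      DECIMAL(10,2) NULL
        CONSTRAINT CK_Employees_Salary CHECK (Salary >= 0)  -- rejects negative salaries
);

-- Nonclustered index to speed up filtering and grouping by department.
CREATE NONCLUSTERED INDEX IX_Employees_Department
    ON dbo.Employees (Department);
```

The primary key also creates a clustered index by default, so the extra index is only worthwhile for columns that queries actually filter or group on.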

Inserting Data with INSERT INTO

Once tables are defined, data can be added using the INSERT INTO statement. This allows the addition of new records into the table.

It can specify the exact columns that will receive data, which is useful when not all columns will be filled with every insert.

Correctly aligning values with column data types is crucial. For example, text inserted into an nvarchar column should be written as a Unicode literal with the N prefix, such as N'Zoë', so that characters are not lost in conversion.

To insert many rows, a single INSERT INTO statement can list several rows in its VALUES clause, or copy rows from a query with INSERT INTO followed by SELECT. For large data sets, bulk-loading methods such as BULK INSERT can be used to optimize performance.
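The sketch below shows an insert with an explicit column list and a multi-row insert. It uses a table variable named @Employees with made-up sample rows so that it can run on its own without touching any real table.

```sql
-- Table variable used purely for demonstration; names and data are made up.
DECLARE @Employees TABLE
(
    EmployeeID INT IDENTITY(1,1) PRIMARY KEY,
    FirstName  NVARCHAR(50) NOT NULL,
    LastName   NVARCHAR(50) NOT NULL,
    Department NVARCHAR(50) NULL
);

-- Explicit column list: EmployeeID is generated, Department is omitted and stays NULL.
INSERT INTO @Employees (FirstName, LastName)
VALUES (N'Zoë', N'Müller');

-- One statement, several rows.
INSERT INTO @Employees (FirstName, LastName, Department)
VALUES (N'Ana',  N'Silva',  N'Sales'),
       (N'Ben',  N'Okafor', N'Finance'),
       (N'Chen', N'Li',     N'Sales');

SELECT EmployeeID, FirstName, LastName, Department
FROM @Employees
ORDER BY EmployeeID;
```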

Querying Data Using SELECT

Learning to query data with SELECT forms a crucial part of T-SQL proficiency. Understanding how to write basic SELECT statements and use the GROUP BY clause enables efficient data retrieval and organization.

Writing Basic SELECT Statements

The SELECT statement is a fundamental component of T-SQL. It allows users to retrieve data from databases by specifying the desired columns.

For example, writing SELECT FirstName, LastName FROM Employees retrieves the first and last names from the Employees table.

Using the DISTINCT keyword helps eliminate duplicate values in results. For instance, SELECT DISTINCT Country FROM Customers returns a list of unique countries from the Customers table.

It’s important to also consider sorting results. This is done using ORDER BY, such as ORDER BY LastName ASC to sort names alphabetically.

Another feature is filtering, achieved with a WHERE clause. For example, SELECT * FROM Orders WHERE OrderDate = '2024-11-28' retrieves all orders from a specific date, allowing precise data extraction based on conditions.
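The clauses described above can be tried together on a small, invented data set. The @Orders table variable and its rows are assumptions made for this sketch.

```sql
-- Invented sample data for demonstration.
DECLARE @Orders TABLE
(
    OrderID     INT PRIMARY KEY,
    Country     NVARCHAR(50)  NOT NULL,
    OrderDate   DATE          NOT NULL,
    OrderAmount DECIMAL(10,2) NOT NULL
);

INSERT INTO @Orders (OrderID, Country, OrderDate, OrderAmount)
VALUES (1, N'Canada', '2024-11-27', 120.00),
       (2, N'Brazil', '2024-11-28',  80.00),
       (3, N'Canada', '2024-11-28', 310.00),
       (4, N'Brazil', '2024-11-29',  45.00);

-- DISTINCT removes duplicates; ORDER BY sorts the remaining values.
SELECT DISTINCT Country
FROM @Orders
ORDER BY Country ASC;
-- Returns Brazil, then Canada.

-- WHERE keeps only the rows for one date; naming columns avoids SELECT *.
SELECT OrderID, Country, OrderAmount
FROM @Orders
WHERE OrderDate = '2024-11-28'
ORDER BY OrderAmount DESC;
-- Returns order 3 (310.00), then order 2 (80.00).
```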

Utilizing GROUP BY Clauses

The GROUP BY clause is essential for organizing data into summary rows, often used with aggregate functions like COUNT, SUM, or AVG.

For instance, SELECT Department, COUNT(*) FROM Employees GROUP BY Department counts the number of employees in each department.

GROUP BY works with aggregate functions to analyze data sets. For example, SELECT ProductID, SUM(SalesAmount) FROM Sales GROUP BY ProductID gives total sales per product. This helps in understanding data distribution across different groups.

Filtering grouped data involves the HAVING clause, which is applied after grouping. An example is SELECT CustomerID, SUM(OrderAmount) FROM Orders GROUP BY CustomerID HAVING SUM(OrderAmount) > 1000, which selects customers with orders exceeding a certain amount, providing insights into client spending.
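A self-contained sketch of GROUP BY with HAVING follows. The @Orders table variable and its values are invented for illustration.

```sql
-- Invented sample data for demonstration.
DECLARE @Orders TABLE
(
    OrderID     INT PRIMARY KEY,
    CustomerID  INT           NOT NULL,
    OrderAmount DECIMAL(10,2) NOT NULL
);

INSERT INTO @Orders (OrderID, CustomerID, OrderAmount)
VALUES (1, 101,  600.00),
       (2, 101,  550.00),
       (3, 102,  300.00),
       (4, 103, 1200.00),
       (5, 102,  200.00);

-- WHERE would filter individual rows before grouping;
-- HAVING filters the groups after the aggregates are computed.
SELECT CustomerID,
       COUNT(*)         AS OrderCount,
       SUM(OrderAmount) AS TotalAmount
FROM @Orders
GROUP BY CustomerID
HAVING SUM(OrderAmount) > 1000
ORDER BY TotalAmount DESC;
-- Returns customer 103 (1200.00) and customer 101 (1150.00);
-- customer 102 totals 500.00 and is filtered out.
```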

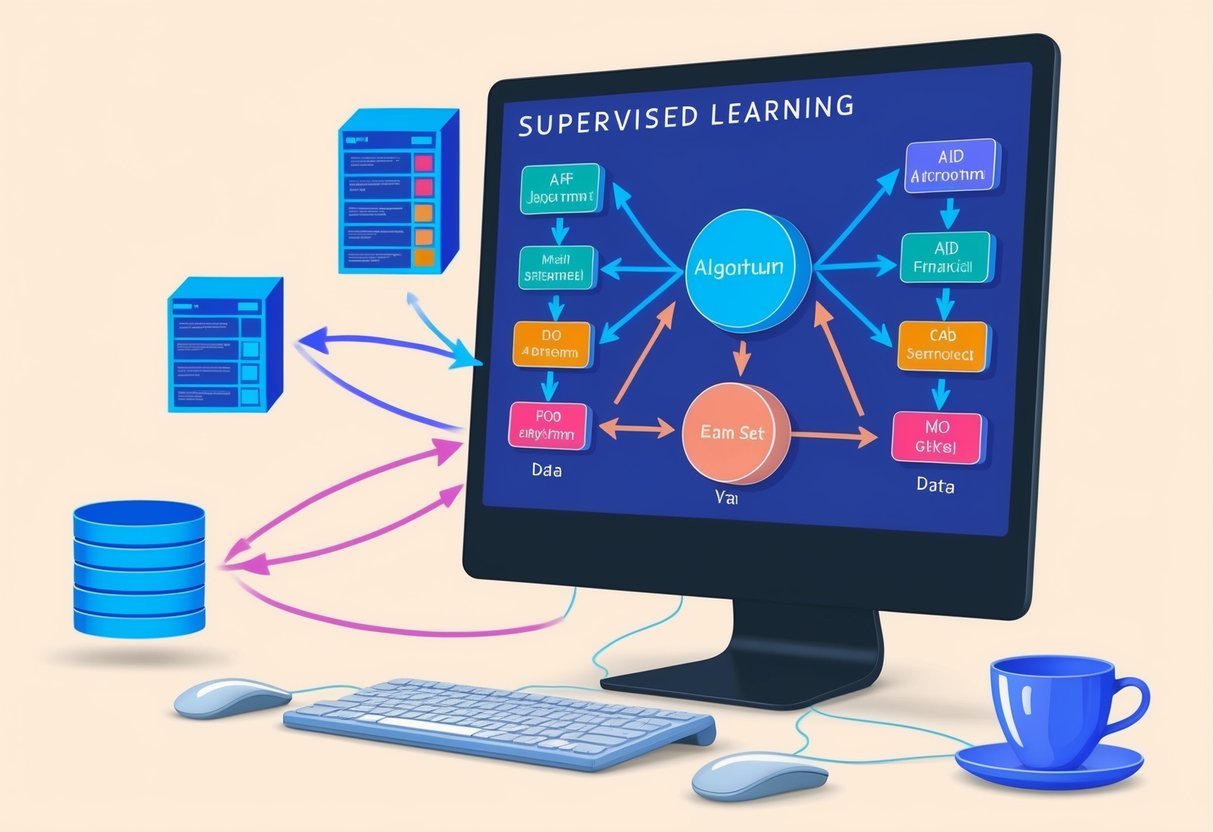

Exploring Aggregate Functions

Aggregate functions in T-SQL provide a way to compute a single result from a set of input values. These functions are essential for operations such as calculating totals, averages, and other statistical measures. Understanding their usage is key to effective data analysis and manipulation.

Using MAX, COUNT and Other Aggregates

The MAX function identifies the highest value in a column. It’s useful for finding maximum sales, highest scores, or other peak values in datasets. To use it, simply select MAX(column_name) from the target table.

The COUNT function counts rows. COUNT(*) counts every row in the group, while COUNT(column_name) counts only the rows where that column is not NULL. It is often used to tally the number of orders, users, or items and is crucial for quantifying data without needing additional detail.

Other aggregate functions include SUM for totals, AVG for averages, and MIN for minimum values, and several aggregates can be combined in a single query.

Each function serves a specific purpose in summarizing data sets effectively and offers powerful insights into the data.
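The following sketch runs several aggregates over one invented table variable, @Scores, which deliberately contains a NULL to show how the functions treat missing values.

```sql
-- Invented sample data; one score is deliberately NULL.
DECLARE @Scores TABLE
(
    StudentID INT PRIMARY KEY,
    Score     INT NULL
);

INSERT INTO @Scores (StudentID, Score)
VALUES (1, 80), (2, 95), (3, NULL), (4, 70);

SELECT COUNT(*)     AS TotalRows,      -- 4: counts every row
       COUNT(Score) AS ScoredRows,     -- 3: the NULL score is skipped
       MAX(Score)   AS HighestScore,   -- 95
       MIN(Score)   AS LowestScore,    -- 70
       SUM(Score)   AS TotalScore,     -- 245
       AVG(Score)   AS IntegerAverage, -- 81: AVG over an INT column returns an INT
       AVG(CAST(Score AS DECIMAL(5,2))) AS DecimalAverage -- roughly 81.67
FROM @Scores;
```

Casting before averaging is a common way to avoid the integer truncation shown in the IntegerAverage column.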

Advanced Selection Techniques

In learning T-SQL, two advanced selection techniques stand out: Common Table Expressions (CTEs) and CASE statements. These tools help manage complex queries and refine data selection for precise results.

Common Table Expressions (CTEs)

Common Table Expressions, or CTEs, offer a way to create temporary result sets. They simplify complex queries by breaking them into smaller, more manageable parts.

Using CTEs, one can enhance readability and make maintenance easier.

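A CTE starts with the WITH keyword, followed by a name for the CTE and the keyword AS. Inside the parentheses that follow, a SELECT statement defines the result set, and the statement immediately after the CTE can then query it by name.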

CTEs are especially helpful for creating recursive queries, which repeat a process until a condition is met.

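CTEs mainly improve readability rather than performance. A CTE can be referenced several times in the statement that follows it, which avoids repeating the same subquery, although SQL Server does not cache the result and may evaluate the CTE once per reference.

Recursive CTEs in particular make it easier to handle tasks like hierarchical data retrieval, such as walking an organization chart.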
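As an illustration of a recursive CTE, the sketch below walks a small reporting hierarchy. The @Staff table variable, its columns, and its rows are assumptions made for the example.

```sql
-- Invented hierarchy: Dana manages Eli and Fay; Eli manages Gus.
DECLARE @Staff TABLE
(
    EmployeeID INT PRIMARY KEY,
    ManagerID  INT NULL,
    FullName   NVARCHAR(50) NOT NULL
);

INSERT INTO @Staff (EmployeeID, ManagerID, FullName)
VALUES (1, NULL, N'Dana'),
       (2, 1,    N'Eli'),
       (3, 1,    N'Fay'),
       (4, 2,    N'Gus');

-- The statement before WITH must end with a semicolon.
WITH OrgChart AS
(
    -- Anchor member: the top of the hierarchy.
    SELECT EmployeeID, ManagerID, FullName, 0 AS Depth
    FROM @Staff
    WHERE ManagerID IS NULL

    UNION ALL

    -- Recursive member: repeats until no further direct reports are found.
    SELECT s.EmployeeID, s.ManagerID, s.FullName, oc.Depth + 1
    FROM @Staff AS s
    INNER JOIN OrgChart AS oc
        ON s.ManagerID = oc.EmployeeID
)
SELECT EmployeeID, FullName, Depth
FROM OrgChart
ORDER BY Depth, EmployeeID;
-- Dana is at depth 0, Eli and Fay at depth 1, Gus at depth 2.
```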

Employing CASE Statements

The CASE expression in T-SQL, often called a CASE statement, provides a way to add conditional logic within queries. This feature allows for transforming data by altering the output based on specified conditions. It functions similarly to an if-else structure in programming.

The syntax of a CASE statement begins with CASE, followed by one or more WHEN conditions with their THEN results and an optional ELSE, and ends with END.

WHEN conditions are evaluated in the order they appear, and the first true condition determines the result. If no condition is true and there is no ELSE, the result is NULL.

CASE statements are useful for data transformation, creating calculated fields, or replacing data values.

They enhance flexibility in queries, making it possible to modify data output directly in SQL without requiring additional programming logic. These capabilities allow for dynamic and precise data analysis within T-SQL.
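A short sketch of a searched CASE follows. The @Orders table variable, the thresholds, and the labels are all invented for illustration.

```sql
-- Invented sample data; one amount is NULL to exercise the ELSE branch.
DECLARE @Orders TABLE
(
    OrderID     INT PRIMARY KEY,
    OrderAmount DECIMAL(10,2) NULL
);

INSERT INTO @Orders (OrderID, OrderAmount)
VALUES (1, 50.00), (2, 500.00), (3, 5000.00), (4, NULL);

SELECT OrderID,
       OrderAmount,
       CASE
           WHEN OrderAmount >= 1000 THEN N'Large'   -- checked first
           WHEN OrderAmount >= 100  THEN N'Medium'  -- reached only if the first test fails
           WHEN OrderAmount >= 0    THEN N'Small'
           ELSE N'Unknown'                          -- NULL amounts land here
       END AS OrderSize
FROM @Orders
ORDER BY OrderID;
-- Order 1 is Small, 2 is Medium, 3 is Large, 4 is Unknown.
```

Because evaluation stops at the first true condition, the tests are ordered from the largest threshold down.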

Understanding the PIVOT Operator

The PIVOT operator is a powerful tool in T-SQL for transforming rows into columns, offering a new way to look at data. This functionality is especially useful for creating reports and making data more readable. Users often employ PIVOT in conjunction with aggregation functions to summarize data efficiently.

Basic PIVOT Syntax

Using the PIVOT operator begins with understanding its basic syntax. This syntax allows users to rearrange data fields, turning unique values from one column into multiple columns in the result set.

The core structure includes selecting a base table, choosing the columns to transform, and specifying an aggregation function. For example, using SUM with PIVOT helps sum data for each pivoted column.

A typical PIVOT statement starts with a select query that lays the groundwork for transformation. It specifies which column values will become column headings and what function will be applied to the data. Here is a basic template to visualize:

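-- The outer SELECT lists the grouping column and the new pivoted columns.

SELECT [grouping_column], [new_column1], [new_column2]

FROM (

-- Expose only the columns that PIVOT needs.

SELECT [grouping_column], [original_column], [value_column]

FROM TableName

) AS SourceTable

PIVOT (

SUM([value_column])

FOR [original_column] IN ([new_column1], [new_column2])

) AS PivotTable;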
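To make the template concrete, here is a sketch that pivots yearly sales for two products. The @Sales table variable, its column names, and its figures are invented for the example.

```sql
-- Invented sample data.
DECLARE @Sales TABLE
(
    ProductName NVARCHAR(50)  NOT NULL,
    SalesYear   INT           NOT NULL,
    Amount      DECIMAL(10,2) NOT NULL
);

INSERT INTO @Sales (ProductName, SalesYear, Amount)
VALUES (N'Widget', 2019, 100.00),
       (N'Widget', 2019,  50.00),
       (N'Widget', 2020, 200.00),
       (N'Gadget', 2020,  75.00);

-- Each distinct SalesYear listed in the IN clause becomes its own column.
SELECT ProductName, [2019], [2020]
FROM (SELECT ProductName, SalesYear, Amount FROM @Sales) AS SourceTable
PIVOT (SUM(Amount) FOR SalesYear IN ([2019], [2020])) AS PivotTable
ORDER BY ProductName;
-- Gadget | NULL   | 75.00
-- Widget | 150.00 | 200.00
```

Combinations with no source rows, such as Gadget in 2019, come back as NULL rather than zero.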

Aggregation with PIVOT

The power of PIVOT shines through when combined with aggregation, as it summarizes data across specified dimensions.

Aggregation functions like SUM, AVG, or MIN can be used within a PIVOT to calculate totals, averages, or other statistics for each new column value. For example, using SUM allows the user to see total sales for different product categories.

While executing a PIVOT query, it is crucial to define which data to aggregate. This requires selecting data that is both relevant and meaningful for the intended summary.

Users building the column list for a dynamic pivot often rely on string-concatenation techniques such as FOR XML PATH, or STRING_AGG in SQL Server 2017 and later, though neither is required for a static PIVOT.

This aggregation approach helps in not only reshaping data but also in extracting meaningful insights by presenting data in a new, easier to comprehend layout.

Creating Dynamic Pivot Tables

Creating dynamic pivot tables in SQL Server involves turning rows into columns to simplify data analysis. By using dynamic SQL, executed for example through the sp_executesql system stored procedure, users can handle varying data sets effectively.

Dynamic PIVOT in SQL Server

Dynamic PIVOT allows for flexible pivot table creation. It enables SQL Server users to convert row data into a columnar format without specifying static column names. This is beneficial when dealing with datasets that change over time.

To achieve this, one often employs dynamic SQL. The core tools are the EXECUTE statement and the sp_executesql system stored procedure, both of which run SQL statements stored in variables.

This approach helps pivot tables adjust to new data automatically.

Dynamic PIVOT is particularly useful when the number of columns is unknown ahead of time. Because the column list is generated from the data each time the query runs, the report picks up new values without manual edits. Column names taken from data should be wrapped with QUOTENAME so that unusual values cannot break or inject into the generated statement.
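One possible shape for a dynamic pivot is sketched below. It assumes a temporary table named #Sales with invented data (a temporary table is used because a table variable would not be visible inside the dynamic batch), builds the column list with FOR XML PATH, and runs the statement with sp_executesql.

```sql
-- Invented sample data in a temp table so the dynamic batch can see it.
IF OBJECT_ID('tempdb..#Sales') IS NOT NULL
    DROP TABLE #Sales;

CREATE TABLE #Sales
(
    ProductName NVARCHAR(50)  NOT NULL,
    SalesYear   INT           NOT NULL,
    Amount      DECIMAL(10,2) NOT NULL
);

INSERT INTO #Sales (ProductName, SalesYear, Amount)
VALUES (N'Widget', 2019, 100.00),
       (N'Widget', 2020, 200.00),
       (N'Gadget', 2020,  75.00),
       (N'Gadget', 2021,  90.00);

DECLARE @Columns NVARCHAR(MAX),
        @Sql     NVARCHAR(MAX);

-- Build a list such as [2019],[2020],[2021] from the data itself.
-- QUOTENAME brackets each value so it is always treated as an identifier.
SELECT @Columns = STUFF((
        SELECT N',' + QUOTENAME(CAST(SalesYear AS NVARCHAR(10)))
        FROM (SELECT DISTINCT SalesYear FROM #Sales) AS y
        ORDER BY SalesYear
        FOR XML PATH(''), TYPE
    ).value('.', 'NVARCHAR(MAX)'), 1, 1, N'');

-- Assemble the PIVOT statement around the generated column list.
SET @Sql = N'
SELECT ProductName, ' + @Columns + N'
FROM (SELECT ProductName, SalesYear, Amount FROM #Sales) AS SourceTable
PIVOT (SUM(Amount) FOR SalesYear IN (' + @Columns + N')) AS PivotTable
ORDER BY ProductName;';

EXEC sys.sp_executesql @Sql;

DROP TABLE #Sales;
```

Adding a row for a new year to #Sales adds a column to the result on the next run, with no change to the script.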

Delving into UNPIVOT

Unpivoting is a key process in data transformation, allowing data practitioners to convert columns into rows for easier analysis. It is especially useful when dealing with complex data formats, often simplifying the handling and reporting of data.

Working with the UNPIVOT Operator

The UNPIVOT operator helps convert columns into rows in a dataset. Unlike PIVOT, which turns row values into columns, UNPIVOT does the opposite. It creates a more streamlined data structure that is easier to analyze.

This conversion is essential for data normalization and preparing datasets for further manipulation.

When using the UNPIVOT operator, it’s crucial to specify the columns that will become rows. This involves selecting a column list from which data will rotate into a single column.

Here’s a simple structure of an UNPIVOT query:

SELECT Country, Year, Population

FROM

(SELECT Country, Population_2000, Population_2001, Population_2002

FROM PopStats) AS SourceTable

UNPIVOT

(Population FOR Year IN (Population_2000, Population_2001, Population_2002)) AS UnpivotedTable;

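This query converts population data from multiple year columns into one unpivoted Population column, with a companion Year column that identifies the source. Note that Year holds the original column names, such as Population_2000, so a further step is needed to extract the numeric year, and rows whose value is NULL are omitted from the output. This transformation aids in making the data more comprehensible and ready for sophisticated analysis, such as time-series evaluations or trend identifications.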
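A runnable variant of this query is sketched below. The @PopStats table variable, the country names, and the figures are all made up, and the REPLACE and CAST step for deriving a numeric year is one possible approach rather than the only one.

```sql
-- Made-up sample data; one value is NULL to show that UNPIVOT drops it.
DECLARE @PopStats TABLE
(
    Country         NVARCHAR(50) PRIMARY KEY,
    Population_2000 BIGINT NULL,
    Population_2001 BIGINT NULL,
    Population_2002 BIGINT NULL
);

INSERT INTO @PopStats (Country, Population_2000, Population_2001, Population_2002)
VALUES (N'Atlantis', 1000, 1100, 1200),
       (N'Erewhon',   500, NULL,  520);

SELECT Country,
       -- YearLabel holds the source column name, e.g. Population_2000;
       -- strip the prefix and cast to get a numeric year.
       CAST(REPLACE(YearLabel, N'Population_', N'') AS INT) AS [Year],
       Population
FROM (SELECT Country, Population_2000, Population_2001, Population_2002
      FROM @PopStats) AS SourceTable
UNPIVOT (Population FOR YearLabel
         IN (Population_2000, Population_2001, Population_2002)) AS UnpivotedTable
ORDER BY Country, [Year];
-- Five rows: three for Atlantis, two for Erewhon (its NULL 2001 value is omitted).
```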

Excel and SQL Server Interactions

Excel and SQL Server often work together to analyze and display data. Excel’s PivotTables and SQL Server’s PIVOT feature are powerful tools for summarizing information. Each has its own strengths, catering to different needs and situations.

Comparing Excel PivotTables and SQL Server PIVOT

Excel’s PivotTables allow users to quickly group and analyze data in a user-friendly interface. They enable dragging and dropping fields to see different views of data. Users can apply filters and create charts easily.

Excel is great for users who prefer visual interfaces and need quick insights without coding.

SQL Server’s PIVOT operator, on the other hand, transforms data in a table based on column values. It is efficient for large datasets and can be automated with scripts. It requires SQL knowledge, allowing detailed control over data transformation. It is suitable for users who are familiar with databases and need precise data manipulation.

Implementing Stored Procedures

Stored procedures in SQL Server are essential for automating tasks and improving performance. They allow users to encapsulate logic and reuse code efficiently. In this context, using stored procedures to automate PIVOT operations simplifies complex queries and ensures data is swiftly processed.

Automating PIVOT Operations

Automation of PIVOT operations with stored procedures in SQL Server helps handle repetitive and complex calculations. By using stored procedures, users can define a query that includes the PIVOT operator to transform row data into columns. This is useful when summarizing large datasets.

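To implement, one might create a stored procedure that wraps the PIVOT query. With a static PIVOT, the list of output columns is fixed when the procedure is written, so parameters typically filter the source rows; letting callers choose which columns to pivot requires building the statement with dynamic SQL inside the procedure. Once created, the procedure can be executed repeatedly without rewriting the query, enhancing efficiency.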

This modular approach reduces error chances and ensures consistency in execution.

For instance, the procedure could look something like this:

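CREATE PROCEDURE PivotSalesData

-- Optional filter; NULL returns every product. The length 100 is an assumption.

@ProductName NVARCHAR(100) = NULL

AS

BEGIN

SET NOCOUNT ON;

SELECT ProductName, [2019], [2020]

-- Keep both pivoted years; filtering to a single year would leave one column NULL.

FROM (SELECT ProductName, Year, Sales FROM SalesData

WHERE Year IN (2019, 2020) AND (@ProductName IS NULL OR ProductName = @ProductName)) AS SourceTable

PIVOT (SUM(Sales) FOR Year IN ([2019], [2020])) AS PivotTable;

END;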

Such procedures streamline data handling, making reports easier to generate and manage.

Optimizing PIVOT Queries

Optimizing PIVOT queries in T-SQL involves using advanced techniques to enhance performance. By refining the structure and efficient data grouping, queries can run faster and use fewer resources. This section explores two key methods: derived tables and grouping strategies.

Refining Queries with Derived Tables

Derived tables play a crucial role in enhancing PIVOT query performance. By using derived tables, the query can hand smaller, more precise datasets to the PIVOT operator. This approach can reduce the overall resource demand on the database, although the actual gain depends on the execution plan the optimizer chooses.

For instance, when handling large datasets, it is effective to filter and aggregate data in a derived table first. This intermediate step ensures that only relevant data reaches the PIVOT phase. Optimizing the derived table with indexed columns can further improve speed by allowing the execution plan to efficiently seek data.

Utilizing derived tables ensures that the main query focuses on transformed data, paving the way for quicker operations while maintaining accuracy. This method is especially useful for queries that require complex transformations or multiple aggregations.
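The idea of filtering and aggregating before the PIVOT can be sketched as follows. The @Sales table variable, the Region column, and the data are invented, and whether this form is actually faster on a given system should be confirmed by comparing execution plans.

```sql
-- Invented sample data.
DECLARE @Sales TABLE
(
    SaleID      INT PRIMARY KEY,
    ProductName NVARCHAR(50)  NOT NULL,
    Region      NVARCHAR(20)  NOT NULL,
    SalesYear   INT           NOT NULL,
    Amount      DECIMAL(10,2) NOT NULL
);

INSERT INTO @Sales (SaleID, ProductName, Region, SalesYear, Amount)
VALUES (1, N'Widget', N'West', 2019, 100.00),
       (2, N'Widget', N'West', 2019,  50.00),
       (3, N'Widget', N'West', 2020, 200.00),
       (4, N'Widget', N'East', 2020, 999.00),
       (5, N'Gadget', N'West', 2018,  10.00),
       (6, N'Gadget', N'West', 2020,  75.00);

SELECT ProductName, [2019], [2020]
FROM (
    -- The derived table keeps only the relevant rows and columns
    -- and pre-aggregates them before PIVOT sees the data.
    SELECT ProductName, SalesYear, SUM(Amount) AS Amount
    FROM @Sales
    WHERE Region = N'West'
      AND SalesYear IN (2019, 2020)
    GROUP BY ProductName, SalesYear
) AS SourceTable
PIVOT (SUM(Amount) FOR SalesYear IN ([2019], [2020])) AS PivotTable
ORDER BY ProductName;
-- Gadget | NULL   | 75.00
-- Widget | 150.00 | 200.00
```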

Effective Use of Grouping

Grouping is another vital technique for optimizing PIVOT queries. It involves organizing data so that the PIVOT operation is streamlined. Proper grouping ensures that the data is structured efficiently, reducing computation time when aggregating values.

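The PIVOT operator has no explicit GROUP BY clause. It groups implicitly by every column of the source that is neither the pivoted column nor the aggregated value column, so the source should expose only the columns that correspond to the intended outcome. This sets a clear boundary for data transformation, making the PIVOT operation more straightforward and effective.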

Furthermore, leveraging T-SQL’s built-in functions can simplify complex calculations, enhancing both readability and performance.

Incorporating grouping with indexing strategies can also lead to faster query execution times. By preparing the data in logical groups, developers can ensure that the PIVOT operation is more efficient, leading to better overall query performance.
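The effect of grouping on a PIVOT result can be seen by comparing two queries over the same invented @Sales table variable: one whose source includes an extra SaleID column and one whose source is limited to the columns that matter.

```sql
-- Invented sample data.
DECLARE @Sales TABLE
(
    SaleID      INT PRIMARY KEY,
    ProductName NVARCHAR(50)  NOT NULL,
    SalesYear   INT           NOT NULL,
    Amount      DECIMAL(10,2) NOT NULL
);

INSERT INTO @Sales (SaleID, ProductName, SalesYear, Amount)
VALUES (1, N'Widget', 2019, 100.00),
       (2, N'Widget', 2020, 200.00),
       (3, N'Gadget', 2020,  75.00);

-- SaleID is in the source, so PIVOT groups by (SaleID, ProductName):
-- three rows come back, and Widget is split across two of them.
SELECT ProductName, [2019], [2020]
FROM (SELECT SaleID, ProductName, SalesYear, Amount FROM @Sales) AS SourceTable
PIVOT (SUM(Amount) FOR SalesYear IN ([2019], [2020])) AS PivotTable;

-- Without SaleID, PIVOT groups by ProductName only:
-- two rows come back, one per product.
SELECT ProductName, [2019], [2020]
FROM (SELECT ProductName, SalesYear, Amount FROM @Sales) AS SourceTable
PIVOT (SUM(Amount) FOR SalesYear IN ([2019], [2020])) AS PivotTable;
```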

Roles and Responsibilities of a Data Analyst

Data analysts play a crucial role in interpreting and transforming data. They use tools like PIVOT and UNPIVOT in T-SQL to manage and transform data structures efficiently. These techniques help in reorganizing and presenting data to uncover insights and trends in various datasets.

Data Analyst’s Tasks with PIVOT and UNPIVOT

Data analysts need to manipulate data to find insights. PIVOT allows them to transform row-level data into columns, helping to summarize and compare information efficiently. This method is useful for producing reports where trends over time are analyzed. For instance, sales data can be pivoted to view monthly summaries easily.

UNPIVOT is equally important, serving to convert columns into rows. This technique is employed when data requires restructuring for further analysis or integration with other datasets. By unpivoting, analysts can extend the flexibility of data visualization tools, enhancing the depth of the analysis. This skill is essential for handling diverse data formats and preparing data for complex analytical tasks.

Frequently Asked Questions

This section addresses common questions about using PIVOT and UNPIVOT in T-SQL. It explores syntax, practical examples, and alternative methods to enhance understanding while using these operations effectively in SQL Server.

How do you use the PIVOT clause in a T-SQL statement?

The PIVOT clause is used to rotate rows into columns in a SQL table. Users specify the column values to be transformed into new columns and an aggregation function applied to a remaining data column. This operation simplifies data analysis when viewing metrics over different categorical groups.

What are the differences between PIVOT and UNPIVOT operations in SQL Server?

PIVOT rotates data from rows to columns, creating a more compact, wide table structure. It is useful for summarizing data. UNPIVOT performs the opposite, transforming columns into rows. This is beneficial when needing to normalize table data or prepare it for detailed analysis, making each row represent a unique data point.

What is the syntax for un-pivoting tables using the UNPIVOT statement in T-SQL?

UNPIVOT syntax names three things: a new column that receives the values, a new column that receives the names of the source columns, and the list of source columns to convert, in the form UNPIVOT (ValueColumn FOR NameColumn IN (Column1, Column2)). The result must be given a table alias, and the listed columns must share the same data type.

Can you provide an example of pivoting multiple columns in SQL Server?

In SQL Server, users can pivot multiple columns by first using a CROSS APPLY to unroll multiple measures into rows, each tagged with a combined key, then applying the PIVOT operator to that key. This combination handles different measures for each category, offering a more comprehensive view of related data points.
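A sketch of this technique follows, pivoting both an amount and a quantity per year. The @Sales table variable, the measure names, and the combined key format (year, underscore, measure) are assumptions made for the example.

```sql
-- Invented sample data with two measures per row.
DECLARE @Sales TABLE
(
    ProductName NVARCHAR(50)  NOT NULL,
    SalesYear   INT           NOT NULL,
    Amount      DECIMAL(10,2) NOT NULL,
    Quantity    INT           NOT NULL
);

INSERT INTO @Sales (ProductName, SalesYear, Amount, Quantity)
VALUES (N'Widget', 2019, 100.00, 10),
       (N'Widget', 2020, 200.00, 15),
       (N'Gadget', 2019,  50.00,  5),
       (N'Gadget', 2020,  75.00,  8);

SELECT ProductName,
       [2019_Amount], [2019_Quantity],
       [2020_Amount], [2020_Quantity]
FROM (
    -- CROSS APPLY turns each source row into one row per measure,
    -- labelled with a key such as 2019_Amount.
    SELECT s.ProductName,
           CAST(s.SalesYear AS NVARCHAR(4)) + N'_' + m.MeasureName AS PivotKey,
           m.MeasureValue
    FROM @Sales AS s
    CROSS APPLY (VALUES (N'Amount',   s.Amount),
                        (N'Quantity', CAST(s.Quantity AS DECIMAL(10,2)))
                ) AS m (MeasureName, MeasureValue)
) AS SourceTable
PIVOT (SUM(MeasureValue)
       FOR PivotKey IN ([2019_Amount], [2019_Quantity],
                        [2020_Amount], [2020_Quantity])) AS PivotTable
ORDER BY ProductName;
```

Both measures are cast to a common type because a single pivoted value column can hold only one data type.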

What are some alternative methods to accomplish an UNPIVOT without using the UNPIVOT keyword?

Alternatives to the UNPIVOT keyword include using UNION ALL by combining SELECT statements that manually convert each column into a row. This process, while more manual, provides greater flexibility in controlling how data is transformed and displayed.
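The UNION ALL approach can be sketched as follows, using an invented @PopStats table variable with made-up figures.

```sql
-- Made-up sample data; one value is NULL on purpose.
DECLARE @PopStats TABLE
(
    Country         NVARCHAR(50) PRIMARY KEY,
    Population_2000 BIGINT NULL,
    Population_2001 BIGINT NULL,
    Population_2002 BIGINT NULL
);

INSERT INTO @PopStats (Country, Population_2000, Population_2001, Population_2002)
VALUES (N'Atlantis', 1000, 1100, 1200),
       (N'Erewhon',   500, NULL,  520);

-- One SELECT per source column; the year is supplied as a literal.
SELECT Country, 2000 AS [Year], Population_2000 AS Population FROM @PopStats
UNION ALL
SELECT Country, 2001, Population_2001 FROM @PopStats
UNION ALL
SELECT Country, 2002, Population_2002 FROM @PopStats
ORDER BY Country, [Year];
-- Six rows: unlike UNPIVOT, the NULL value for Erewhon in 2001 is kept.
```

Adding a WHERE clause such as Population_2001 IS NOT NULL to each branch would reproduce the UNPIVOT behavior of dropping NULL values.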

What are the best practices for creating dynamic PIVOT queries in T-SQL?

Best practices for dynamic PIVOT queries include building the column list from the data, wrapping each generated column name with QUOTENAME, and constructing the PIVOT query in an nvarchar string that is run with sp_executesql. Filter values should be passed as sp_executesql parameters rather than concatenated into the string, which guards against SQL injection and lets the dataset’s structure change without edits to the query.