The Fundamentals of Data Analysis

Data analysis serves as a cornerstone for modern decision-making. Understanding it involves grasping key concepts and recognizing its role in shaping data-driven decisions.

Defining Data Analysis

Data analysis is the process of inspecting, cleaning, and modeling data to draw meaningful conclusions. It involves various techniques to transform raw data into useful insights. These insights help organizations make more informed choices.

Analysts often use statistical tools and software to perform these tasks efficiently.

A key part of data analysis is a working knowledge of math and statistics. Courses such as Fundamentals of Data Analysis on Coursera cover these essentials and also introduce the tools used in practice.

Structured programs and exercises help learners grasp these fundamental skills, starting from the basics and progressing to advanced levels.

The Importance of Data Analytics in Decision Making

Data analytics plays a critical role in decision-making processes. By analyzing data, businesses can uncover trends, patterns, and correlations that are not immediately obvious. This approach allows for more accurate and actionable decisions.

Companies rely on these insights to tailor their strategies and optimize outcomes.

Structured learning paths, such as those offered by DataCamp, focus on building the skills needed to make data-driven decisions.

Analysts use data to identify opportunities, assess risks, and improve performance. Effective use of data analytics strengthens strategic planning and operational efficiency. Data-driven decisions are increasingly central to business success, guiding companies toward more precise and targeted solutions.

Data Analytics Tools and Software

Data analytics relies on powerful tools and software to process and visualize data. These tools include statistical programming languages, data visualization software, and comprehensive analytics platforms that offer a range of features for effective data handling and interpretation.

Statistical Programming with R and Python

R and Python are essential in statistical programming for data analytics. They provide robust libraries for data manipulation, analysis, and visualization.

R is particularly popular among statisticians and researchers for its data-centric packages and built-in statistical tools. Its versatility in handling statistical computing and graphics is noteworthy.

Python, on the other hand, is valued for its ease of use and flexibility across different applications. It offers libraries such as pandas for data manipulation and Matplotlib and Seaborn for visualization.

Python’s ability to integrate with web services and other forms of technology makes it a versatile choice for both beginners and experienced data scientists. Its extensive community support and numerous learning resources add to its appeal.

Utilizing Data Visualization Software

In data analytics, visualization software transforms complex data sets into intuitive visual formats. Tableau and Microsoft Power BI are leading tools in this area. They allow users to create interactive and shareable dashboards that provide insights at a glance.

Tableau is known for its user-friendly drag-and-drop interface and its ability to connect to various data sources. It helps users quickly identify trends and outliers through visual reports.

Microsoft Power BI integrates well with other Microsoft products and supports both on-premises and cloud-based data sources. Its robust reporting features and real-time data access make it a preferred choice for businesses looking to leverage visualization in decision-making.

Exploring Data Analytics Platforms

Comprehensive data analytics platforms like SAS offer end-to-end solutions, covering data management, advanced analytics, and reporting.

SAS, a pioneer in analytics software, provides tools for predictive analytics, machine learning, and data mining. Its platform is recognized for handling large data volumes and complex analytics tasks.

Such platforms offer seamless integration of various analytics components, enabling analysts to streamline processes. They support decision-making by offering high-level insights from data.

SAS, in particular, emphasizes flexibility and scalability, making it suitable for organizations of all sizes seeking to enhance their analytics capabilities through sophisticated models and efficient data handling.

Data Analysis Techniques and Processes

Understanding data analysis is all about recognizing the variety of methods and approaches used to interpret data. Key techniques include analyzing past data, predicting future outcomes, and creating models to guide decisions. Each of these techniques serves a unique purpose and employs specific tools to derive meaningful insights from data.

Descriptive and Diagnostic Analytics

Descriptive analytics focuses on summarizing historical data to identify trends and patterns. This technique uses measures such as averages, percentages, and frequencies to provide an overview of what has happened over a certain period. For instance, businesses might rely on sales reports to assess past performance.
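As a minimal sketch of descriptive analytics, the snippet below summarizes a small set of made-up sales records with Python's standard library, producing the kinds of measures the paragraph mentions: an average, percentage shares, and frequencies. The `sales` data and the `describe` helper are hypothetical and exist only for illustration.

```python
from collections import Counter
from statistics import mean

# Hypothetical historical sales records: (month, region, amount)
sales = [
    ("Jan", "North", 120.0),
    ("Jan", "South", 80.0),
    ("Feb", "North", 150.0),
    ("Feb", "South", 50.0),
    ("Mar", "North", 100.0),
]

def describe(records):
    """Hypothetical helper: summarize past sales with an average,
    per-region revenue shares, and per-region transaction counts."""
    amounts = [amount for _, _, amount in records]
    total = sum(amounts)
    by_region = {}
    for _, region, amount in records:
        by_region[region] = by_region.get(region, 0.0) + amount
    return {
        "average_sale": mean(amounts),
        # Percentage of total revenue contributed by each region
        "region_share_pct": {r: round(100 * v / total, 1) for r, v in by_region.items()},
        # Number of transactions recorded in each region
        "region_counts": Counter(region for _, region, _ in records),
    }

summary = describe(sales)
print(summary)
```

A real sales report would group by more dimensions (product, channel, week), but every such summary reduces to the same operations: aggregate, divide by a total, and count.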

Diagnostic analytics delves deeper, aiming to uncover the reasons behind past outcomes. Using techniques such as drill-down analysis, correlation analysis, and statistical testing, organizations can pinpoint the factors that led to specific events. This approach is crucial for understanding what went right or wrong and identifying areas for improvement.
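Diagnostic work often starts by checking which candidate factors move together with an outcome. The sketch below computes a Pearson correlation coefficient by hand on hypothetical weekly figures (discount offered versus units sold). The `pearson` function and the data are illustrative assumptions, and a strong correlation only flags a relationship worth investigating; it does not by itself establish cause.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Covariance and variances, all without the 1/(n-1) factor (it cancels out)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)

# Hypothetical weekly data: discount offered (%) and units sold
discount = [0, 5, 10, 15, 20]
units = [100, 110, 125, 130, 150]

r = pearson(discount, units)
print(f"r = {r:.3f}")
```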

Predictive Analytics and Prescriptive Analytics

Predictive analytics uses historical data to forecast future events. Tools such as machine learning algorithms analyze current and past data to predict upcoming trends.
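The simplest predictive model is a straight-line trend. The sketch below fits one by ordinary least squares to hypothetical monthly revenue figures and extrapolates one month ahead. It stands in for the richer machine learning models mentioned above and assumes the trend stays linear; the `fit_line` name and the data are illustrative only.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = intercept + slope * x."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope

# Hypothetical monthly revenue (in thousands) for months 1..6
months = [1, 2, 3, 4, 5, 6]
revenue = [10.0, 12.0, 14.5, 15.5, 18.0, 20.0]

intercept, slope = fit_line(months, revenue)
# Forecast: extend the fitted trend to month 7 (assumes the linear trend continues)
forecast_month_7 = intercept + slope * 7
print(round(forecast_month_7, 2))
```

Before trusting such a forecast, one would normally hold out the most recent months, predict them from the earlier ones, and compare.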

Prescriptive analytics goes a step further by recommending actions to achieve desired outcomes. This technique uses simulation and optimization to suggest actions that can take advantage of predicted trends. These recommendations help businesses make data-driven decisions that align with their strategic goals.
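To show the step from prediction to recommendation, the sketch below takes a hypothetical demand model (standing in for the output of a predictive step) and searches a grid of candidate prices for the one with the highest expected profit, subject to a production-capacity constraint. A plain grid search is used here as the simplest form of optimization; real prescriptive tools often use linear programming or simulation instead. The demand formula, costs, and function names are all assumptions.

```python
def predicted_demand(price):
    """Hypothetical demand model: units expected to sell at a given price."""
    return max(0.0, 1000 - 40 * price)

def recommend_price(candidates, unit_cost, capacity):
    """Grid search: return (price, profit) for the most profitable candidate
    price whose predicted demand does not exceed production capacity."""
    best = None
    for price in candidates:
        units = predicted_demand(price)
        if units > capacity:
            continue  # infeasible: demand exceeds what can be supplied
        profit = (price - unit_cost) * units
        if best is None or profit > best[1]:
            best = (price, profit)
    return best

prices = [p / 2 for p in range(10, 51)]  # candidate prices 5.0 to 25.0 in 0.5 steps
print(recommend_price(prices, unit_cost=5.0, capacity=300))
```

With a tight capacity the constraint drives the recommendation to a higher price than the unconstrained optimum, which is exactly the kind of trade-off prescriptive analysis is meant to surface.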

Data Mining and Data Modeling

Data mining involves extracting valuable information from large datasets. It seeks to discover patterns and relationships that are not immediately obvious. Techniques such as clustering, association, and classification help in unearthing insights that can drive strategic decisions.
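Clustering can be illustrated with k-means, one common algorithm among many. The sketch below groups hypothetical customers by (visits per month, average basket size). To keep the result deterministic it seeds the centroids with the first k points, a simplification; production implementations typically use smarter seeding such as k-means++ and several restarts.

```python
from math import dist

def kmeans(points, k, iterations=20):
    """Plain k-means: assign each point to its nearest centroid, then move
    each centroid to the mean of its assigned points. Seeds with the first
    k points (a simplifying assumption) so the output is deterministic."""
    centroids = [tuple(p) for p in points[:k]]
    clusters = []
    for _ in range(iterations):
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: dist(p, centroids[i]))
            clusters[nearest].append(p)
        new_centroids = []
        for i, members in enumerate(clusters):
            if not members:  # keep an empty cluster's centroid where it was
                new_centroids.append(centroids[i])
                continue
            new_centroids.append(tuple(sum(c) / len(members) for c in zip(*members)))
        if new_centroids == centroids:  # assignments stopped changing
            break
        centroids = new_centroids
    return centroids, clusters

# Hypothetical customers: (visits per month, average basket size)
points = [(1, 20), (10, 95), (2, 25), (1.5, 22), (9, 100), (11, 90)]
centroids, clusters = kmeans(points, k=2)
print(centroids)
```

The two resulting groups (occasional small-basket shoppers and frequent large-basket shoppers) are the kind of non-obvious segmentation the paragraph describes, although on real data the features would usually be scaled first.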

Data modeling involves creating abstract models to represent the structure and organization of data. These models serve as blueprints that guide how data is collected and stored.

In the data analysis process, data modeling ensures that data is structured in a way that supports efficient analysis and meaningful interpretation. This technique is essential for maintaining data integrity and facilitating accurate analysis.

Data Collection and Management

Data collection and management are crucial for gleaning insights and ensuring data accuracy. This section focuses on effective methods for gathering data, ensuring its quality by cleaning it, and implementing strategies for managing data efficiently.

Effective Data Collection Methods

Effective data collection is vital for generating reliable results. The right method depends on the project's goals and available resources.

Surveys and questionnaires can be used to gather quantitative data. They are practical tools for reaching large audiences quickly. For qualitative data, interviews and focus groups offer deeper insights into individual perspectives.

Tools like online forms and mobile apps have made data gathering more efficient. The choice of method should align with the specific needs and constraints of the project, balancing between qualitative and quantitative techniques.

Ensuring Data Quality and Cleaning

Data quality is ensured through careful cleaning processes. When data is collected, it often contains errors, such as duplicates or missing values. Detecting and correcting these errors is essential.

Data cleaning involves steps like removing duplicates, correcting anomalies, and adjusting for inconsistencies in datasets.
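The cleaning steps above can be sketched in a few lines of standard-library Python. The record fields (`name`, `city`, `age`) and the plausible age range are hypothetical, and dropping incomplete rows is only one policy; imputing missing values is a common alternative.

```python
def clean(records):
    """Hypothetical cleaner: normalize inconsistent text, drop rows with a
    missing, malformed, or implausible age, and remove duplicates."""
    seen = set()
    cleaned = []
    for row in records:
        # Fix inconsistencies: stray whitespace and mixed letter case
        name = row.get("name", "").strip().title()
        city = row.get("city", "").strip().title()
        raw_age = str(row.get("age", "")).strip()
        try:
            age = int(raw_age)
        except ValueError:
            continue  # missing or malformed value: drop the row
        if not 0 <= age <= 120:
            continue  # implausible value treated as an anomaly (assumed range)
        key = (name, city, age)
        if key in seen:
            continue  # duplicate once formatting is normalized
        seen.add(key)
        cleaned.append({"name": name, "city": city, "age": age})
    return cleaned

raw = [
    {"name": " alice ", "city": "paris", "age": "34"},
    {"name": "Alice", "city": "Paris ", "age": 34},   # duplicate after normalizing
    {"name": "bob", "city": "Lyon", "age": ""},       # missing age
    {"name": "Carol", "city": "nice", "age": "230"},  # implausible age
    {"name": "dave", "city": "LYON", "age": "41"},
]
print(clean(raw))
```

Note that normalization runs before deduplication; otherwise " alice " and "Alice" would be kept as two different people.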

Tools for data cleaning include software applications that automate cleaning tasks. Ensuring data quality prevents analysis errors and improves the accuracy of results. With high-quality data, organizations can trust their analytical insights to improve decision-making.

Data Management Strategies

Data management involves organizing and storing data effectively to maintain its integrity over time.

Strategies include using structured databases to manage large datasets efficiently. These databases help in organizing data logically and making retrieval easy.

Implementing clear policies for data access and security is crucial. This helps guard against data breaches and ensures regulatory compliance.

Consistent data management strategies support smooth operations and reliable data analysis, laying the foundation for robust data governance within organizations.

Mathematical Foundations for Data Analysis

Mathematics is a vital part of data analysis, providing the tools to interpret complex data sets. Key components include probability and statistical analysis as well as practical applications of math in data interpretation.

Probability and Statistical Analysis

Probability and statistics are fundamental in data analysis. Probability provides a framework for quantifying uncertainty about events, which is essential for making informed decisions.

Through probability models, data scientists estimate the likelihood of outcomes. This is crucial in risk assessment and decision-making.

Statistical analysis involves collecting, reviewing, and interpreting data. It helps uncover patterns and trends.

Descriptive statistics, like mean and median, summarize data. Inferential statistics use sample data to make predictions about a larger population. Both are important for understanding and communicating data insights.
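The difference between the two can be seen on one small sample. In the sketch below, the mean and median describe the sample itself, while the confidence interval is an inference about the wider population it was drawn from. The checkout-time data are hypothetical, and the interval uses a normal approximation for simplicity; for a sample this small a t-based interval would be the more appropriate choice.

```python
from statistics import NormalDist, mean, median, stdev

# Hypothetical sample: checkout times (seconds) for 10 randomly chosen customers
sample = [42, 38, 51, 45, 39, 60, 41, 47, 44, 43]

# Descriptive statistics: summarize the sample itself
sample_mean = mean(sample)
sample_median = median(sample)

# Inferential statistics: approximate 95% confidence interval for the
# population mean (normal approximation, an assumption made for brevity)
z = NormalDist().inv_cdf(0.975)  # roughly 1.96
margin = z * stdev(sample) / len(sample) ** 0.5
ci = (sample_mean - margin, sample_mean + margin)
print(sample_mean, sample_median, ci)
```

The median sits below the mean here because one slow checkout (60 seconds) pulls the mean upward, a small example of why analysts often report both.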

Applying Math to Analyze Data

Mathematical techniques are applied to analyze and interpret data effectively. Algebra and calculus are often used to develop models. These models help in identifying relationships between variables and making predictions.

For instance, linear algebra is important for handling data in machine learning.

Mathematical notation is consistent across many fields of data science. This consistency aids in communication and understanding.

Techniques like matrix algebra and calculus form the backbone of many algorithms. They help solve complex problems in data mining and machine learning, and with these methods analysts can extract meaningful insights from large data sets.
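A standard illustration of calculus and matrix algebra working together is the least-squares fit behind linear regression. The notation here is introduced for this example, not taken from the text: $X$ is a design matrix with one row per observation, $y$ is the vector of observed targets, and $\beta$ is the vector of model coefficients.

```latex
\begin{aligned}
L(\beta) &= (y - X\beta)^\top (y - X\beta) \\
\nabla_\beta L(\beta) &= -2X^\top y + 2X^\top X\beta \\
\nabla_\beta L(\hat\beta) = 0 &\;\Longrightarrow\; X^\top X\,\hat\beta = X^\top y \\
\hat\beta &= (X^\top X)^{-1} X^\top y \qquad \text{(when } X^\top X \text{ is invertible)}
\end{aligned}
```

Calculus supplies the gradient and the condition for a minimum; linear algebra solves the resulting system. In practice, numerical libraries usually solve the system with a QR or similar decomposition rather than forming the inverse explicitly, and very large problems are often handled with iterative methods such as gradient descent.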

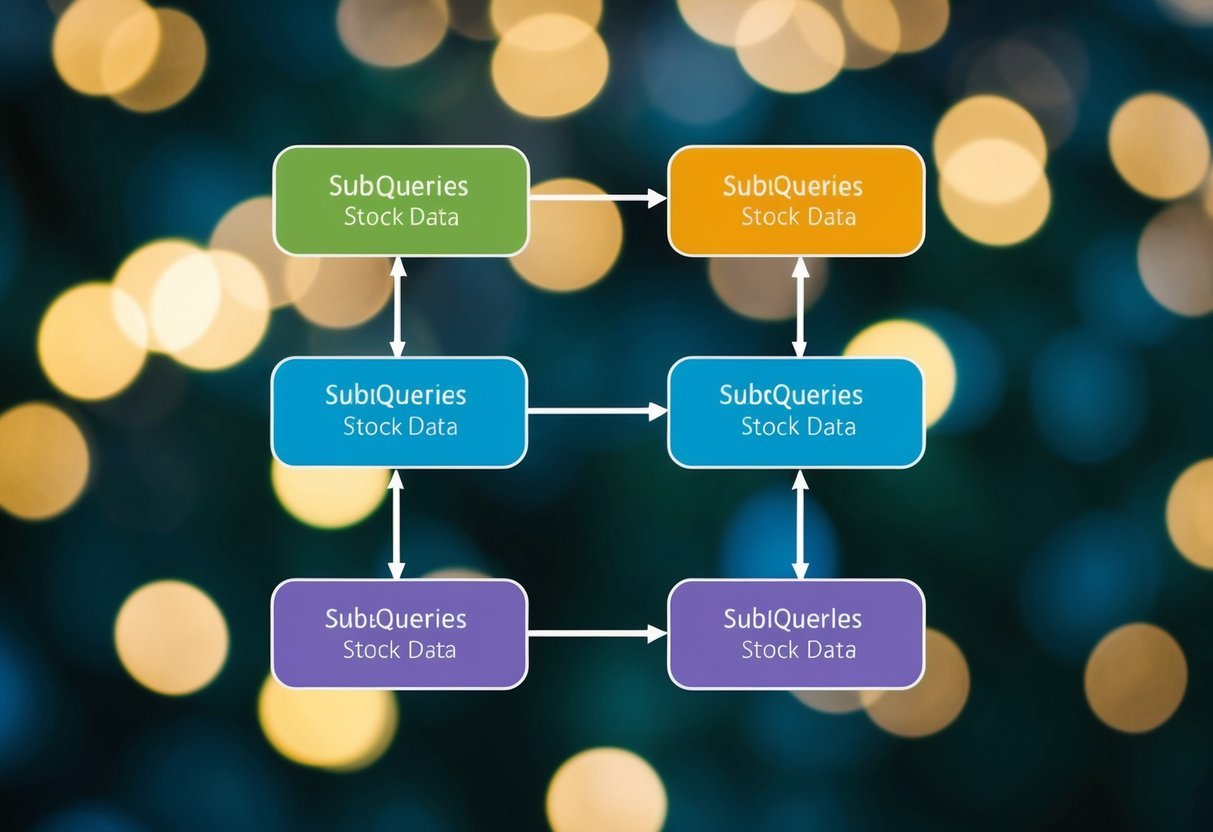

The Role of SQL in Data Analysis

SQL is a vital tool in data analysis, used with relational databases to query and manage large datasets. Mastery of SQL enables analysts to retrieve and manipulate data efficiently and to draw key insights from complex data structures.

Mastering Structured Query Language

Structured Query Language (SQL) is the foundation for querying and managing databases. It acts as a bridge between data analysts and the data stored in databases.

Understanding basic commands like SELECT, INSERT, UPDATE, and DELETE is crucial. These commands allow analysts to access and modify data.

Complex queries often involve combining tables using JOIN operations, which is a skill required to extract meaningful insights from data spread across multiple tables.

Learning about filtering data with WHERE clauses and sorting results with ORDER BY enhances the ability to retrieve specific data sets effectively.

SQL also supports aggregate functions such as SUM, AVG, and COUNT, which help summarize data. For those pursuing a deeper dive, window functions and subqueries are advanced techniques useful for complex data analysis tasks.
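The clauses above can be tried without installing a database server by using Python's built-in `sqlite3` module, which runs SQL against an in-memory SQLite database. The `orders` table and its rows are hypothetical; the query itself uses standard SQL that most relational databases accept, although dialects differ in details.

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # throwaway in-memory database
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, region TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO orders (region, amount) VALUES (?, ?)",  # ? placeholders avoid SQL injection
    [("North", 120.0), ("South", 80.0), ("North", 150.0), ("South", 50.0), ("West", 30.0)],
)

# WHERE filters rows, GROUP BY with aggregates summarizes them, ORDER BY sorts the result
query = """
    SELECT region, COUNT(*) AS n_orders, SUM(amount) AS total, AVG(amount) AS avg_amount
    FROM orders
    WHERE amount >= 50
    GROUP BY region
    ORDER BY total DESC
"""
rows = conn.execute(query).fetchall()
for row in rows:
    print(row)
conn.close()
```

The West region disappears from the result because its only order fails the WHERE condition before grouping takes place.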

Working with Relational Databases

Relational databases are structured collections of data stored in tables. They form the backbone of most data analysis processes due to their efficiency in organizing and retrieving information.

Using SQL, analysts can manipulate relational databases by creating, altering, and maintaining these tables.

Linking tables through foreign keys and enforcing the relationships between them improves data integrity and consistency.

Understanding the structure and schema of a database is critical to navigating and extracting data efficiently. SQL commands like CREATE, ALTER, and DROP are essential for managing database structures.
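The schema-management commands, foreign keys, and JOINs described in this section can be sketched together, again using Python's built-in `sqlite3` module as a convenient host. The `customers` and `orders` tables are hypothetical. Note that SQLite enforces foreign keys only after `PRAGMA foreign_keys = ON`; other databases enable enforcement by default.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # SQLite-specific: turn on FK enforcement

# CREATE: two related tables; orders.customer_id references customers.id
conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT NOT NULL)")
conn.execute("""
    CREATE TABLE orders (
        id INTEGER PRIMARY KEY,
        customer_id INTEGER NOT NULL REFERENCES customers(id),
        amount REAL NOT NULL
    )
""")
conn.executemany("INSERT INTO customers (id, name) VALUES (?, ?)", [(1, "Ada"), (2, "Grace")])
conn.executemany("INSERT INTO orders (customer_id, amount) VALUES (?, ?)",
                 [(1, 40.0), (1, 60.0), (2, 25.0)])

# The foreign key rejects an order that points to a nonexistent customer
try:
    conn.execute("INSERT INTO orders (customer_id, amount) VALUES (99, 10.0)")
    fk_enforced = False
except sqlite3.IntegrityError:
    fk_enforced = True

# JOIN: combine both tables to get total spend per customer name
per_customer = conn.execute("""
    SELECT c.name, SUM(o.amount) AS total
    FROM customers AS c
    JOIN orders AS o ON o.customer_id = c.id
    GROUP BY c.name
    ORDER BY c.name
""").fetchall()

# ALTER: add a column to an existing table; DROP: remove a table entirely
conn.execute("ALTER TABLE customers ADD COLUMN email TEXT")
conn.execute("CREATE TABLE scratch (x INTEGER)")
conn.execute("DROP TABLE scratch")

# Inspect the resulting schema
columns = [row[1] for row in conn.execute("PRAGMA table_info(customers)")]
tables = [row[0] for row in conn.execute(
    "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name")]
print(fk_enforced, per_customer, columns, tables)
conn.close()
```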

Furthermore, learning to write optimized queries ensures that large datasets are handled swiftly, reducing processing time. This skill is particularly important as datasets grow in size and complexity.

Advancing Analytics with Machine Learning

Machine learning plays a vital role in enhancing data analysis, allowing businesses to make informed decisions and predictions. By employing advanced techniques, companies can process vast amounts of data efficiently, improving their strategic approaches.

Machine Learning in Data Science

Machine learning is an essential component of data science. It involves using algorithms to analyze data, find patterns, and make predictions.

For businesses, this means refining their data strategies by automating processes and improving accuracy.

One important aspect of machine learning in data science is its ability to handle large datasets. This capability leads to more precise outcomes, which aid in developing targeted solutions.

Machine learning helps analysts sort through complex data to identify trends and patterns that might otherwise go unnoticed.

Besides pattern recognition, machine learning aids in anomaly detection. This can be particularly useful in sectors like finance, where identifying irregularities is crucial.
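Production anomaly detection typically relies on trained models such as isolation forests or autoencoders. As a much simpler stand-in that shows the underlying idea, the sketch below flags transactions lying far from the mean in standard-deviation units (a z-score rule). The transaction amounts, the `flag_anomalies` name, and the thresholds are hypothetical.

```python
from statistics import mean, stdev

def flag_anomalies(amounts, threshold=3.0):
    """Return indices of values more than `threshold` standard deviations
    from the mean. A simple statistical baseline, not a trained model."""
    mu = mean(amounts)
    sigma = stdev(amounts)
    if sigma == 0:
        return []  # all values identical: nothing stands out
    return [i for i, a in enumerate(amounts) if abs(a - mu) / sigma > threshold]

# Hypothetical card transactions for one customer; the last one is unusually large
transactions = [25.0, 30.0, 27.5, 22.0, 31.0, 26.0, 29.0, 24.5, 28.0, 950.0]
print(flag_anomalies(transactions, threshold=2.5))
```

The example uses a threshold of 2.5 rather than the conventional 3 for a reason: with only ten points, a single outlier inflates the standard deviation so much that its z-score cannot exceed about 2.85, so a threshold of 3 would miss it. Robust variants based on the median and median absolute deviation avoid this masking effect.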

The use of machine learning enhances data scientists’ ability to gain actionable insights efficiently.

Applying Predictive Models in Business

Incorporating predictive models is crucial for businesses aiming to stay competitive. These models use machine learning to forecast future outcomes based on current and historical data.

Such forecasts help businesses plan better by anticipating events and reacting proactively.

Predictive models allow companies to optimize operations by understanding customer behavior. For instance, marketing strategies can be improved by analyzing purchasing patterns.

Machine learning also aids in risk management. By predicting potential risks and outcomes, businesses can make strategic decisions that mitigate these risks.

The ability to adapt quickly to changing market conditions is enhanced through these predictive insights, making machine learning indispensable in modern business practice.

Building a Career in Data Analytics

Data analytics is a rapidly growing field with diverse job opportunities and clear pathways to success. Understanding the job landscape, educational requirements, and necessary skills can set individuals on the right path toward a successful career.

The Evolving Landscape of Data Analytics Jobs

The demand for data analysts and data scientists is increasing, driven by the need for companies to make data-informed decisions. Employment in data science and analytics is expected to grow significantly, making it a promising area for career advancement.

Many industries are actively seeking professionals with data skills, including finance, healthcare, and technology. Data analytics roles offer various opportunities, from entry-level positions to advanced roles like senior data scientist, providing a range of career growth options.

Educational Paths and Certifications

A solid educational foundation is crucial for a career in data analytics. Most entry-level positions require a bachelor’s degree in fields such as mathematics, economics, or computer science.

For those seeking advanced roles or higher salaries, a master’s degree in data science or business analytics is beneficial. Certifications, like the Google Data Analytics Professional Certificate, offer practical skills through online courses and can enhance job prospects.

These courses teach essential data analysis techniques and tools, making them valuable for both beginners and experienced professionals.

Skills and Competencies for Data Analysts

Proficiency in data analysis tools is vital for data analysts. Key skills include SQL, Python, and Microsoft Excel, which are widely used in the industry.

Additionally, strong analytical and problem-solving abilities are crucial for extracting and interpreting meaningful insights from data.

Familiarity with data visualization tools such as Tableau and Microsoft Power BI can also be advantageous, enhancing the ability to communicate complex data findings effectively.

Continuous learning and upskilling are important in staying current with industry trends and technological advancements.

The Business Intelligence Ecosystem

The Business Intelligence (BI) ecosystem involves a structured approach to interpreting data and making informed decisions. It employs specific roles and tools to extract, transform, and analyze data, providing valuable insights for businesses.

Roles of Business Analyst and BI Analyst

A Business Analyst focuses on understanding business needs and recommending solutions. They work closely with stakeholders to gather requirements and ensure alignment with business goals.

They may perform tasks like process modeling and requirement analysis.

In contrast, a Business Intelligence Analyst deals with data interpretation. They convert data into reports and dashboards, helping organizations make data-driven decisions.

This role often involves using BI tools to visualize data trends and patterns.

Both roles are critical in the BI ecosystem, yet they differ in focus. While the business analyst looks at broader business strategies, the BI analyst zeroes in on data analytics to provide actionable insights.

Business Intelligence Tools and Techniques

BI tools support the analysis and visualization of data, making complex data simpler to understand. Common tools include Power BI, Tableau, and Microsoft Excel.

These tools help manipulate large datasets, build interactive dashboards, and create data models.

Techniques used in BI include data mining, which involves exploring large datasets to find patterns, and ETL (Extract, Transform, Load) processes that prepare data for analysis.
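An ETL process can be sketched end to end with the standard library: extract rows from a CSV source, transform them (cast types, trim whitespace, drop incomplete records), and load them into a database table ready for analysis. The CSV content, column names, and table schema are hypothetical, and an in-memory string stands in for a real file. Dedicated ETL tools add scheduling, incremental loads, and error reporting on top of these three steps.

```python
import csv
import io
import sqlite3

# Hypothetical raw export; note the stray space and the missing amount
RAW_CSV = """order_id,order_date,amount_usd
1,2024-01-05, 120.50
2,2024-01-05,
3,2024-01-06,80
"""

def extract(text):
    """Extract: parse CSV text into a list of dicts keyed by column name."""
    return list(csv.DictReader(io.StringIO(text)))

def transform(rows):
    """Transform: cast types, trim whitespace, drop rows with no amount."""
    out = []
    for r in rows:
        amount = r["amount_usd"].strip()
        if not amount:
            continue
        out.append((int(r["order_id"]), r["order_date"].strip(), float(amount)))
    return out

def load(rows, conn):
    """Load: write the cleaned rows into an analysis-ready table."""
    conn.execute("CREATE TABLE IF NOT EXISTS orders "
                 "(order_id INTEGER PRIMARY KEY, order_date TEXT, amount_usd REAL)")
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()

conn = sqlite3.connect(":memory:")
load(transform(extract(RAW_CSV)), conn)
daily = conn.execute(
    "SELECT order_date, SUM(amount_usd) FROM orders GROUP BY order_date ORDER BY order_date"
).fetchall()
print(daily)
```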

Real-time analytics is another important aspect, enabling businesses to respond quickly to operational changes.

By utilizing these tools and techniques, organizations can gain significant competitive advantages, streamline operations, and improve decision-making processes.

Developing Technical and Soft Skills

Data analysts need a strong set of skills to succeed. This includes mastering both technical knowledge and problem-solving abilities, while also being able to communicate their findings through data storytelling.

Technical Knowledge and Programming Languages

Data professionals must be proficient in several key areas. Technical knowledge is critical, including understanding math and statistics.

Familiarity with tools and techniques like data visualization helps in interpreting complex datasets.

Programming languages are crucial for data manipulation and analysis. Languages such as Python and R help in data processing and analysis owing to their extensive libraries.

SQL is another essential language, allowing analysts to interact with databases efficiently.

Problem-Solving and Data Storytelling

Effective problem-solving is a core skill for analysts. They must be adept at examining data sets to identify trends and patterns.

This requires critical thinking and the ability to ask insightful questions, which is fundamental in deriving meaningful conclusions.

Data storytelling is how analysts communicate their insights. It involves using visualization techniques to present data in a compelling narrative.

This helps stakeholders understand the results, making informed decisions easier. Effective storytelling includes clear visuals, such as charts and graphs, that highlight key findings.

Big Data Technologies in Data Analysis

Big data technologies have transformed data analysis by offering powerful tools and methods to process large datasets. These technologies enable the handling of complex information efficiently, providing valuable insights.

Navigating Big Data with Hadoop

Hadoop is a fundamental technology in big data analysis, designed to store and process vast amounts of data across distributed systems. It uses a network of computers to solve computational problems involving large datasets.

Its core components are the Hadoop Distributed File System (HDFS) for storage, MapReduce for processing data, and, since Hadoop 2, YARN for cluster resource management.
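The MapReduce model can be illustrated with its canonical example, word counting. The sketch below simulates the map, shuffle, and reduce stages in a single Python process; it is not Hadoop's Java API, and on a real cluster the framework would run many mappers and reducers in parallel on data blocks read from HDFS. The input strings are hypothetical.

```python
from collections import defaultdict
from itertools import chain

def map_phase(document):
    """Mapper: emit a (word, 1) pair for every word in one input split."""
    return [(word.lower(), 1) for word in document.split()]

def shuffle_phase(pairs):
    """Shuffle: group emitted values by key, as the framework does
    between the map and reduce stages."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups

def reduce_phase(key, values):
    """Reducer: combine all values collected for one key."""
    return key, sum(values)

# Each string stands in for a block of a file stored on a different node
splits = ["big data needs big tools", "data tools scale out", "Big clusters"]

mapped = chain.from_iterable(map_phase(s) for s in splits)  # parallel on a real cluster
grouped = shuffle_phase(mapped)
counts = dict(reduce_phase(k, v) for k, v in grouped.items())
print(counts)
```

Because each mapper sees only its own split and each reducer sees only one key's values, both stages can be spread across machines, which is what lets the model scale out.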

Hadoop allows businesses to analyze structured and unstructured data efficiently. Because it scales horizontally, a cluster can grow by adding nodes as data needs increase.

This makes it a flexible option for organizations that must process diverse types of data without significant infrastructure changes. Hadoop’s cost-effectiveness also appeals to companies looking to maximize return on investment in data analytics.

Data Engineering and Its Significance

Data engineering is crucial for transforming raw data into a usable form for analysis. It involves designing systems to collect, store, and process data efficiently.

This field ensures that data pipelines are reliable and automated, which is essential for accurate analysis.

A key part of data engineering is the creation of data architectures that support efficient data flow. It includes tasks like cleaning data, ensuring quality, and integrating diverse data sources.

The work involves tools and techniques to handle both real-time and batch processing. Effective data engineering results in more robust and insightful data analysis, driving better decision-making in organizations.

Frequently Asked Questions

This section addresses common inquiries about data analysis, covering essential steps, various techniques, skills needed, and career opportunities. It also explores aspects like salary expectations and applications in academic research.

What are the essential steps involved in data analysis?

Data analysis involves multiple steps including data collection, cleaning, transformation, modeling, and interpretation.

The process begins with gathering relevant data, followed by cleaning to remove or correct inaccurate records. Data is then transformed and modeled for analysis, and the results are interpreted to generate insights that support decision-making.

What are the different types of data analysis techniques?

There are several techniques used in data analysis. These include descriptive analysis, which summarizes data, and predictive analysis, which forecasts future outcomes.

Other types include diagnostic analysis, which investigates reasons for past events, and prescriptive analysis, which suggests actions based on predictions.

How does one begin a career in data analytics with no prior experience?

Starting a career in data analytics without prior experience involves learning key tools and concepts. Enrolling in online courses or boot camps can be beneficial.

Building a portfolio through projects and internships is essential. Networking with professionals and obtaining certifications can also enhance job prospects in this field.

What fundamental skills are necessary for data analysis?

Data analysts should possess skills in statistical analysis, data visualization, and programming languages such as Python or R.

Proficiency in data tools like Excel, SQL, and Tableau is also important. Critical thinking and problem-solving abilities are crucial for interpreting data effectively.

What is the typical salary range for a Data Analyst?

Data analyst salaries vary based on factors like location, experience, and industry.

In general, a data analyst can expect to earn between $50,000 and $90,000 annually. Those with advanced skills or in senior roles may earn higher salaries, especially in tech-centric regions or industries.

How do you effectively analyze data in academic research?

Effective data analysis in academic research involves selecting appropriate statistical or analytical methods to address research questions.

Researchers must ensure data accuracy and integrity.

Utilizing data modeling techniques like regression analysis, clustering, or classification can help uncover trends and relationships.