Understanding Naïve Bayes Classification

Naïve Bayes classification is a probabilistic method for making predictions. It is widely used in tasks like text classification, where it applies Bayes’ Theorem to estimate how likely each class is given the observed features.

Foundational Concepts of Naïve Bayes

Naïve Bayes is a simple yet effective classification approach. Although it assumes that features are independent of one another given the class, an assumption that rarely holds exactly, it often performs well in practice.

This classifier calculates the probability of different possible outcomes based on prior data. The core idea is to use the probabilities of each feature independently contributing to the final classification label. This simplicity makes it easy to apply to large datasets with many variables.

The Bayes’ Theorem in NLP

Bayes’ Theorem is essential in natural language processing (NLP) when using the Naïve Bayes algorithm.

It helps in calculating the probability of a document or text belonging to a certain category. By using the theorem, the Naïve Bayes classifier evaluates how likely a text is to fit into different categories based on word frequency or presence.

This approach allows for accurate and quick text classification, making it a popular choice for tasks like spam detection and sentiment analysis.
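The calculation described above can be written compactly. As a sketch in standard notation (the symbols c for a category and w_1, ..., w_n for the words of a document are introduced here for illustration), Bayes’ Theorem combined with the independence assumption gives:

```latex
P(c \mid w_1, \dots, w_n)
  = \frac{P(c)\, P(w_1, \dots, w_n \mid c)}{P(w_1, \dots, w_n)}
  \;\propto\; P(c) \prod_{i=1}^{n} P(w_i \mid c),
\qquad
\hat{c} = \arg\max_{c} \Big[ \log P(c) + \sum_{i=1}^{n} \log P(w_i \mid c) \Big]
```

The denominator is the same for every category, so it can be dropped when categories are compared. Logarithms are typically used in practice because multiplying many small probabilities can underflow.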

Advantages of Using Naïve Bayes in Text Classification

Naïve Bayes is particularly advantageous for text classification due to its ability to handle high-dimensional data efficiently.

It is often used for tasks such as email filtering because it requires fewer computational resources. The algorithm is fast and effective, even with small datasets.

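It can also tolerate missing features, since each feature contributes its own term to the probability and a missing one can simply be left out of the calculation. It applies to both binary and multi-class classification problems, and its straightforward design makes it adaptable to various NLP tasks.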

Natural Language Processing Essentials

Natural Language Processing (NLP) is at the intersection of linguistics, computer science, and artificial intelligence. It focuses on enabling computers to understand and respond to text and speech like humans do.

Machine learning plays a pivotal role in enhancing NLP tasks by improving accuracy and efficiency in processing text data, which forms the backbone of NLP processes.

Core NLP Techniques

NLP involves various techniques to enable machines to understand human language effectively. Tokenization is the process of breaking text into smaller pieces like words or phrases.

Part-of-speech tagging identifies the grammatical role of words in a sentence.

Named entity recognition (NER) helps in locating and classifying names, locations, and other entities in text.

Sentiment analysis determines the emotional tone behind texts, crucial for understanding customer feedback and social media. These techniques collectively help in transforming raw text into machine-readable formats.

The Role of Machine Learning in NLP

Machine learning is integral to NLP. Algorithms learn from patterns and relationships within large text corpora to perform tasks like translation, classification, and prediction.

Models such as Naïve Bayes and Logistic Regression provide the foundation for understanding classification tasks in NLP, enabling applications like spam detection and topic categorization.

More advanced models, like neural networks, enhance the ability to capture context and improve outcomes in language understanding and generation. Machine learning drives constant improvements, making NLP systems more robust and adaptable.

Text Data: The Foundation of NLP

Text data forms the essential core of NLP. A collection of texts assembled for analysis is known as a corpus, and it can be drawn from books, articles, social media, and more.

Preprocessing steps, including removing stop words and normalizing text, prepare this data for analysis.

Effective text processing is critical for building accurate NLP models, as the quality and quantity of text data significantly affect performance.

Collecting extensive and diverse text data ensures that NLP systems can handle varied linguistic expressions and improve the overall capability of the technology in real-world applications.

Preparing Data for Naïve Bayes Classification

To effectively use Naïve Bayes for text classification, it’s crucial to prepare the data meticulously. This involves preprocessing the text, managing it using tools like NLTK and Pandas, and carefully selecting the right features.

Data Preprocessing Steps

Preprocessing text data begins with cleaning and organizing it, setting the stage for effective classification.

First, unnecessary symbols and punctuation are removed. This step is important to reduce noise.

Next, tokenization involves splitting the text into individual words or tokens, which helps in handling the data at a finer level.

Stop-word removal is another critical process. It involves eliminating common words like “the” and “and” that don’t add much meaning to the text.

Stemming and lemmatization follow, where words are reduced to their base or root form, assisting in standardizing the data while retaining essential context.
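The steps above can be sketched with the Python standard library alone. This is a minimal illustration, not a production pipeline: the stop-word list and the `crude_stem` helper are hypothetical stand-ins invented here, and a real project would normally use a proper stemmer or lemmatizer.

```python
import re

# Tiny illustrative stop-word list; real projects use a fuller list.
STOP_WORDS = {"the", "and", "a", "an", "is", "are", "to", "of", "in"}

def crude_stem(token):
    """Strip a few common English suffixes. A toy stand-in for a real stemmer."""
    for suffix in ("ing", "ed", "s"):
        # Only strip when at least three characters of stem remain.
        if token.endswith(suffix) and len(token) - len(suffix) >= 3:
            return token[: -len(suffix)]
    return token

def preprocess(text):
    """Lowercase, drop punctuation, tokenize, remove stop words, then stem."""
    text = text.lower()
    text = re.sub(r"[^a-z0-9\s]", " ", text)   # remove symbols and punctuation
    tokens = text.split()                        # whitespace tokenization
    tokens = [t for t in tokens if t not in STOP_WORDS]
    return [crude_stem(t) for t in tokens]

result = preprocess("The cats are chasing the mice, and the dog barked!")
print(result)  # ['cat', 'chas', 'mice', 'dog', 'bark']
```

The output shows a typical side effect of stemming: "chasing" becomes "chas", which is not a dictionary word. Lemmatization would return "chase" instead, at the cost of needing a vocabulary lookup.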

Handling Text with NLTK and Pandas

NLTK (Natural Language Toolkit) is widely used for precise text processing. It simplifies tokenization, stemming, and lemmatization, making these tasks easier.

For instance, with NLTK, one can efficiently manage stop-word removal and perform lemmatization to ensure the data is cleaner and more relevant for analysis. NLTK also offers pre-built lists of stop-words that streamline the cleanup process.

Pandas is equally vital. It handles data in structured formats like data frames, allowing for easy manipulation and organization.

By using Pandas, users can convert text data into a form that can be analyzed using Naïve Bayes, assisting in merging and organizing datasets efficiently before proceeding with classification.
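As a rough sketch of how the two libraries fit together, the snippet below keeps the texts in a Pandas data frame and cleans them with NLTK. The example rows are made up, and a small inline stop-word list is used (instead of NLTK's downloadable list) so that the sketch runs without fetching any NLTK corpora.

```python
import pandas as pd
from nltk.stem import PorterStemmer
from nltk.tokenize import wordpunct_tokenize

# Made-up example rows; in practice these would be loaded from a file.
df = pd.DataFrame({
    "text": ["Win a FREE prize now!", "Meeting moved to Monday"],
    "label": ["spam", "ham"],
})

# Small inline stop-word list (an assumption of this sketch).
stop_words = {"a", "to", "the", "and"}
stemmer = PorterStemmer()

def clean(text):
    """Tokenize, keep alphabetic non-stop-word tokens, and stem them."""
    tokens = wordpunct_tokenize(text.lower())
    return [stemmer.stem(t) for t in tokens if t.isalpha() and t not in stop_words]

# Store the cleaned tokens alongside the original text and label.
df["tokens"] = df["text"].apply(clean)
print(df[["label", "tokens"]])
```

Keeping the raw text, the label, and the cleaned tokens in one data frame makes it easy to filter, merge, or split the data later without losing track of which tokens belong to which label.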

Feature Engineering and Selection

The goal of feature engineering is to transform raw data into useful features that improve model performance.

For text classification, important features might include term frequency, inverse document frequency (TF-IDF), or even word embeddings.

Selecting the most informative features is crucial as it impacts the model’s speed and accuracy.

Selecting features involves creating a vocabulary of unique words from the training data. Techniques like TF-IDF help weigh terms by their importance, and this weight is used to decide which features are incorporated into the model.

Careful engineering and selection ensure that the classifier can make informed predictions based on the text data.

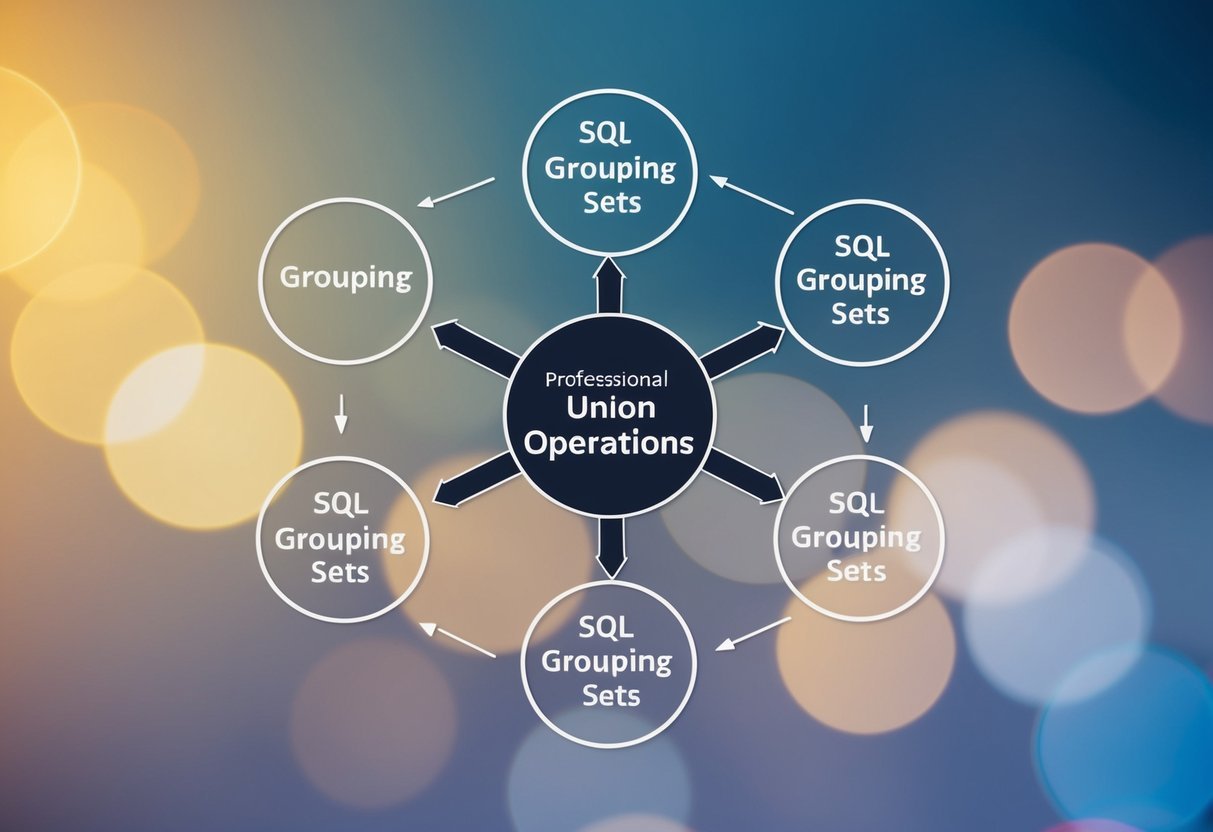

Algorithm Selection for Text Classification

Choosing the right classification algorithm is crucial for achieving the best results in text classification tasks. It involves evaluating various models like Naïve Bayes, support vector machines, and neural networks to determine which one fits the specific data set and task requirements.

Comparing Naïve Bayes with Other Models

Naïve Bayes classifiers, particularly the Multinomial Naïve Bayes, are popular for text classification due to their simplicity and efficiency. They work well with large feature spaces and are effective when the data is represented as frequency counts. These models assume that features are independent given the class, which keeps training and prediction fast even when the vocabulary is very large.

In comparison, support vector machines (SVM) and logistic regression provide robust alternatives.

SVM is powerful for text categorization tasks with high-dimensional data. It works by finding a hyperplane that best separates data classes.

Logistic regression, on the other hand, predicts the probability that a text belongs to a particular category. Both models handle binary and multiclass classification, as Naïve Bayes does. Their main difference is that they are discriminative models that do not rely on the feature-independence assumption, which can help when words are strongly correlated.

When to Use Multinomial Naïve Bayes

Multinomial Naïve Bayes is specifically suited for classification tasks where the data consists of word counts. It performs exceptionally well in dealing with problems like document classification and spam filtering.

Its efficiency in computation makes it ideal for projects with time and resource constraints.

It is particularly beneficial when classifying text into multiple categories, thanks to its ability to handle multiclass problems effectively. Although it assumes word features are independent, which isn’t always true in text data, this assumption simplifies computation and often still yields good results. Multinomial Naïve Bayes shines with large datasets where simplicity and speed are priorities.

Programming Naïve Bayes Classifiers

Naïve Bayes classifiers are simple yet effective methods for classification tasks. They are widely used in fields like natural language processing (NLP) to categorize text. This section explores how to implement these classifiers using Python, focusing on popular libraries like Scikit-Learn.

Implementing Naïve Bayes with Python

Implementing a Naïve Bayes classifier in Python involves understanding the algorithm’s theory and applying it practically. One of the most common libraries for this task is NLTK. It helps tokenize, lemmatize, and categorize text data efficiently. Users can also utilize NumPy for handling numerical data and performing necessary calculations.

To start, loading datasets is vital. Python’s flexibility allows for importing many file types, including CSV or JSON.

The basic steps involve cleaning the data, usually by removing stopwords and performing tokenization. After preprocessing, the data is split into training and testing sets. By doing this, the model can learn patterns and make predictions on unseen data.

An initial implementation might involve coding the algorithm from scratch, calculating the conditional probabilities of terms given a class. Libraries like NLTK simplify these tasks, providing pre-built methods for tasks like tokenization and classification.
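A from-scratch version might look like the following sketch. It assumes documents are already tokenized, uses add-one (Laplace) smoothing by default, and skips words at prediction time that never appeared in training. The function names `train_nb` and `predict_nb` and the toy data are invented for this illustration.

```python
import math
from collections import Counter, defaultdict

def train_nb(docs, labels, alpha=1.0):
    """Estimate log priors and smoothed log likelihoods from tokenized documents."""
    class_counts = Counter(labels)
    word_counts = defaultdict(Counter)          # class -> word -> count
    for tokens, label in zip(docs, labels):
        word_counts[label].update(tokens)
    vocab = {w for counts in word_counts.values() for w in counts}

    log_prior = {c: math.log(n / len(labels)) for c, n in class_counts.items()}
    log_likelihood = {}
    for c in class_counts:
        total = sum(word_counts[c].values())
        denom = total + alpha * len(vocab)      # smoothed denominator
        log_likelihood[c] = {
            w: math.log((word_counts[c][w] + alpha) / denom) for w in vocab
        }
    return log_prior, log_likelihood, vocab

def predict_nb(tokens, log_prior, log_likelihood, vocab):
    """Return the class with the highest log posterior; unseen words are skipped."""
    scores = {}
    for c in log_prior:
        scores[c] = log_prior[c] + sum(
            log_likelihood[c][w] for w in tokens if w in vocab
        )
    return max(scores, key=scores.get)

# Made-up training data.
train_docs = [["free", "prize", "win"], ["win", "money", "free"],
              ["meeting", "project", "update"], ["project", "schedule", "meeting"]]
train_labels = ["spam", "spam", "ham", "ham"]

model = train_nb(train_docs, train_labels)
print(predict_nb(["free", "money"], *model))       # spam
print(predict_nb(["project", "meeting"], *model))  # ham
```

Working in log space turns the product of probabilities into a sum, which avoids numerical underflow on long documents.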

Utilizing Scikit-Learn for Classification

Scikit-Learn is a powerful library for implementing machine learning models, including Naïve Bayes classifiers. It offers efficient tools that streamline the process of creating classification models.

To utilize Scikit-Learn, users can start by importing necessary modules, such as MultinomialNB for multinomial data. Datasets are loaded and preprocessed similarly, split into training and test sets.

Scikit-Learn makes it easy to train the classifier using its fit method on the training data.

Model evaluation is also straightforward. The predict method generates predictions for the test set, and comparing them with the true labels (for example with accuracy_score from sklearn.metrics) gives the model’s accuracy. Scikit-Learn also offers tools for cross-validation and further performance metrics, providing insights into how well the model generalizes to new data. This makes Scikit-Learn a convenient choice for those aiming to quickly build and test Naïve Bayes classifiers.
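The workflow described in this section can be sketched as follows. The texts and labels are made up, and the data is far too small for the resulting accuracy to mean anything; the point is only to show how the pieces connect.

```python
from sklearn.feature_extraction.text import CountVectorizer
from sklearn.metrics import accuracy_score
from sklearn.naive_bayes import MultinomialNB
from sklearn.pipeline import make_pipeline

# Made-up toy data; a real project would load and split a much larger dataset.
train_texts = ["win a free prize now", "free money win big",
               "project meeting at noon", "schedule update for the project"]
train_labels = ["spam", "spam", "ham", "ham"]
test_texts = ["free prize money", "meeting schedule"]
test_labels = ["spam", "ham"]

# CountVectorizer builds word-count features; MultinomialNB models those counts.
model = make_pipeline(CountVectorizer(), MultinomialNB())
model.fit(train_texts, train_labels)

predictions = model.predict(test_texts)
accuracy = accuracy_score(test_labels, predictions)
print(predictions)
print(accuracy)
```

Wrapping the vectorizer and the classifier in one pipeline ensures the test texts are transformed with the vocabulary learned from the training texts only.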

Enhancing Naïve Bayes with NLP Techniques

Improving naïve Bayes through specific NLP methods can significantly boost its efficiency and accuracy. This involves advanced feature extraction and handling techniques like TF-IDF and word embeddings, which help represent and categorize text data more clearly.

Integrating TF-IDF in Feature Extraction

Term Frequency-Inverse Document Frequency (TF-IDF) is vital for refining the input features for a naïve Bayes classifier.

Unlike simple bag-of-words models that count word occurrences, TF-IDF considers the importance of words in the context of the entire document set. It assigns higher weights to words that appear frequently in a few documents but not uniformly across all.

This approach helps in minimizing the impact of common words like “the” or “is,” which might otherwise dominate the classification process. It increases the classification model’s sensitivity to more relevant, topic-specific terms.

Implementing TF-IDF can enhance the capability of naïve Bayes by making the feature set more representative and focused, ultimately boosting the prediction accuracy.
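To make the weighting concrete, here is a small hand-rolled sketch using the textbook formula tf × log(N / df). Libraries such as scikit-learn use smoothed and normalized variants, so their numbers will differ; the documents below are made up.

```python
import math
from collections import Counter

# Made-up tokenized documents.
docs = [
    ["the", "match", "ended", "in", "the", "draw"],
    ["the", "election", "results", "are", "in"],
    ["the", "team", "won", "the", "match"],
]

n_docs = len(docs)
# Document frequency: in how many documents each word appears.
doc_freq = Counter(word for doc in docs for word in set(doc))

def tf_idf(doc):
    """Weight each word by its in-document frequency times log(N / df)."""
    counts = Counter(doc)
    return {
        w: (c / len(doc)) * math.log(n_docs / doc_freq[w])
        for w, c in counts.items()
    }

weights = tf_idf(docs[0])
print(weights["the"])    # 0.0, because "the" appears in every document
print(weights["match"])  # small positive weight: appears in two of three documents
print(weights["draw"])   # largest of the three: appears only in this document
```

Even though "the" is the most frequent word in the first document, its weight drops to zero because it carries no information about which document is which.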

Leveraging Word Embeddings for Naïve Bayes

Word embeddings like word2vec provide another layer of depth for improving naïve Bayes classifiers. They move beyond simple vectorization, capturing semantic meanings by representing words as dense vectors in a high-dimensional space.

This technique is more informative compared to binary or frequency-based vectorization methods.

By converting words into word vectors, embeddings can capture context and relationships between words. This is useful in handling synonyms and polysemantic words effectively.

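Because embedding values are continuous and can be negative, they cannot be fed directly to Multinomial Naïve Bayes, which expects non-negative counts; a variant such as Gaussian Naïve Bayes is a better fit. The independence assumption then applies to the embedding dimensions, but documents with similar wording end up with similar vectors, which can help the classifier generalize to words it has rarely seen.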

Other techniques, such as locality-sensitive hashing, are also noteworthy. They map similar documents to the same hash buckets with high probability, preserving similarity while reducing dimensionality.

Overall, word embeddings and these related techniques provide a valuable enhancement to traditional approaches, ensuring that texts are classified with greater accuracy and contextual understanding.
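As a rough illustration of combining embeddings with Naïve Bayes, the sketch below averages word vectors into one document vector and trains scikit-learn's `GaussianNB`, which accepts real-valued (including negative) features. The 3-dimensional vectors are invented for this example; real embeddings such as word2vec are learned from large corpora and have far more dimensions.

```python
import numpy as np
from sklearn.naive_bayes import GaussianNB

# Hypothetical word vectors, made up for this sketch.
embeddings = {
    "great": np.array([0.9, 0.1, 0.0]),
    "good":  np.array([0.8, 0.2, 0.1]),
    "awful": np.array([-0.9, 0.1, 0.0]),
    "bad":   np.array([-0.8, 0.2, 0.1]),
    "movie": np.array([0.0, 0.9, 0.3]),
    "film":  np.array([0.1, 0.8, 0.4]),
}

def doc_vector(tokens):
    """Average the vectors of known words (assumes at least one known word)."""
    vectors = [embeddings[t] for t in tokens if t in embeddings]
    return np.mean(vectors, axis=0)

train_docs = [["great", "movie"], ["good", "film"], ["great", "film"],
              ["awful", "movie"], ["bad", "film"], ["awful", "film"]]
train_labels = ["pos", "pos", "pos", "neg", "neg", "neg"]

X_train = np.array([doc_vector(d) for d in train_docs])
clf = GaussianNB().fit(X_train, train_labels)

# Neither test phrase appears in the training data as written.
pred = clf.predict([doc_vector(["good", "movie"]), doc_vector(["bad", "movie"])])
print(pred)
```

Averaging discards word order, so this is a deliberately simple way to get a fixed-length vector; it is shown here only because it pairs naturally with a Gaussian model.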

Assessing Classification Model Performance

Evaluating how well a model performs is crucial in machine learning.

Classification models, like Naïve Bayes, often use metrics to gauge effectiveness.

Understanding Confusion Matrix and Accuracy

A confusion matrix is a table used to describe how well a classification model performs. It compares actual and predicted values, detailing true positives, false positives, true negatives, and false negatives.

This helps identify where predictions go wrong.

Accuracy measures the percentage of correct predictions compared to the total number of predictions. While it’s useful, accuracy alone can be misleading if the data is imbalanced.

The confusion matrix helps provide a clearer picture by showing a detailed breakdown of model predictions.
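The following sketch shows why accuracy alone can mislead on imbalanced data. It uses made-up labels and a deliberately useless "model" that predicts the majority class every time; the helper name `confusion_counts` is introduced here for illustration.

```python
from collections import Counter

def confusion_counts(actual, predicted, positive="spam"):
    """Count true/false positives and negatives for one positive class."""
    counts = Counter()
    for a, p in zip(actual, predicted):
        if p == positive:
            counts["tp" if a == positive else "fp"] += 1
        else:
            counts["fn" if a == positive else "tn"] += 1
    return counts

# Made-up imbalanced data: 95 legitimate emails and 5 spam emails.
actual = ["ham"] * 95 + ["spam"] * 5
# A useless model that labels everything as "ham".
predicted = ["ham"] * 100

c = confusion_counts(actual, predicted)
accuracy = (c["tp"] + c["tn"]) / len(actual)
recall = c["tp"] / (c["tp"] + c["fn"])   # share of actual spam that was caught

print(c["tp"], c["fp"], c["tn"], c["fn"])   # 0 0 95 5
print(accuracy)                              # 0.95
print(recall)                                # 0.0
```

The model scores 95% accuracy while catching no spam at all, which the confusion-matrix counts make immediately visible.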

Handling Sparse and Imbalanced Data

Working with sparse and imbalanced data presents challenges in natural language processing and classification tasks.

Sparse data often leads to weak predictions, while imbalanced data can bias the model towards the majority class.

Addressing these issues requires specific techniques to enhance classification accuracy and robustness.

Techniques like Laplace Smoothing

Sparse data occurs when many features have zero or near-zero instances, reducing the effectiveness of the model.

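Laplace smoothing helps by adding a small constant (typically 1) to each feature’s count. This technique prevents the probability estimates from becoming zero, which is crucial in Naïve Bayes classification: because the per-word probabilities are multiplied together, a single zero would wipe out the score for an entire class.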

By ensuring all probabilities are non-zero, the model can make more reliable predictions despite limited training data.

This method is particularly useful in situations where certain word combinations in text data do not appear frequently, which is common in NLP tasks.

Properly handling sparse data improves model performance by keeping every probability estimate well-defined and non-zero.
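Written as a formula, the smoothed estimate commonly takes the form below. The symbols are introduced here for illustration: count(w, c) is how often word w occurs in documents of class c, V is the vocabulary, and α is the smoothing constant (α = 1 gives Laplace smoothing).

```latex
\hat{P}(w \mid c) = \frac{\operatorname{count}(w, c) + \alpha}
                         {\sum_{w' \in V} \operatorname{count}(w', c) + \alpha\,|V|}
```

For example, if a word never occurs in a class whose documents contain 100 tokens in total and the vocabulary has 50 words, then with α = 1 the estimate is (0 + 1) / (100 + 50) = 1/150 instead of 0.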

Training and Evaluating Naïve Bayes Models

Training Naïve Bayes models involves preparing appropriate datasets and evaluating model performance.

The process includes dividing data into training and testing sets, using cross-validation, and tuning hyperparameters for optimal results.

Creating Training and Testing Sets

To train a Naïve Bayes model, start by splitting the data into training and testing sets. The training set allows the model to learn from labeled examples, while the testing set evaluates its performance.

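A common practice is to allocate 70-80% of the data for training and the remainder for testing. Holding out a test set does not prevent overfitting, but it makes overfitting visible: a model that scores much better on the training data than on the held-out data has memorized rather than generalized.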

Random shuffling is often used when splitting data to ensure each set is representative. This helps the model to generalize well on unseen data.

Careful selection and preparation of these datasets are crucial for model accuracy and reliability.
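A split along these lines can be sketched with scikit-learn's `train_test_split`. The data below is made up, and the 75/25 ratio and the `stratify` option (which keeps class proportions the same in both sets) are illustrative choices rather than requirements.

```python
from sklearn.model_selection import train_test_split

# Made-up dataset of 20 labeled texts (16 "ham", 4 "spam").
texts = [f"message {i}" for i in range(20)]
labels = ["ham"] * 16 + ["spam"] * 4

X_train, X_test, y_train, y_test = train_test_split(
    texts, labels,
    test_size=0.25,     # hold out 25% for testing
    shuffle=True,       # randomize order before splitting
    stratify=labels,    # keep the ham/spam ratio equal in both sets
    random_state=42,    # fixed seed so the split is reproducible
)

print(len(X_train), len(X_test))                  # 15 5
print(y_train.count("spam"), y_test.count("spam"))  # 3 1
```

Stratifying matters most when one class is rare; without it, a random split could leave the test set with no examples of that class.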

Cross-Validation and Hyperparameter Tuning

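Cross-validation techniques like k-fold validation help in assessing model stability. By dividing the data into k subsets (folds), the model is trained k times, each time holding out a different fold for testing and training on the remaining k-1 folds.

This approach gives a more reliable performance estimate than a single train/test split, because every example is used for testing exactly once and the scores are averaged across folds.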

Hyperparameter tuning involves adjusting model parameters to improve performance. For Naïve Bayes, this may include modifying the smoothing parameter, which helps manage zero-probability issues.

Tuning is often automated with tools like grid search, balancing computational cost with the accuracy of predictions.

Proper evaluation and tuning are key to achieving high-performance models.
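As a sketch of how cross-validation and tuning combine, the snippet below uses scikit-learn's `GridSearchCV` to try several values of the smoothing parameter `alpha` with 5-fold cross-validation. The toy data is made up and repeated so that each fold contains both classes; because of that repetition, the scores here are not meaningful, and the grid of values is an arbitrary illustrative choice.

```python
from sklearn.feature_extraction.text import CountVectorizer
from sklearn.model_selection import GridSearchCV
from sklearn.naive_bayes import MultinomialNB
from sklearn.pipeline import Pipeline

# Made-up toy data, repeated so that each fold contains both classes.
texts = ["win free prize", "free money now", "project meeting today",
         "schedule update attached"] * 5
labels = ["spam", "spam", "ham", "ham"] * 5

pipeline = Pipeline([
    ("vect", CountVectorizer()),
    ("nb", MultinomialNB()),
])

# Candidate smoothing values for the "nb" step of the pipeline.
param_grid = {"nb__alpha": [0.1, 0.5, 1.0]}

search = GridSearchCV(pipeline, param_grid, cv=5, scoring="accuracy")
search.fit(texts, labels)

print(search.best_params_)
print(search.best_score_)
```

Putting the vectorizer inside the pipeline means the vocabulary is rebuilt from the training folds only in each round, so the held-out fold never leaks into feature extraction.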

Applications of Naïve Bayes in Real-world NLP

Naïve Bayes classification is an essential tool in natural language processing, widely used for tasks such as spam detection and sentiment analysis. It powers various applications by efficiently categorizing documents and identifying topics with accuracy and speed.

Spam Detection and Document Categorization

Naïve Bayes is often applied in spam detection, where it classifies emails as spam or not based on word frequency and patterns. This method uses probabilistic models to determine the likelihood of an email being spam. Factors like specific keywords and the overall structure of the email contribute to this decision.

Besides spam detection, Naïve Bayes excels in document categorization. It sorts content into predefined labels, making it useful for organizing vast amounts of data.

In this setup, documents are analyzed and assigned categories based on word appearance probabilities.

Incorporating Naïve Bayes for these tasks helps streamline data management and improve efficiency in handling textual information.

Sentiment Analysis and Topic Classification

Sentiment analysis benefits significantly from Naïve Bayes by assessing the emotional tone in texts, such as reviews or social media posts. The algorithm calculates the probability of text expressing positive, negative, or neutral sentiments.

This application is crucial for businesses aiming to understand customer opinions.

For topic classification, Naïve Bayes identifies main themes within text collections. By examining the frequency of words related to specific subjects, it places documents into relevant topic groups.

This technique aids in content management, enabling easier navigation and insight extraction from large datasets.

Naïve Bayes, therefore, stands as a robust choice for analyzing text and extracting valuable information from it, enhancing both user experiences and organizational processes.

Advanced Topics in NLP and Naïve Bayes

Natural Language Processing (NLP) has witnessed a dramatic shift from traditional methods to advanced technologies like deep learning and neural networks. Understanding these developments, including the pros and cons of different techniques such as Naïve Bayes, is crucial for anyone pursuing a natural language processing specialization.

Deep Learning vs. Naïve Bayes for NLP

Deep learning has revolutionized NLP by using complex models like neural networks. These models excel in tasks like machine translation and sentiment analysis. They process large datasets, capturing intricate language patterns.

BERT, a significant deep learning model, has enhanced language understanding by improving context awareness.

Naïve Bayes, while simpler, remains useful for specific tasks. It is often good for text classification when computational resources are limited.

Naïve Bayes works well when speed is critical, but it may not match the accuracy of more complex models. For projects that don’t require deep layers, Naïve Bayes offers a practical, efficient choice, especially for beginners in NLP.

Evolution of NLP: From Rule-Based to Neural

NLP’s journey from rule-based approaches to neural networks marks a significant evolution. Originally dependent on handcrafted rules, early systems struggled with context and complexity.

This changed with statistical methods, allowing algorithms to learn patterns from data.

Today, modern NLP leans heavily on neural networks and deep learning. These techniques allow for better handling of language nuances and context.

Neural networks, such as those used in BERT, let models represent each word in light of its surrounding context, something earlier methods handled poorly.

This evolution has made machine translation and other advanced NLP tasks more accurate and efficient, underscoring the field’s dynamic progress.

Best Practices in Naïve Bayes and NLP Integration

When integrating Naïve Bayes with Natural Language Processing (NLP), ensuring feature independence and managing limited training data are crucial. Carefully addressing these aspects enhances the effectiveness and reliability of classification models.

Maintaining Feature Independence

In Naïve Bayes, assuming feature independence simplifies computations but can affect performance if not properly managed.

Feature independence assumes that, within a given class, the presence of a word in a document is unrelated to the presence of any other word. This is ideal in theory, but in practice, dependencies between words often exist.

Preprocessing cannot make words truly independent, but techniques like stop-word removal and stemming reduce noise and merge redundant, highly correlated features (such as different forms of the same word), which limits how often the same evidence is counted twice.

Using a feature selection method helps in choosing the most relevant features to strengthen the model’s ability to predict the class variable accurately while maintaining computational efficiency.

Overcoming Challenges with Limited Training Data

Limited training data can hinder the performance of a Naïve Bayes classifier as it relies on calculating prior and conditional probabilities.

Data augmentation and synthetic data generation can help in expanding the training dataset, thus alleviating the problem of limited data.

Another approach is to leverage semi-supervised learning, where a small amount of labeled data is combined with a large amount of unlabeled data.

Techniques such as cross-validation ensure that the classifier’s performance is consistent and reliable across different data subsets.

Employing such strategies helps in improving accuracy while using minimal labeled data.

Links: For more on naive bayes in NLP, see Applying Multinomial Naive Bayes to NLP Problems.

Frequently Asked Questions

Naive Bayes classifiers are powerful tools in natural language processing and other types of data analysis. This section covers common questions about their application to text classification, foundational principles, differences between model types, and implementation in Python.

How is the Naive Bayes classifier applied to text classification problems?

The Naive Bayes classifier processes text data by assessing the likelihood that a given piece of text belongs to a certain category. It does this based on the frequency of words in training data. This method is commonly used for tasks like spam detection and sentiment analysis.

What are the underlying principles of the Naive Bayes algorithm in machine learning?

Naive Bayes relies on Bayes’ theorem, which calculates the probability of a hypothesis based on prior knowledge. It assumes independence between features, meaning each word contributes independently to the probability of the category. This simplification allows the algorithm to be efficient and fast.

Can you give an example of using the Naive Bayes classifier for numerical data analysis?

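While commonly used for text, Naive Bayes can also handle numerical data. For instance, it can classify data into categories based on measurements like temperature and humidity. Continuous data can either be turned into categorical variables using techniques such as binning, or modeled directly with Gaussian Naive Bayes, which assumes each feature follows a normal distribution within each class.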
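A minimal sketch with scikit-learn's `GaussianNB` might look like this. The measurements and the "dry"/"rainy" labels are invented for illustration.

```python
from sklearn.naive_bayes import GaussianNB

# Made-up measurements: [temperature in degrees Celsius, relative humidity in %].
X = [[30, 20], [32, 25], [28, 30], [18, 80], [20, 85], [17, 90]]
y = ["dry", "dry", "dry", "rainy", "rainy", "rainy"]

# GaussianNB fits a mean and variance per feature and class.
clf = GaussianNB().fit(X, y)

pred = clf.predict([[29, 22], [19, 88]])
print(pred)  # ['dry' 'rainy']
```

No binning is needed here because the Gaussian variant works with the continuous values directly.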

How does Multinomial Naive Bayes differ from other types of Naive Bayes models?

Multinomial Naive Bayes is specially designed for text classification. It is based on word frequency counts in documents. Unlike other types, such as Gaussian Naive Bayes, which is used for continuous data, Multinomial Naive Bayes excels in handling data with discrete counts like word occurrences.

What role does Bayes’ theorem play in NLP classification tasks?

Bayes’ theorem calculates the probability of a hypothesis given observed evidence. In NLP, this can mean predicting the likelihood of a sentence belonging to a specific sentiment category given the words it contains. The theorem combines how common each category is in the training data with how likely the observed words are under that category.

In what ways can Python be used to implement a Naive Bayes classifier for NLP?

Python offers libraries like Scikit-learn for implementing Naive Bayes classifiers. These libraries provide built-in functions that simplify applying algorithms to text data.

By using Python, developers can preprocess data, train models, and evaluate accuracy efficiently.